Knowledge Architecture in GEO: Key Findings

- GEO fails when treated as a content problem, because AI visibility depends on structured knowledge, not writing quality

- Inconsistent entities and disconnected signals weaken brand recognition, as AI systems rely on a clear, unified identity to trust and cite sources

- Brands must build a coherent knowledge architecture by aligning schema, internal linking, and cross-platform entity consistency to become usable by AI systems

Most brands starting a generative engine optimization (GEO) strategy do the same thing: They audit their top-performing blog posts, drop in a few statistics, tweak the headings, and call it done.

It feels productive. It looks like progress. But for the most part, it won’t work.

That’s not because content quality is irrelevant. It’s critical.

The issue is that brands that treat GEO as a content problem are solving for the wrong thing.

Visibility in generative AI search engine responses isn’t primarily a writing challenge; it’s an information architecture challenge.

Until that distinction becomes clear, most GEO efforts will produce underwhelming results while the underlying problem goes unaddressed.

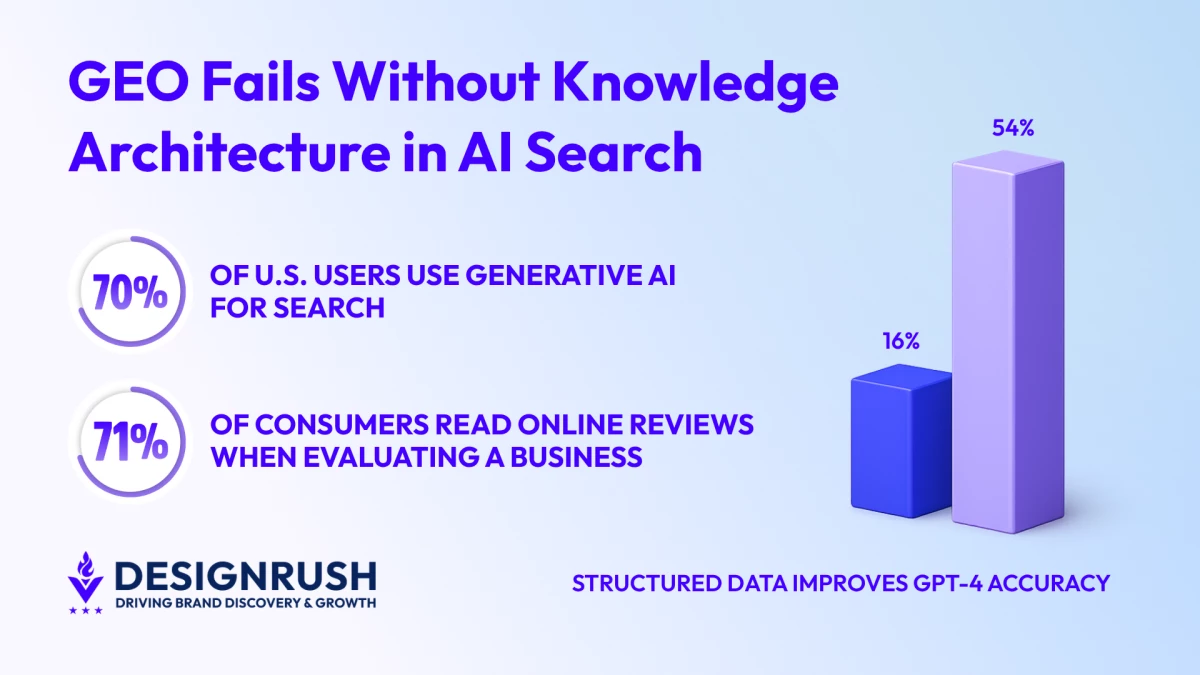

This is becoming harder to ignore. With more than 70% of U.S. users now using generative AI for search, according to Wellows, how visibility is earned and which brands surface first are both changing.

Editor's Note: This is a sponsored article created in partnership with Intero Digital.

What Generative Engines Actually Need

To understand why architecture matters more than content tweaks, it helps to understand what happens when an AI engine processes a query.

It doesn’t read a page the way a human does. It tokenizes the content, converts it into vector representations, and matches it against what it already understands about entities, relationships, and authority.

If headings lack semantic hierarchy (e.g., out-of-order H tags) or entities aren’t consistently marked up, the model can treat that data like a malformed payload and drop it from the response set.

Put differently: A well-written article on a poorly structured site is like a well-argued court case with no evidence submitted. The reasoning may be sound, but the system can’t act on it.

In fact, insights suggest that GPT-4’s share of correct responses rises from 16% to 54% when content relies on structured data.

That isn’t a marginal improvement from a content tweak; it’s a structural upgrade that changes whether a brand is usable by AI at all.
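The out-of-order H tags mentioned above are one of the easier structural problems to check for. The following is a minimal sketch, not a description of how any AI engine actually parses pages: it uses Python's standard `html.parser` to flag headings that jump down more than one level. The function name `find_heading_skips` and the sample markup are assumptions made for illustration.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Collects the level (1-6) of every h1..h6 tag in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag names, so "H2" arrives as "h2".
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def find_heading_skips(html):
    """Return (previous_level, level) pairs where a heading skips a level.

    A page that opens with anything other than an h1 is reported with a
    previous level of 0.
    """
    collector = HeadingCollector()
    collector.feed(html)
    skips = []
    previous = 0
    for level in collector.levels:
        if level > previous + 1:
            skips.append((previous, level))
        previous = level
    return skips


# Hypothetical page fragment: the h3 appears before any h2.
sample = "<h1>GEO guide</h1><h3>Schema</h3><h2>Entities</h2>"
skipped = find_heading_skips(sample)
```

Here `skipped` holds one entry for the jump from h1 straight to h3. Moving back up the hierarchy (h3 to h2) is not flagged, since that is how a new section normally starts.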

The Entity Problem Most Teams Are Ignoring

Entities (the specific named concepts that AI systems use as units of knowledge) are the building blocks of machine-readable content.

A brand that refers to its product as “our platform” on one page, “the tool” on another, and by its actual product name on a third is not only being inconsistent but also fragmenting its own identity inside the AI’s knowledge model.

Inconsistent naming confuses the AI’s understanding pipeline, which can cause an entity to fragment across multiple weaker signals.

The result is that the brand becomes harder to recognize, harder to trust, and less likely to be cited, not because its content is poor but because the system can’t reliably identify what it is.

Entity consistency across the web, including website structured data, social profiles, review platforms, and Wikidata entries, is critical for AI engines to recognize a brand as a single, authoritative entity.

This is the unglamorous infrastructure work that many content-focused GEO strategies skip entirely.
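A first pass at this infrastructure work can be as simple as counting how each page refers to the product. The sketch below assumes page text has already been extracted into a dictionary keyed by URL path. The product name "Acme Insights", the alias list, and the sample pages are all hypothetical.

```python
import re


def count_mentions(pages, canonical_name, generic_aliases):
    """For each page, count canonical-name mentions versus generic aliases."""
    report = {}
    for url, text in pages.items():
        lowered = text.lower()
        canonical = len(re.findall(re.escape(canonical_name.lower()), lowered))
        generic = sum(
            len(re.findall(r"\b" + re.escape(alias.lower()) + r"\b", lowered))
            for alias in generic_aliases
        )
        report[url] = {"canonical": canonical, "generic": generic}
    return report


def pages_missing_canonical(report):
    """Pages that mention the product only through generic aliases."""
    return sorted(
        url
        for url, counts in report.items()
        if counts["canonical"] == 0 and counts["generic"] > 0
    )


# Hypothetical site content, mirroring the naming drift described above.
pages = {
    "/pricing": "Our platform scales with your team.",
    "/features": "The tool integrates with your CRM.",
    "/product": "Acme Insights is an analytics product.",
}
report = count_mentions(pages, "Acme Insights", ["our platform", "the tool"])
needs_fix = pages_missing_canonical(report)
```

In this example `needs_fix` lists `/features` and `/pricing`, the two pages that never use the product's actual name. A simple count like this says nothing about how an AI system resolves entities; it only surfaces the pages worth reviewing by hand.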

Schema Is the Machine-Readable Layer Brands Are Leaving Blank

Schema markup is where intent becomes machine-readable.

It’s the structured vocabulary that tells AI systems not only what a page says, but also what it is (whether it’s an article, a product, a service, an organization, or a person) and how all of those things relate to one another.

Organization schema should link to Person, Product, and Article schema where relevant, and the @id property should be used to bridge those schemas and create a coherent knowledge graph that AI can easily traverse.

Disconnected schema creates fragmented understanding and weaker signals. A brand that implements schema in isolation by tagging individual pages without connecting them into a coherent entity network is building rooms without a building.

Schema markup must focus on entity relationships: how content connects to broader knowledge graphs.

Using Person and Organization schema to establish author credentials and specialized schemas to signal topical expertise helps AI systems recognize and validate a brand’s authority signals.

This is what separates brands that appear in AI responses from brands that don’t.
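The @id linking described above can be sketched as a single JSON-LD graph in which each entity is declared once and referenced everywhere else by its identifier. This is one possible layout, not a required one. The domain, names, and URL fragments are hypothetical; `worksFor`, `brand`, `author`, `publisher`, and `about` are existing schema.org properties.

```python
import json

SITE = "https://www.example.com"  # hypothetical domain


def build_graph():
    """Build a JSON-LD graph whose nodes reference each other by @id."""
    org_id = f"{SITE}/#organization"
    author_id = f"{SITE}/team/jane-doe#person"
    product_id = f"{SITE}/products/acme-insights#product"
    return {
        "@context": "https://schema.org",
        "@graph": [
            {"@type": "Organization", "@id": org_id,
             "name": "Acme Analytics", "url": SITE},
            {"@type": "Person", "@id": author_id,
             "name": "Jane Doe", "worksFor": {"@id": org_id}},
            {"@type": "Product", "@id": product_id,
             "name": "Acme Insights", "brand": {"@id": org_id}},
            {"@type": "Article", "@id": f"{SITE}/blog/geo-basics#article",
             "headline": "GEO basics",
             "author": {"@id": author_id},
             "publisher": {"@id": org_id},
             "about": {"@id": product_id}},
        ],
    }


def unresolved_references(graph):
    """Return @id references that point at no node declared in the graph."""
    nodes = graph["@graph"]
    declared = {node["@id"] for node in nodes}
    missing = set()
    for node in nodes:
        for value in node.values():
            is_reference = isinstance(value, dict) and set(value) == {"@id"}
            if is_reference and value["@id"] not in declared:
                missing.add(value["@id"])
    return sorted(missing)


graph = build_graph()
dangling = unresolved_references(graph)
# Serialized form, ready for a <script type="application/ld+json"> tag.
markup = json.dumps(graph, indent=2)
```

The `unresolved_references` check is the code equivalent of "rooms without a building": any reference it returns points to an entity that was never declared, which is the disconnected-schema problem in miniature.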

Internal Linking as a Knowledge Signal

Internal linking has always mattered for SEO. But in a GEO context, its role shifts.

It’s no longer primarily about passing PageRank. It’s about teaching an AI system how concepts within a site relate to one another and, by extension, how authoritative that site is on a given topic.

AI engines follow internal linking connections to understand topical relationships and authority clusters.

A hub-and-spoke architecture, where a core topic page links to and from a network of related subtopic pages, creates a map that generative systems can traverse to evaluate the depth and coherence of a brand’s expertise.

Without that map, even excellent individual pieces of content sit as isolated nodes.

The AI can find them, but it can’t place them within a broader understanding of what the brand actually knows. Isolated nodes rarely become trusted citations.
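A hub-and-spoke structure can be audited from a crawl export. The sketch below assumes internal links are available as a mapping from each page to the set of pages it links to. The URLs are hypothetical, and the checks only cover the two-way hub links and inbound-link isolation described above.

```python
def audit_cluster(links, hub, spokes):
    """Report spokes that lack a link from the hub or a link back to it."""
    issues = {}
    for spoke in spokes:
        problems = []
        if spoke not in links.get(hub, set()):
            problems.append("hub does not link to spoke")
        if hub not in links.get(spoke, set()):
            problems.append("spoke does not link back to hub")
        if problems:
            issues[spoke] = problems
    return issues


def isolated_pages(links):
    """Pages that receive no internal link from any other page."""
    linked_to = set()
    for source, targets in links.items():
        linked_to.update(target for target in targets if target != source)
    return sorted(page for page in links if page not in linked_to)


# Hypothetical crawl: page -> set of internal pages it links to.
links = {
    "/geo": {"/geo/schema", "/geo/entities"},
    "/geo/schema": {"/geo"},
    "/geo/entities": set(),
    "/geo/internal-links": {"/geo"},
}
issues = audit_cluster(
    links, "/geo", ["/geo/schema", "/geo/entities", "/geo/internal-links"]
)
orphans = isolated_pages(links)
```

In this sample, `/geo/entities` never links back to the hub, and `/geo/internal-links` is both missing from the hub and reported in `orphans`, which makes it the isolated node in the cluster.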

Source Consistency Across the Open Web

Here’s the piece that catches most teams off guard: GEO isn’t just about what lives on a brand’s own website.

The same Wellows 2025 citation study, based on SE Ranking’s analysis of 129,000 domains, found that referring-domain authority is the strongest predictor of being cited in ChatGPT’s answers.

AI engines are cross-referencing a brand’s on-site signals against what the rest of the web says about it. Inconsistencies between the two create doubt, and AI systems, when in doubt, cite someone else.

That uncertainty shows up in user behavior as well, with Wellows adding that 71% of consumers read online reviews when evaluating a local business.

This makes consistent citation and review signals critical to how both search engines and AI systems determine trust.

This means the knowledge architecture work extends to Wikidata entries, Crunchbase profiles, LinkedIn pages, G2 listings, and every other authoritative platform where a brand has a presence.

Each of those properties should describe the brand using the same canonical language, the same product names, and the same organizational structure.

When they do, the AI’s confidence in citing that brand as a reliable entity increases. When they don’t, that confidence erodes.
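Checking that alignment can start with a plain comparison of each profile against one canonical record. The sketch below assumes the profile fields have been collected by hand or by export. The platform keys, field names, and brand values are hypothetical, and no platform API is called.

```python
def normalize(value):
    """Lowercase and collapse whitespace so cosmetic differences are ignored."""
    return " ".join(value.lower().split())


def find_mismatches(canonical, profiles):
    """Return (platform, field, found_value) for every field that drifts."""
    mismatches = []
    for platform, fields in profiles.items():
        for field, expected in canonical.items():
            actual = fields.get(field)
            if actual is None:
                mismatches.append((platform, field, "missing"))
            elif normalize(actual) != normalize(expected):
                mismatches.append((platform, field, actual))
    return mismatches


# Hypothetical canonical record and per-platform profile data.
canonical = {"name": "Acme Analytics", "product": "Acme Insights"}
profiles = {
    "linkedin": {"name": "Acme Analytics", "product": "Acme Insights"},
    "g2": {"name": "Acme Analytics Inc.", "product": "Acme Insights"},
    "crunchbase": {"name": "acme  analytics"},
}
mismatches = find_mismatches(canonical, profiles)
```

Here the G2 listing is flagged for the extra "Inc." and the Crunchbase profile for the missing product name, while the casing and spacing difference in the Crunchbase name is ignored. How strict the normalization should be is a judgment call for each brand.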

Why Brands Need Knowledge Architecture

The brands that are pulling ahead in GEO aren’t necessarily producing more content.

They’re producing content that sits on top of a coherent knowledge layer: consistent entities, connected schema, purposeful internal linking, and cross-platform source alignment.

That layer is what makes content usable by generative systems at scale.

GEO without knowledge architecture isn’t a strategy; it’s an optimization of the surface while the foundation goes unbuilt.

And in a landscape where AI engines are deciding which brands deserve to be cited, foundations are exactly what get evaluated first.