Enterprise AI Scale Gap: Key Findings

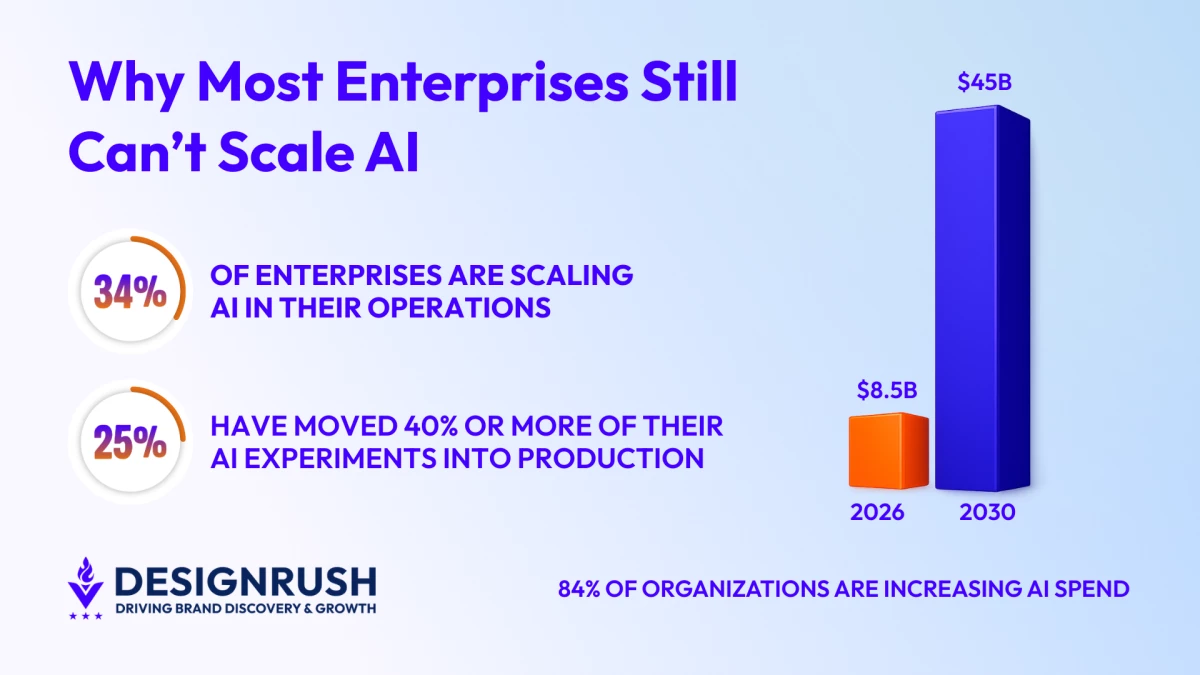

- Only 34% of enterprises are truly scaling AI, meaning that most organizations remain stuck in pilots or limited deployments despite rising investment and broader access.

- AI projects stall due to misalignment rather than model failure, as production exposes data gaps, integration challenges, and unclear ownership that pilots rarely address.

- Scaling AI requires redesign and clear accountability, because organizations that align data infrastructure, embed AI into real workflows, and assign end-to-end ownership are the ones that move from experimentation to enterprise impact.

AI has moved from being a buzzword to being on the boardroom agenda.

However, beneath the headlines and enthusiasm, most enterprises are still trying to turn early AI experimentation into something that actually changes how the business operates.

Deloitte’s State of AI in the Enterprise 2026 report makes the gap visible: only 34% of enterprises say they are using AI to deeply transform their business.

Malay Parekh, CEO of Unico Connect, a leading global software development firm, finds this figure worrying because it shows a clear disconnect between AI’s perceived potential and its realized impact.

“That means two-thirds are still operating in early-stage pilots, limited automation, or contained use cases that have yet to reshape core operations,” Parekh said.

“The survey, conducted across thousands of business leaders, shows that while AI investment and interest are high, large-scale operational impact remains uneven.”

Editor's Note: This is a sponsored article created in partnership with Unico Connect.

Enterprise AI Adoption and Scaling by the Numbers

While companies intend to integrate AI into their operations, the gap between experimenting with it and scaling it across the enterprise is growing.

According to Deloitte’s report, worker access to AI tools grew by roughly 50% in a single year, expanding from under 40% of employees to nearly 60%.

Confidence is growing as well, with 84% of organizations increasing AI spending. This reinforces the idea that leadership sees long-term value in the technology.

However, adoption at scale tells a different story.

Only 25% of organizations have moved 40% or more of their AI experiments into production environments.

Meanwhile, 54% expect to reach that level of production maturity within the next three to six months.

This aligns with broader market signals: according to the World Economic Forum, the global agentic AI market is projected to grow from about $8.5 billion in 2026 to roughly $45 billion by 2030.
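As a rough check on what those two market figures imply, the compound annual growth rate can be worked out directly. The sketch below assumes point values of exactly $8.5 billion for 2026 and $45 billion for 2030, although the source gives both as approximations, so the result is indicative only. The function name `implied_cagr` is illustrative and not taken from any report.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate that takes start_value to end_value in `years` years."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Assumed point estimates, in billions of dollars, from the approximate figures above.
cagr = implied_cagr(8.5, 45.0, 2030 - 2026)
print(f"Implied annual growth: {cagr:.1%}")
```

Under these assumptions, the projection works out to growth of a little over 50% a year, compounded across four years.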

While these figures show promise, they also reveal a split-screen reality.

On one side, AI budgets and ambitions are growing. On the other, true enterprise scale remains concentrated among a smaller group that has solved for infrastructure, ownership, and operational alignment.

Why Enterprise AI Fails to Scale Beyond the Pilot

On paper, many AI pilots look successful. AI models perform well, stakeholders are impressed, and there is visible momentum. But production changes the equation.

“The most common reason is a misalignment between what the PoC was designed to prove and what production actually demands,” Parekh said.

Once the project leaves the safety of the pilot phase and enters production, things feel very different.

Suddenly, teams are dealing with data that isn’t neatly cleaned, systems that were built years before AI was even a consideration, layers of compliance and audit scrutiny, and, hardest of all, the task of convincing people to change how they work.

Those realities rarely show up in a proof of concept. So the excitement that carried the pilot forward can quickly give way to slowdowns, workarounds, and unexpected resistance.

There is also a quieter issue that tends to slow things down.

More often than not, the person who started the pilot isn’t the one still there when the system finally goes live. And when responsibility shifts, the new leader brings their own priorities, pressures, and perspective on what success should actually look like.

“If there isn’t clear ownership from start to finish, progress slowly loses steam. Projects rarely collapse because the technology stops working. They drift because no one is consistently driving them forward,” Parekh said.

In other words, scaling AI is rarely a model problem. It is an alignment problem.

The Critical Decisions That Determine Enterprise AI Scale

Long before an AI system reaches production, its fate is already being shaped.

Parekh said that three early decisions often determine whether an AI initiative scales or stalls:

1. Data sourcing

Proofs of concept built on curated or overly cleaned datasets tend to unravel when exposed to real production data. Using live source data from the start, even if messy, surfaces data quality and pipeline issues early while the stakes are still manageable.

2. Integration

AI systems that operate in isolation rarely gain traction. Clear alignment on how outputs connect to existing tools, such as ERPs, CRMs, or trusted dashboards, determines whether the solution fits into workflows or becomes something employees work around.

3. Success metrics

Teams that measure performance solely through model accuracy often miss business impact. Scalable AI ties evaluation to operational outcomes from day one, focusing on which decisions improve and how that improvement is measured.
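The difference between model accuracy and an operational outcome can be sketched in a few lines. Everything in this example is hypothetical: the `Decision` record, its fields, and the choice of resolution time as the business metric are assumptions made for illustration, not anything described by Parekh or Deloitte. The comparison is also deliberately naive and says nothing about causation.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Decision:
    prediction_correct: bool   # did the model output match the reviewed label?
    handled_with_ai: bool      # was the AI output actually used in the workflow?
    hours_to_resolve: float    # hypothetical operational outcome


def accuracy(decisions: list[Decision]) -> float:
    """Share of decisions where the model output was correct."""
    return sum(d.prediction_correct for d in decisions) / len(decisions)


def outcome_improvement(decisions: list[Decision]) -> float:
    """Relative reduction in mean resolution time when the AI output was used."""
    with_ai = [d.hours_to_resolve for d in decisions if d.handled_with_ai]
    without_ai = [d.hours_to_resolve for d in decisions if not d.handled_with_ai]
    if not with_ai or not without_ai:
        raise ValueError("need decisions both with and without AI to compare")
    baseline = mean(without_ai)
    return (baseline - mean(with_ai)) / baseline


# Made-up sample records, for illustration only.
sample = [
    Decision(True, True, 4.0),
    Decision(True, True, 6.0),
    Decision(False, False, 10.0),
    Decision(True, False, 10.0),
]
print(f"accuracy={accuracy(sample):.2f}, improvement={outcome_improvement(sample):.2f}")
```

Reporting both numbers side by side makes it harder for a team to declare success on accuracy alone while the workflow itself stays unchanged.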

“For executives, this reframes AI from a technology initiative into a business design exercise. Scaling is not about proving the model works. It is about proving the organization is ready,” Parekh said.

Why Automation Alone Does Not Deliver Enterprise AI Transformation

Many companies approach AI with a simple goal: to automate what already exists.

That instinct, however, can limit impact.

“The honest answer is that we start by slowing down,” Parekh said.

“Clients often come to us wanting to automate what they already do. Our job is to help them question whether what they already do is the right thing to automate in the first place.”

Process mapping often reveals a gap between how workflows are documented and how they actually operate. The real process usually extends well beyond the official documentation, which rarely reflects how work actually gets done.

Once teams examine the informal steps, the workarounds, and the judgment calls that happen in real time, it becomes much clearer where AI can meaningfully reduce friction and where it might simply introduce complexity in a new form.

Redesign is collaborative by necessity.

“We don't hand clients a blueprint, but build it with the people who actually do the work,” Parekh said.

“Teams that help design an AI-integrated workflow are far more likely to trust it and use it consistently than those who have something imposed on them.”

The goal here isn't to automate tasks.

It's to redesign the process so that the people involved are spending their time on the parts that genuinely need human judgment, and the AI handles everything else.

That distinction matters because while automation improves efficiency, redesign changes outcomes.

The Infrastructure and Governance Barriers Blocking AI Scale

Scaling AI has a way of revealing the cracks an organization has been able to ignore.

On the technical side, data infrastructure is often the biggest constraint.

Many enterprises sit on years of valuable operational data, but it lives in silos across different systems, formats, and naming conventions.

Effective AI systems can be built on top of a well-governed data layer.

However, without that foundation, every new initiative turns into a custom data engineering effort, driving up time, cost, and complexity.

Monitoring is another blind spot. Once AI systems are live, performance does not stay static. Input data shifts, edge cases accumulate, and assumptions erode.

Yet many teams only prioritize observability after something breaks in a visible way, rather than building it in from the start.
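One common way to build this kind of check in from the start is to compare live inputs against a reference sample captured at launch. The sketch below uses the Population Stability Index (PSI) for a single numeric feature. It is an illustrative choice rather than a method named in the source, and the equal-width binning, the `eps` floor, and the function name are all assumptions.

```python
import math


def population_stability_index(expected, actual, bins=10, eps=1e-6):
    """PSI between a reference sample and a live sample of one numeric feature."""
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    if hi == lo:
        raise ValueError("reference sample has no spread")
    width = (hi - lo) / bins

    def bin_shares(values):
        counts = [0] * bins
        for v in values:
            # Clamp values outside the reference range into the first or last bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # Floor each share at eps so empty bins do not produce log(0).
        return [max(c / len(values), eps) for c in counts]

    e, a = bin_shares(expected), bin_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Synthetic data: the live sample is shifted toward the upper half of the range.
reference = [i / 100 for i in range(100)]
live = [0.5 + i / 200 for i in range(100)]
print(population_stability_index(reference, live))
```

A frequently cited rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift, but those cut-offs are conventions and should be tuned for each feature and use case.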

Organizational clarity can be just as decisive as technical readiness.

AI initiatives often sit at the intersection of IT, operations, and business units, which makes them cross-functional by nature but also vulnerable to blurred accountability.

“AI systems that sit at the intersection of IT, operations, and business units tend to become everyone's problem and no one's responsibility,” Parekh said.

That is where scale either holds or unravels.

Ownership sustains momentum. Without a clear, accountable leader responsible not only for technical performance but also for business outcomes, even well-built systems slowly drift out of alignment.

How to Move Enterprise AI from Prototype to Production

One property management company illustrates what it takes to move beyond experimentation.

The organization was managing tenant communications, maintenance coordination, and reporting across multiple disconnected systems. AI had been tested internally, but only at a surface level.

The breakthrough came after mapping the actual operational workflow end-to-end.

That exercise uncovered duplicated tasks across systems, decisions that appeared subjective but were actually rule-based, and recurring data gaps that weakened reporting accuracy.

Rather than layering AI on top of the existing system, the workflow itself was redesigned.

Automated communication triggers were embedded directly into tenant interactions, and maintenance requests were categorized automatically.

Operational data was monitored for anomalies, and a built-in feedback loop allowed staff to flag incorrect outputs.
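The combination of automatic categorization and a staff feedback loop can be sketched as follows. This is not a description of the system that was actually built: the keyword rules, the category names, and the `FeedbackLog` class are hypothetical stand-ins chosen to keep the example self-contained.

```python
from collections import Counter

# Hypothetical keyword rules; a real deployment would derive these from the mapped workflow.
RULES = {
    "plumbing": ("leak", "pipe", "drain"),
    "electrical": ("outlet", "breaker", "light"),
    "hvac": ("heating", "air conditioning", "thermostat"),
}


def categorize(request_text: str) -> str:
    """Return the first category whose keywords appear in the request text."""
    text = request_text.lower()
    for category, keywords in RULES.items():
        if any(keyword in text for keyword in keywords):
            return category
    return "needs_review"  # no rule matched, so route to a person


class FeedbackLog:
    """Tracks how often staff flag a category's outputs as incorrect."""

    def __init__(self):
        self.total = Counter()
        self.flagged = Counter()

    def record(self, category: str, flagged_incorrect: bool = False) -> None:
        self.total[category] += 1
        if flagged_incorrect:
            self.flagged[category] += 1

    def flag_rate(self, category: str) -> float:
        n = self.total[category]
        return self.flagged[category] / n if n else 0.0


log = FeedbackLog()
log.record(categorize("Water leak under the kitchen sink"))
log.record("plumbing", flagged_incorrect=True)  # staff flagged this output
print(log.flag_rate("plumbing"))
```

A rising flag rate for one category is a signal to revisit that rule or model, which keeps the people doing the work in the loop after go-live.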

“What ultimately made the rollout successful was leadership involvement,” Parekh said.

“The operations lead participated from day one, not just as an approver but as a co-designer. By the time the system went live, adoption was not a hurdle because the team trusted what they had helped build.”

The Real Competitive Divide in Enterprise AI

The next phase of enterprise AI will not be defined by who experiments. It will be defined by who operationalizes.

Deloitte’s 34% figure is less a warning than a snapshot of how many enterprises have yet to implement AI effectively.

“While enterprises are investing, expanding access, and preparing for more advanced systems, scaling AI requires discipline in data, clarity in ownership, responsible AI development, and the courage to redesign how work actually gets done,” Parekh said.

The divide will not be between companies that tried AI and those that didn’t.

It will be between those that built around it and those that treated it like a side project. Scale will reward commitment, not curiosity.