AI Adoption and Use in Healthcare: Key Findings

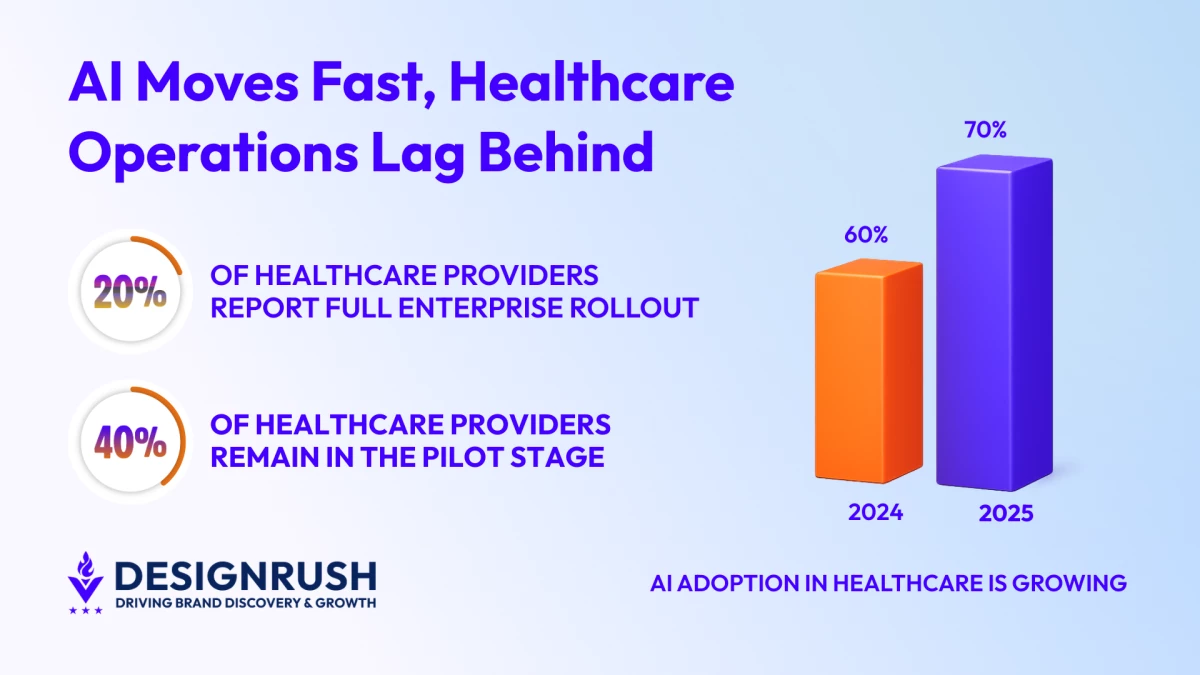

- 70% of healthcare providers have an AI strategy in place or in development, yet only about one in five report enterprise-wide rollout even of ambient documentation tools, signaling a gap between intent and execution.

- Infrastructure limits and unchanged workflows are slowing AI expansion, suggesting that operational discipline plays a critical role in whether new tools deliver measurable gains.

- Organizations that redesign workflows and formalize governance scale AI more effectively, suggesting that structure, not software, drives sustained performance.

A recent survey by Bain & Company and KLAS Research found that 70% of healthcare providers had an AI strategy in place or in development, up from 60% a year earlier.

Despite that acceleration, most organizations still struggle to use AI consistently across their operations.

Funmi Mide-Ajala is Director of Customer Support & Digital Operations at Hugo, an outsourcing partner that designs and runs operational teams for healthcare organizations and other high-complexity industries.

“The gap we see isn’t between organizations that want AI and those that don’t. It’s between organizations that restructured how work gets done and those that dropped AI into unchanged processes,” says Mide-Ajala.

AI Use Is Growing, but Not Across the Board

AI tools are already used across administrative workflows in hospitals and health systems, assisting physicians with documentation, supporting coding teams, reviewing prior authorizations, and helping manage denials.

The same Bain & Company and KLAS Research survey reveals the areas where AI use is highest, with providers reporting:

- 62% using AI for documentation support, including ambient listening and digital scribes

- 43% using it for clinical documentation improvement

- 30% using it for medical coding

Adoption drops sharply in adjacent operational areas.

Only about 27% report using AI for prior authorizations, according to the same research. That falls to 24% for risk adjustment and diagnosis capture and 19% for denial and appeal management.

This uneven distribution reflects where organizations feel safest deploying AI.

“AI shows up first where the work is standardized and measurable,” says Mide-Ajala.

“But the real operational and financial impact sits in the messy, exception-heavy workflows, and that’s where most teams aren’t ready to deploy it yet.”

AI tools are also widely used across routine U.S. healthcare functions, with applications ranging from patient support to care coordination.

Editor's Note: This is a sponsored article created in partnership with Hugo.

Why Healthcare AI Is Struggling to Scale

Even in high-adoption areas, scale remains limited. For ambient documentation tools, only about one in five providers report full enterprise rollout, while roughly two in five remain in the pilot stage.

Infrastructure constraints help explain why. In roughly 80% of measured healthcare organizations, fewer than 70% of clinicians agree their EHR system is fast.

Introducing AI into environments that clinicians already perceive as inefficient can slow scaling, especially if workflows are not redesigned alongside the technology.

“Most pilots succeed because someone hand-holds them,” says Mide-Ajala.

“The real test is whether the process still works when that person moves on. If it does, you’ve built an operation. If it doesn’t, you’ve built a demo.”

3 Ways to Move AI from Pilot to Production

If the first phase of healthcare AI was about adoption, the next phase is about execution discipline.

Mide-Ajala emphasizes that AI creates lasting value only when it is embedded in well-designed workflows. Technology alone does not transform operations; process design does.

Organizations that move successfully from pilot to production tend to focus on three areas:

1. Redesign Healthcare Workflows for AI Scale

“Leaders need to audit three things: where AI meaningfully reduces effort, where human validation is still non-negotiable, and which manual steps can be eliminated entirely,” Mide-Ajala says.

When AI is layered onto unchanged processes, duplication often follows.

But when workflows are redesigned around AI’s capabilities, simplification becomes possible.

The AMA’s vice president of Digital Health Innovations, Dr. Margaret Lozovatsky, has discussed why physicians must be involved in the development of the tools they use.

2. Define AI Ownership and Accountability

AI-generated outputs can contain errors and require verification. Clear ownership, defined review checkpoints, and structured escalation paths are essential to ensure compliance and consistency.

“The fastest way to erode trust in an AI tool is to make no one responsible for its output,” Mide-Ajala says.

Without careful oversight, teams cannot use AI tools reliably or consistently.

3. Make AI Governance Consistent

Pilot initiatives tend to work because they’re small and tightly managed.

At the enterprise level, scaling requires repeatable frameworks that can work across teams.

That means putting in place governance models, measurable performance indicators tied to AI-supported workflows, and structured rollout playbooks that allow organizations to expand consistently across departments.

“Scaling AI is an operations problem. Without shared standards, every team reinvents how AI is used, and performance becomes impossible to compare, let alone improve,” Mide-Ajala says.

That also means involving clinicians in how these tools are used, which the American Medical Association’s Dr. Margaret Lozovatsky argues is essential.

Scaling AI in Healthcare for Competitive Advantage

Healthcare leaders are shifting their attention from the number of AI tools deployed to whether those tools are making operations measurably better.

“In this next phase, competitive advantage will not belong to those who adopted AI first, but to those who operationalized it effectively,” Mide-Ajala says.

That’s harder to measure and slower to change than buying a new tool. But it’s what determines whether any of this sticks.
