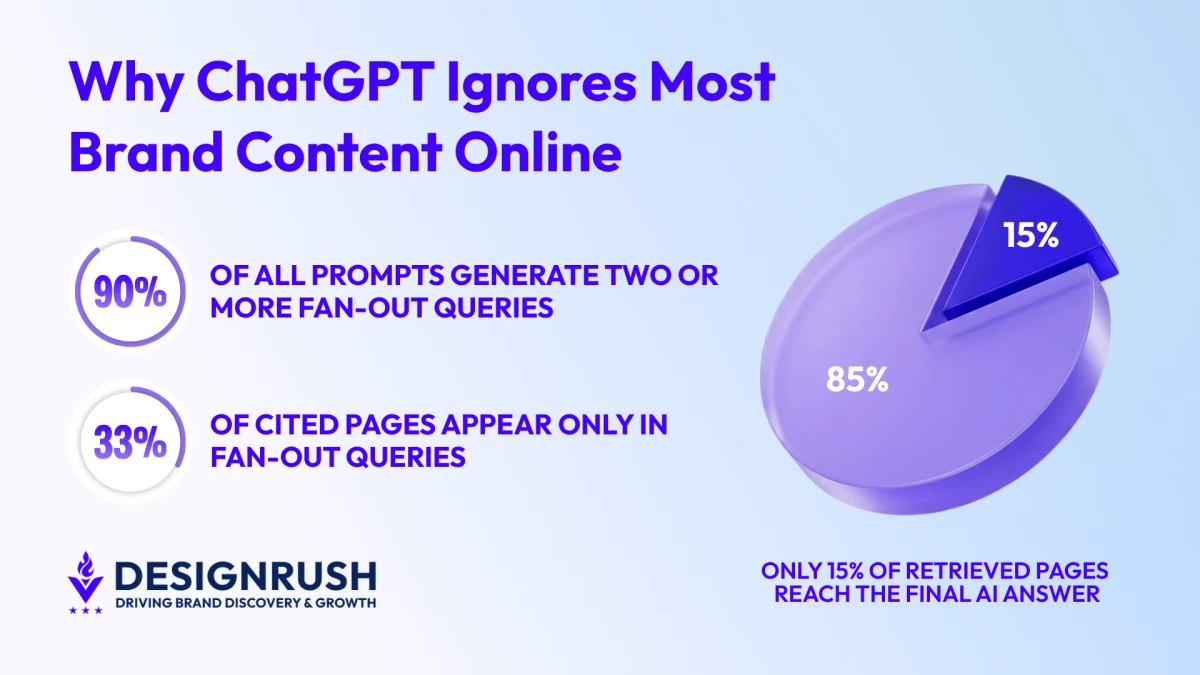

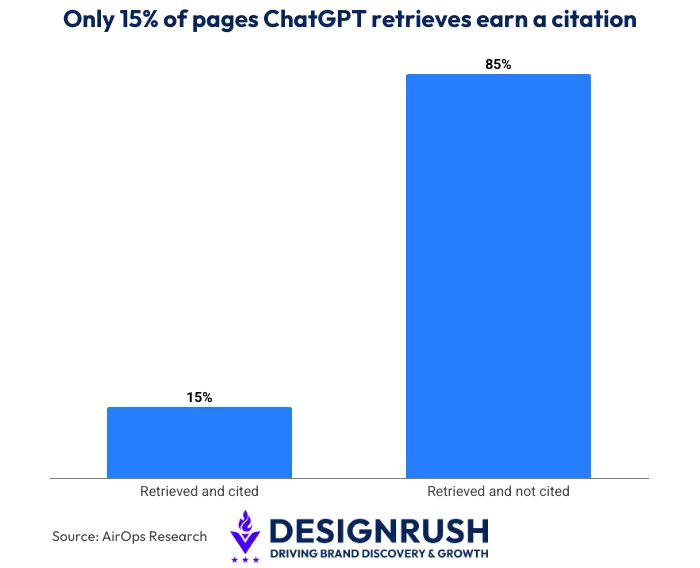

AI search is creating a new bottleneck between discovery and visibility, and a new AirOps analysis of 548,534 retrieved pages shows exactly where brands fall through it.

Only 15% of retrieved pages ever appear in a final answer. The other 85% are evaluated and discarded before the user sees anything.

For brands executing SEO strategies built around rankings and indexability, this reframes the problem entirely.

Today, the hard part is making sure your brand is the one selected from ChatGPT’s retrieval pool.

And understanding where content falls out of that process, and why, is now a core part of any content strategy.

Being Retrieved Is Not the Same as Being Seen

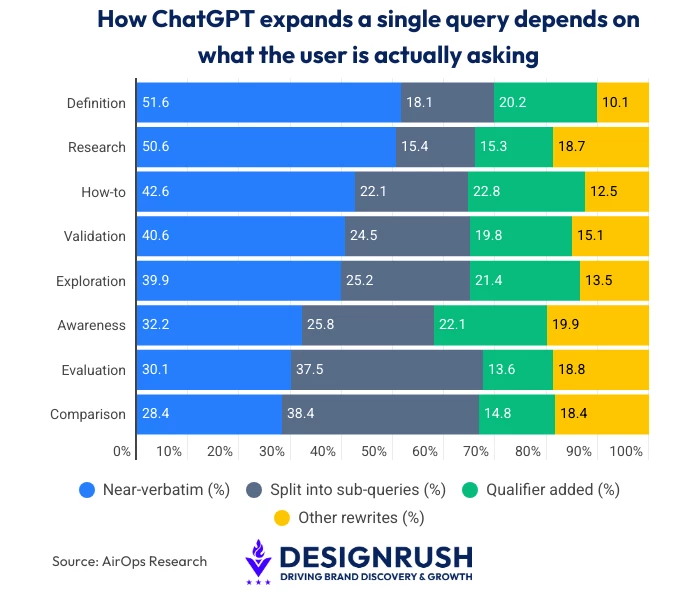

When a user submits a query, ChatGPT retrieves pages from multiple indexes, cites only a fraction of what it finds, and rarely stops at the original query.

- 89.6% of all prompts generated two or more fan-out queries, expanding 15,000 prompts into 43,233 total queries.

- 32.9% of cited pages appeared only in fan-out results, never through the original prompt at all.

- 95% of those queries have zero monthly search volume by traditional metrics, making them invisible to any standard keyword strategy.

Google rankings still matter. Position-one pages were cited 3.5 times more often than pages outside the top 20.

But even top-ranked pages miss the cut more than half the time.

Furthermore, a separate Ahrefs analysis found that 83.39% of retrieved pages didn't appear in Google's organic results at all, meaning the LLM is drawing from indexes well beyond Google.

It is a pattern that HigherVisibility, named Agency of the Year by Search Engine Land, tracks consistently across client work.

"Getting retrieved is just the starting point. Whether you actually show up in the answer is a different question entirely," said Scott Langdon, Managing Partner at HigherVisibility.

Rankings and traffic reports measure retrieval. They say nothing about what happens at the selection step, where most content exits quietly.

What Gets a Page Cited and What Gets It Dropped

The AirOps data show that not all retrieved pages are equal candidates for citation.

What determines selection comes down to three measurable signals that most content teams are not actively optimizing for.

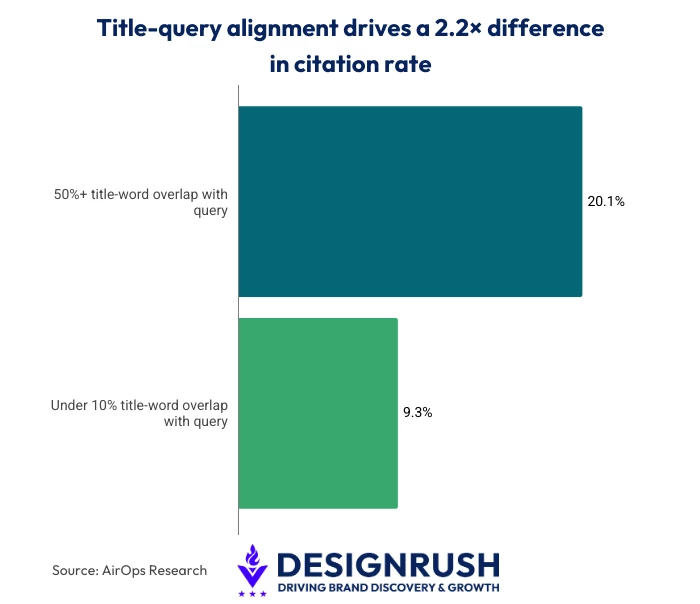

1. Title-Query Alignment

The clearest signal is how closely a page's title matches the query that triggered its retrieval.

Pages with 50% or more title-word overlap had a citation rate of 20.1%.

Pages with less than 10% overlap were cited just 9.3% of the time, a 2.2x difference driven by a title-level signal alone.

Page titles written for broad brand appeal rather than for specific question matching are leaving AI citations on the table.
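
For teams that want to check their own titles, here is a minimal Python sketch of a title-word overlap score. It rests on assumptions: the article does not say how AirOps computed overlap, so the definition below (the share of distinct title words that also appear in the query, after lowercasing and dropping a few filler words) is illustrative, and `STOPWORDS`, `tokenize`, and `title_query_overlap` are hypothetical names.

```python
import re

# Hypothetical filler-word list for illustration; not taken from the AirOps study.
STOPWORDS = {"a", "an", "the", "of", "to", "for", "in", "on", "and", "or", "is"}


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its distinct words, minus filler words."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {word for word in words if word not in STOPWORDS}


def title_query_overlap(title: str, query: str) -> float:
    """Share of distinct title words that also appear in the query (0.0 to 1.0).

    Assumed definition: the study's exact formula is not given in the article.
    """
    title_words = tokenize(title)
    if not title_words:
        return 0.0
    return len(title_words & tokenize(query)) / len(title_words)


if __name__ == "__main__":
    score = title_query_overlap(
        "Best CRM Software for Small Business",
        "best crm for small business 2025",
    )
    print(f"overlap: {score:.0%}")
```

Running a score like this against the fan-out queries a page should answer, rather than only the head keyword, is one way to spot titles that sit below the 10% band described above.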

2. Answer Position on the Page

Where the answer appears matters as much as what the answer says.

Research on citation patterns found that 44.2% of all citations draw from content in the first 30% of a page. The final third contributes only 24.7%.

Long preambles and buried answers reduce citation probability regardless of how strong the underlying content is.
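
A rough way to audit this is to measure how far down the page the key answer first appears. The Python sketch below is illustrative only: it uses character offset as a proxy for page position, treats the 30% cutoff as a working threshold borrowed from the figure above, and the function names `answer_position` and `is_front_loaded` are hypothetical.

```python
from typing import Optional


def answer_position(page_text: str, answer_snippet: str) -> Optional[float]:
    """Return where the snippet starts as a fraction of page length (0.0 = top).

    Returns None if the page is empty or the snippet is not found.
    Character offset is an assumed proxy for position on the page.
    """
    if not page_text:
        return None
    index = page_text.lower().find(answer_snippet.lower())
    if index == -1:
        return None
    return index / len(page_text)


def is_front_loaded(page_text: str, answer_snippet: str, cutoff: float = 0.30) -> bool:
    """True if the snippet starts within the first `cutoff` share of the page."""
    position = answer_position(page_text, answer_snippet)
    return position is not None and position <= cutoff


if __name__ == "__main__":
    page = "Background. " * 20 + "The answer is 42. " + "More detail. " * 5
    print(answer_position(page, "the answer is 42"))
    print(is_front_loaded(page, "the answer is 42"))
```

Pages where the answer only turns up in the back half are the first candidates for restructuring.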

3. Content Freshness

Content updated within the past three months is approximately twice as likely to be cited as older pages, per SE Ranking's analysis.

For brands targeting competitive queries, a content review should be conducted every three to six months as part of active AI SEO maintenance.
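
A review cadence like this is easy to automate. The sketch below flags pages whose last update is older than a chosen window. It is a minimal example under assumptions: "three months" is approximated as 90 days, and `days_since_update` and `needs_refresh` are hypothetical helper names, not part of any SEO tool.

```python
from datetime import date


def days_since_update(last_updated: date, today: date) -> int:
    """Number of whole days between the last update and today."""
    return (today - last_updated).days


def needs_refresh(last_updated: date, today: date, max_age_days: int = 90) -> bool:
    """True if the page is older than the review window (assumed 90 days)."""
    return days_since_update(last_updated, today) > max_age_days


if __name__ == "__main__":
    # Hypothetical pages and dates, for illustration only.
    pages = {
        "/pricing-guide": date(2025, 1, 1),
        "/crm-comparison": date(2025, 5, 1),
    }
    today = date(2025, 6, 1)
    for url, updated in pages.items():
        status = "refresh" if needs_refresh(updated, today) else "ok"
        print(url, status)
```

Setting `max_age_days` to 180 would match the looser end of the three-to-six-month range.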

"Nine times out of ten, the page is written for a reader, not for extraction. The answer is buried, and the title doesn't match what the AI is actually searching for," said Langdon.

Where to Start

The pages most brands need to compete for citations already exist.

What is missing is the alignment between the title, the structure, and the specific questions AI evaluates when retrieving them.

None of that requires a full content overhaul.

HigherVisibility recommends three starting points for brands ready to act on it.

- Audit positions 10-20 in Google. Tighter titles, fresher content, and front-loaded answers improve both the rankings and the probability of AI citations.

- Map your fan-out topic coverage. Standard keyword research won't surface these queries. Adjacent questions left uncovered go to competitors.

- Put the answer first. If a page answers a specific question, that answer should appear in the first paragraph, not buried after two sections of background.

"One page that answers a question precisely will outperform ten pages that gesture at it," said Langdon.

Most content is built for breadth. Selection rewards depth on a single, specific question.

The Selection Problem Is Solvable

Most brands are optimizing for retrieval, but that isn’t where AI citations are won or lost.

Title-query alignment, front-loaded answers, regular content refreshes, and fan-out topic coverage are the variables that determine selection.

These are the building blocks of any serious ChatGPT SEO program.

All four are measurable and actionable without overhauling what already exists. That is what Answer Engine Optimization, or AEO, is built around.

Brands investing in AI visibility now have a small window of opportunity.

Once AI search patterns solidify around a small number of cited domains, breaking in becomes significantly harder.