What Enterprises Get Wrong About AI: Key Findings

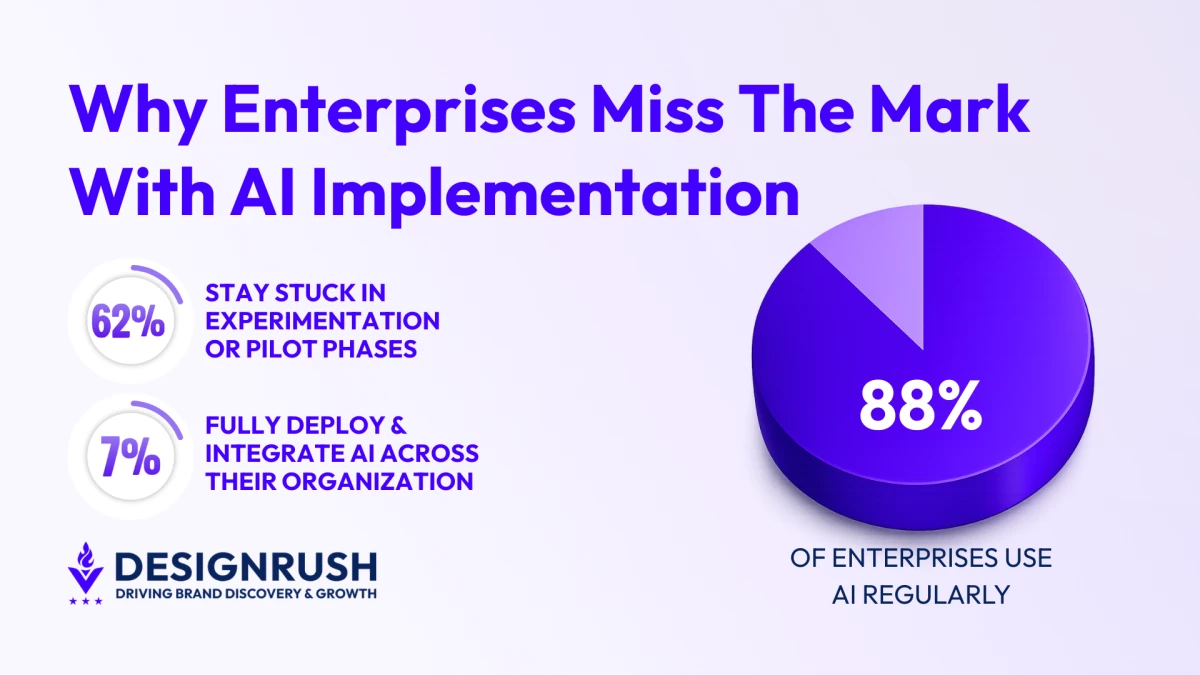

- 88% of enterprises use AI regularly, yet 62% remain stuck in pilot mode, showing adoption does not equal integration.

- Just 7% of enterprises have fully deployed AI across their organizations, proving enterprise-wide integration remains rare.

- Only 39% of companies report EBIT-level impact from AI, indicating most teams optimize demos instead of tying AI to business KPIs.

The use of AI in enterprise settings has come a long way since the early days of chatbots.

What began as automated customer replies and novelty pilots has evolved into something far more ambitious.

Today, the widespread availability of ChatGPT and other platforms has allowed enterprises across industries to test how AI might reshape operations.

Unfortunately, experimentation and transformation are not the same thing.

According to McKinsey’s State of AI report, 88% of enterprises are using AI regularly. However, 62% of them are still stuck in the experimenting and piloting phases.

That leaves only about a third that have successfully scaled their AI initiatives.

Even then, only 7% were able to fully deploy and integrate it across their organizations.

These figures are hardly representative of the transformative benefits AI promised.

This is a pain point that enterprise AI developers, like Unico Connect, seek to address when working with clients.

“The chatbot era was a useful entry point, but it's mostly behind us now,” said Unico Connect CEO Malay Parekh.

“What we're building today is more about automating decision-making and connecting intelligence to actual business workflows.”

In other words, meaningful AI adoption is less about conversational interfaces and more about embedding intelligence into operations.

Editor's Note: This is a sponsored article created in partnership with Unico Connect.

Why Most Enterprise AI Projects Stall

It’s no secret that quick and early adoption often delivers competitive advantages.

After all, enterprises that move first are able to shape customer expectations, compress costs, and signal innovation.

However, when new technology is implemented “just because everyone else is,” it only ends up creating more organizational headaches.

In the case of enterprise AI, that tension explains why scaling has proven so difficult.

According to Parekh, stalled implementations can be traced back to two main causes:

1. Organizational barriers

Most AI initiatives end up stalling because the organizations surrounding them are misaligned and unprepared.

This often manifests in several ways:

- Proofs of concept built to impress rather than endure. AI pilots often succeed in curated environments designed for demonstration. Yet what performs well in isolation may buckle under real transaction volumes and messy edge cases.

- Demo data that collapses under real-world variability. Controlled datasets hide inconsistencies that surface the moment AI interacts with live operational data. Missing records, conflicting fields, and undocumented exceptions quickly erode confidence.

- Business units were excluded from early design. When AI is introduced as a finished product, teams treat it as an external mandate. Adoption becomes procedural rather than purposeful.

2. Technical gaps

But even when organizational alignment is present, technical gaps can still undermine AI implementation.

These technical gaps include:

- Fragmented or weak data architecture. If an enterprise hasn’t invested in clean, accessible, well-governed data early, every AI capability built on top of it is compromised.

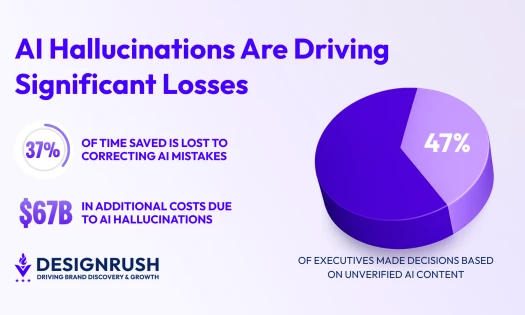

- Missing fallback mechanisms and audit trails. Without clear safeguards and traceability, AI systems can produce unverified outputs, leaving enterprises exposed to compliance and reliability risks.

- Overreliance on off-the-shelf models. Depending too much on generic models limits accuracy for specialized needs and introduces biases that don’t apply to an organization’s industry.
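To make the fallback and audit-trail point concrete, here is a minimal sketch in Python. It is an illustration only, not a description of how Unico Connect or any particular vendor builds this: the function name `answer_with_fallback`, the confidence threshold, and the `ESCALATED_TO_HUMAN_REVIEW` marker are all hypothetical, and the "model" is assumed to be any callable that returns an answer plus a confidence score.

```python
import json
import time
from typing import Callable, List, Tuple

# Hypothetical marker returned whenever the request is handed to a person.
ESCALATED = "ESCALATED_TO_HUMAN_REVIEW"


def answer_with_fallback(
    query: str,
    model: Callable[[str], Tuple[str, float]],
    audit_log: List[str],
    min_confidence: float = 0.8,  # assumed threshold, tune per use case
) -> str:
    """Return the model's answer, or escalate when it fails or is unsure.

    Every call appends one JSON record to audit_log, so each output can be
    traced back to its query, confidence, and fallback decision.
    """
    error = None
    try:
        answer, confidence = model(query)
        used_fallback = confidence < min_confidence
    except Exception as exc:  # model outage, timeout, malformed response...
        answer, confidence, used_fallback = "", 0.0, True
        error = repr(exc)

    if used_fallback:
        answer = ESCALATED

    audit_log.append(json.dumps({
        "timestamp": time.time(),
        "query": query,
        "confidence": confidence,
        "used_fallback": used_fallback,
        "error": error,
        "answer": answer,
    }))
    return answer
```

In a real deployment the in-memory list would likely be replaced by durable, append-only storage, but the shape of the idea is the same: no output leaves the system without a record, and low-confidence or failed calls never reach the user unreviewed.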

How Unico Connect Approaches Enterprise AI

So what exactly sets apart the enterprises that have successfully scaled AI?

Aside from addressing both organizational barriers and technical gaps early on, they’re also defining success at the right level.

For the most part, AI implementations are judged on use-case-level metrics like accuracy, response time, or task completion rate.

These metrics, while necessary, aren’t sufficient.

Instead, teams need to tie AI outcomes to business-level KPIs, such as cost per transaction or revenue per interaction, from the beginning.
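As a rough sketch of what tracking a business-level KPI could look like, the Python below computes cost per transaction before and after an AI rollout and reports the relative change. The class and function names are hypothetical, and the figures in the usage example are invented purely for illustration; they do not come from McKinsey or Unico Connect.

```python
from dataclasses import dataclass


@dataclass
class PeriodStats:
    total_cost: float   # all-in operating cost for the period
    transactions: int   # transactions completed in the period


def cost_per_transaction(stats: PeriodStats) -> float:
    """Business-level KPI: average cost of handling one transaction."""
    if stats.transactions <= 0:
        raise ValueError("transactions must be positive")
    return stats.total_cost / stats.transactions


def kpi_change(before: PeriodStats, after: PeriodStats) -> float:
    """Fractional change in cost per transaction (negative = cheaper)."""
    base = cost_per_transaction(before)
    return (cost_per_transaction(after) - base) / base


# Illustrative, made-up numbers for a pre- and post-rollout quarter.
before = PeriodStats(total_cost=50_000.0, transactions=10_000)
after = PeriodStats(total_cost=45_000.0, transactions=12_000)
print(f"{kpi_change(before, after):.0%}")  # prints "-25%"
```

The design choice worth noting is that the baseline is captured before the rollout. Without it, a model can score well on accuracy or response time and still leave no measurable trace on the business.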

The same McKinsey report illustrates this point clearly, as only 39% of enterprises reported an EBIT-level impact from their use of AI.

That gap exists because, when expected business outcomes aren't clearly defined up front, teams end up optimizing for the demo.

“This is why we build measurement frameworks into the project design, so there's never ambiguity about whether the solution is actually working for the business,” Parekh said.

Unico Connect has used this philosophy to great effect on a wide range of projects.

For example, the agency has built and deployed AI agents for various property management and real estate clients.

These agents were purpose-built to autonomously manage listing updates, tenant communications, and maintenance scheduling based on real-time triggers.

In the maritime sector, Parekh’s team has developed AI systems that aggregate operational and compliance data to surface actionable intelligence for fleet managers.

“The common thread across all of these is that the AI is embedded in the workflow,” Parekh said.

“It's not a separate tool someone has to go ‘talk to.’ That distinction matters a lot in terms of actual adoption and ROI.”

Embed AI Where Work Actually Happens

AI promised to change the lives of its users. But for enterprises, overfocusing on customer-facing use cases limits how transformative AI can be.

If there’s one thing to take away from these enterprise success stories, it’s that AI delivers sustainable value when it lives inside operational workflows.

And that can become a decisive advantage over competitors who continue to look only at customer-facing use cases instead of the big picture.