Enterprise AI Multi-Agent Platforms: Key Findings

- Enterprise chatbots are hitting scalability limits, as governance gaps and fragmented data expose architectural weaknesses.

- Agent sprawl is creating unpredictability at scale because loosely connected AI tools lack structured memory, shared objectives, and coordinated execution.

- Multi-agent orchestration is the enterprise solution, since governed agentic ecosystems enable scalable, predictable, and production-ready AI systems.

For the past few years, enterprise AI conversations have been easy to summarize. Simply add a chatbot, automate support, and improve response times.

It felt tangible and visible, something executives could point to and say, “This is progress.”

But as organizations push AI deeper into operations, that simplicity is giving way to tougher questions.

Can these systems handle cross-functional workflows? Can they operate securely inside existing infrastructure? And can they scale without creating more complexity than they solve?

Alexey Spas, CEO of Instinctools, a leading AI-powered software development company, says many enterprises are realizing that conversational interfaces alone are not enough.

“The real shift is happening behind the scenes, where architecture, orchestration, and governance determine whether AI becomes operational or remains experimental.”

Watch how Instinctools’ proprietary GENiE solution can build predictable, secure, multi-agent AI systems that deliver optimal results:

Editor's Note: This is a sponsored article created in partnership with Instinctools.

Enterprise AI Adoption Accelerates in 2026

The momentum behind agentic AI is quickly showing up in enterprise adoption data.

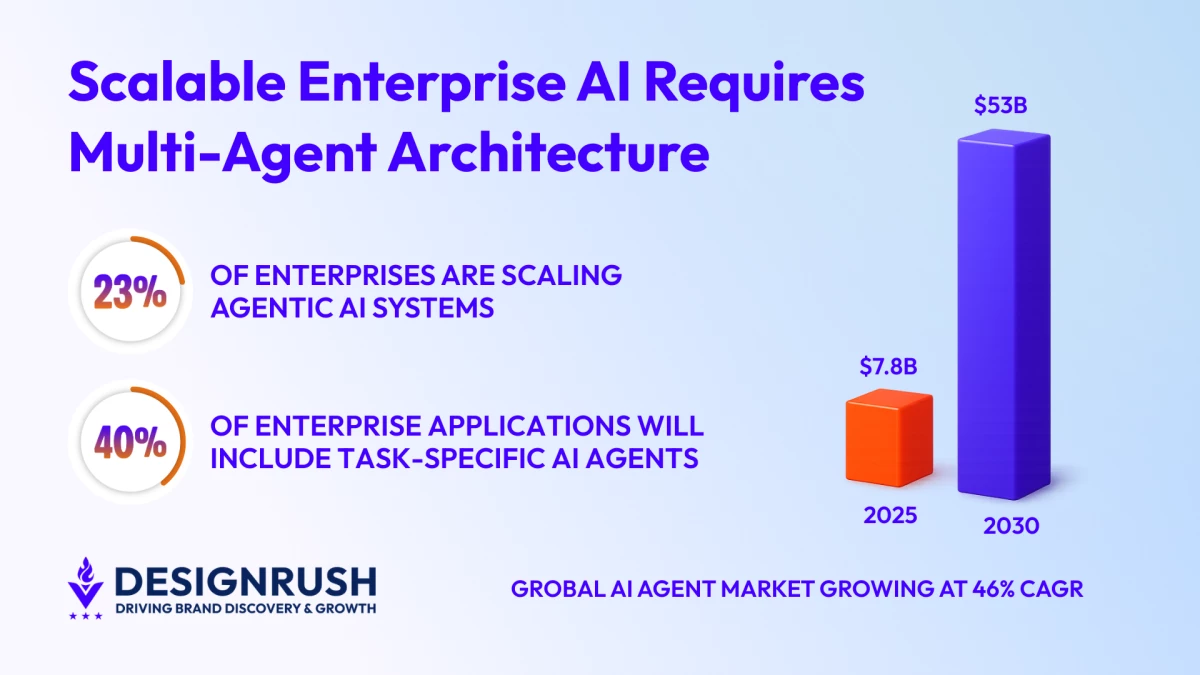

McKinsey’s State of AI report finds that 23% of enterprises are scaling agentic AI systems across parts of their operations, while 62% are at least experimenting with AI agents.

That means more than half of organizations are no longer treating AI as a limited pilot or interface layer.

Instead, they’re actively testing how autonomous systems execute work across departments.

Furthermore, a 2025 Gartner forecast expects 40% of enterprise applications to include task-specific AI agents by the end of 2026, up from less than 5% in 2025.

Meanwhile, MarketsandMarkets estimates the global AI agents market will grow from roughly $7.8 billion in 2025 to nearly $53 billion by 2030, reflecting a compound annual growth rate above 46%.

“These numbers suggest a transition in mindset, where enterprises are no longer experimenting with AI for visibility, but investing in systems that must integrate, execute, and scale,” Spas said.
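The growth rate implied by the market estimate above can be checked with a few lines of arithmetic. The sketch below assumes the rounded figures quoted in this article ($7.8 billion and $53 billion) and a five-year horizon; the function name `cagr` is illustrative, not taken from any report.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.46 means 46%)."""
    return (end_value / start_value) ** (1 / years) - 1


# Rounded figures quoted in the article, in billions of US dollars.
rate = cagr(7.8, 53.0, 2030 - 2025)
print(f"Implied CAGR: {rate:.1%}")
```

With these rounded inputs the result lands between 46% and 47%, consistent with the "above 46%" figure.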

Why Enterprise Chatbots Fail at Scale

Chatbots certainly delivered quick wins: they reduced ticket volumes, handled routine inquiries, and offered a visible proof point that AI could improve efficiency.

However, the strain appeared when organizations attempted to extend those systems across more complex workflows.

“Weak governance and a security gap often surface first,” Spas said.

“Many AI agents have limited access to internal system infrastructure, which restricts their ability to complete meaningful tasks.”

At the same time, poorly designed access controls can introduce compliance concerns.

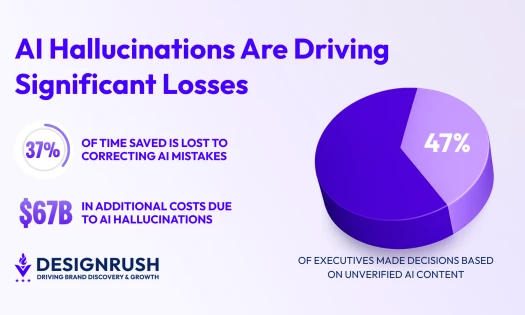

Data readiness is another friction point.

When data is fragmented, computation time increases as systems scale.

As a result, infrastructure costs climb, and what felt manageable in a contained pilot can quickly become expensive and inconsistent in production.

Context introduces another layer of complexity.

“Insufficient context engineering and memory management limit an agent’s ability to maintain continuity across multi-step processes,” Spas said.

Without structured memory, systems respond transaction by transaction rather than operating with awareness of broader objectives.

While some may consider these superficial flaws, they expose the architectural ceiling of chatbot-centric AI.

What Is a Multi-Agent AI Architecture?

Many enterprises initially assemble collections of AI tools that appear integrated on the surface, where interfaces connect, data flows, and dashboards update.

“But loosely connected AI tools do not share structured memory, defined roles, or long-running objectives,” Spas said.

“They may integrate at the interface level, but there is no central orchestration ensuring coordinated execution across systems.”

A true multi-agent architecture is built with that coordination in mind from the start.

Designing for coordination up front supports smooth workflow orchestration, adaptive context engineering, and proper governance across platforms.

Agents operate within clear responsibilities and shared objectives, forming a cohesive and scalable agentic ecosystem rather than a collection of isolated tools.

That cohesion is achieved through context engineering.

This enables agents to share structured memory and manage multi-step processes without stepping on each other’s decisions.

Spas notes that when data quality is poor or orchestration is weak, agents sharing context across workflows and tools can produce conflicting actions.

And at enterprise scale, this level of unpredictability can quickly erode confidence.

Ultimately, scalable systems are not defined by how many agents they deploy, but by how well those agents can work together for optimal results.
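To make the idea of shared memory, defined roles, and central orchestration more concrete, here is a minimal sketch. It is not Instinctools’ implementation or any particular framework’s API; every name in it (`SharedMemory`, `Agent`, `Orchestrator`, the two example agents) is a hypothetical chosen for illustration. The orchestrator runs agents in a fixed order, lets each one read the shared context, and refuses writes that would overturn an earlier agent’s decision.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class SharedMemory:
    """Structured context that every agent reads from and writes to."""
    objective: str
    facts: dict[str, str] = field(default_factory=dict)
    history: list[str] = field(default_factory=list)


@dataclass
class Agent:
    name: str
    role: str  # the single responsibility this agent owns
    act: Callable[[SharedMemory], dict[str, str]]


class Orchestrator:
    """Runs agents in order and rejects conflicting writes to shared memory."""

    def __init__(self, agents: list[Agent]) -> None:
        self.agents = agents

    def run(self, memory: SharedMemory) -> SharedMemory:
        for agent in self.agents:
            for key, value in agent.act(memory).items():
                if key in memory.facts and memory.facts[key] != value:
                    # Keep the earlier decision and record the rejected attempt.
                    memory.history.append(f"{agent.name}: conflict on {key!r}, kept existing value")
                    continue
                memory.facts[key] = value
                memory.history.append(f"{agent.name}: set {key!r}")
        return memory


def triage(mem: SharedMemory) -> dict[str, str]:
    return {"priority": "high"}


def billing(mem: SharedMemory) -> dict[str, str]:
    # Reads context written by the triage agent, then tries to overwrite it.
    refund = "approved" if mem.facts.get("priority") == "high" else "queued"
    return {"refund": refund, "priority": "low"}


orchestrator = Orchestrator([
    Agent("triage", "classify the request", triage),
    Agent("billing", "decide on the refund", billing),
])
memory = orchestrator.run(SharedMemory(objective="resolve a refund request"))
print(memory.facts)
print(memory.history)
```

In this sketch the billing agent’s attempt to downgrade the priority is logged and discarded rather than silently applied, which is one simple way to keep agents from stepping on each other’s decisions.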

Why AI Orchestration Is Critical in 2026

As companies move from AI pilots to enterprise-scale implementation, governance complexity can quickly expand.

Compliance and operational standards must therefore be enforced from the start across multiple agents, workflows, and data sources.

“Proper orchestration enables a group of AI agents to form a cohesive, autonomous system in which agents can handle multi-step processes and share context, achieved through context engineering,” Spas said.

That structure introduces predictability, makes behavior observable, and aligns execution with enterprise policy.

Without orchestration, scaling AI multiplies risk instead of value.

And in 2026, orchestration is less about optimization and more about control.

Essentially, it allows enterprises to trust AI with mission-critical workflows.

Enterprise AI Infrastructure Requirements

Architecture alone is not enough; multi-agent systems also depend on practical foundations.

Spas says that data must be ready for AI consumption and accessible across systems.

“Infrastructure must support AI workloads, observability must include AI-specific signals so that teams can understand how agents behave in real time, and security and access control must be designed for autonomous systems interacting with internal environments,” he said.

These elements are not optional enhancements; they are prerequisites for a stable agentic ecosystem.
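As a rough illustration of access control and AI-specific observability working together, the sketch below checks every agent action against a policy and records the outcome, whether or not the action was allowed. The policy schema, the `governed_call` helper, and the agent and action names are all assumptions made for this example, not part of any named product.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Optional


@dataclass
class AccessPolicy:
    """Maps each agent name to the actions it may perform (hypothetical schema)."""
    allowed: dict[str, set[str]]

    def permits(self, agent: str, action: str) -> bool:
        return action in self.allowed.get(agent, set())


@dataclass
class AuditLog:
    """In-memory stand-in for an observability pipeline."""
    events: list[dict[str, Any]] = field(default_factory=list)


def governed_call(
    agent: str,
    action: str,
    handler: Callable[[], Any],
    policy: AccessPolicy,
    log: AuditLog,
) -> Optional[Any]:
    """Run the handler only if the policy permits it, and always log the attempt."""
    permitted = policy.permits(agent, action)
    result = handler() if permitted else None
    log.events.append({"agent": agent, "action": action, "permitted": permitted})
    return result


policy = AccessPolicy(allowed={"billing-agent": {"read_invoice"}})
log = AuditLog()

invoice = governed_call("billing-agent", "read_invoice", lambda: {"id": 17, "total": 120.0}, policy, log)
blocked = governed_call("billing-agent", "delete_invoice", lambda: "deleted", policy, log)
print(invoice, blocked, log.events)
```

Logging denied attempts alongside permitted ones gives teams a record of what agents tried to do, not only what they did.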

Enterprises have moved past asking whether AI can answer a question and are now asking whether it can execute a process responsibly, align with compliance requirements, and scale without introducing operational volatility.

That is when the stakes start to feel real.

Chatbots initially helped enterprises imagine what AI could do, but the implementation of multi-agent platforms is forcing organizations to decide how seriously they intend to build it.