AI Software Teams Shrinking: Key Findings

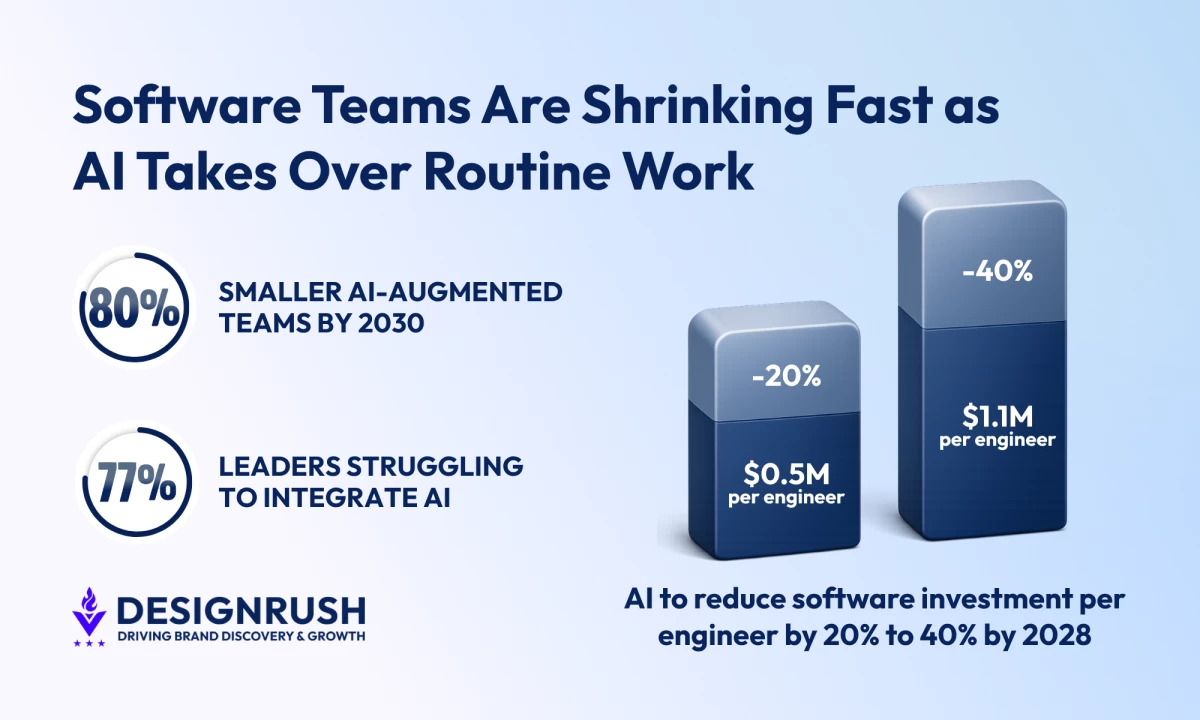

- Most organizations will be running smaller, AI-augmented software teams by 2030, pointing to a rethink in how development work gets done.

- 77% of engineering leaders report trouble integrating AI into applications, which points to a bigger need for clear processes, solid documentation, and roles built for AI workflows.

- AI tools could cut 20% to 40% of software investment in banking by 2028, according to a Deloitte forecast, which suggests that training teams to guide AI output and write precise specs pays off.

By 2030, around 80% of organizations will evolve large software engineering teams into smaller, AI-augmented ones, according to Gartner.

This suggests the old build process, with its heavy handoffs and long waits, is losing its edge fast.

“AI-native development isn't about using Copilot to autocomplete code,” Igor Repeta, CEO of Empat, tells DesignRush.

“In practice, it means developers write in natural language first and code second: they describe business logic, edge cases, and constraints, and AI handles the scaffolding.”

Engineers aren’t sitting there typing every line anymore.

They spend less time on repetitive work and more time steering AI output, tightening requirements, and catching mistakes before they snowball.

The daily rhythm changes with it.

“The engineer's core job becomes reviewing, steering, and testing AI output,” Repeta says.

That means standups start centering on validation too. Senior engineers spend more time on architecture calls, security checks, and the ugly corners AI still misses.

Editor's Note: This is a sponsored article created in partnership with Empat.

Teams, Workflows, and Documentation Drive AI Success

Obsessing over every bracket and semicolon matters less. Clear intent matters more. Plain English is doing the heavy lifting.

Most software teams are still behind because they bought AI like a separate gadget instead of rebuilding work around it.

Empat recently launched an AI-based estimation tool that generates preliminary project costs in minutes, giving teams an early view of budget ranges before a discovery call.

The output is directional and still requires deeper scoping, but it shows how AI can be built into early-stage workflows instead of sitting on the sidelines.

Gartner’s 2025 survey of 400 U.S. and U.K. engineering leaders found that AI adoption is still causing headaches:

- 77% said building AI into applications was a serious or moderate headache

- 71% said using AI tools to boost software workflows caused the same kind of pain

AI tools are already in the stack, but teams are still wrestling with how to make them work inside real workflows.

Many teams are still using AI as a faster keyboard rather than a different operating model, and their hiring practices haven’t caught up either.

Engineers are still tested on algorithm puzzles instead of skills for managing AI-generated code and workflows.

Hiring processes don’t test prompt clarity, output review, or how well someone works inside AI-generated code. Legacy codebases do not help either.

Tight coupling, thin documentation, and vague ownership make AI assistance stumble before it gets anywhere useful.

The stumbling blocks in skill and codebase design explain why AI adoption hasn’t reached everyone yet.

Only about 11% of leading companies give nearly all employees access to AI tools, even as overall access has grown roughly 50% year-over-year, according to Deloitte’s State of AI in the Enterprise 2026 report.

That limited access is where structured documentation starts doing real work.

“Specs, ADRs, and inline comments become the prompt layer,” said Repeta.

AI can’t read minds, and it definitely can’t clean up a messy codebase on its own.
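To make the "prompt layer" idea concrete, here is a minimal sketch of how a team might assemble a task description together with its specs and ADRs into one prompt. Everything in it is an assumption for illustration: the `ContextDoc` type, the `build_prompt` helper, and the sample spec and ADR text are hypothetical and do not come from Empat or any specific tool.

```python
from dataclasses import dataclass


@dataclass
class ContextDoc:
    kind: str   # illustrative labels such as "spec" or "adr"
    title: str
    body: str


def build_prompt(task: str, docs: list[ContextDoc]) -> str:
    """Join the task with the project documents into one prompt string."""
    sections = [f"## Task\n{task.strip()}"]
    for doc in docs:
        # Each document becomes its own labeled section of the prompt.
        sections.append(f"## {doc.kind.upper()}: {doc.title}\n{doc.body.strip()}")
    return "\n\n".join(sections)


# Hypothetical project documents, invented for this example.
docs = [
    ContextDoc("spec", "Refund window", "Refunds are allowed within 30 days of purchase."),
    ContextDoc("adr", "Money handling", "Store amounts as integer cents, never floats."),
]
prompt = build_prompt("Add a refund eligibility check.", docs)
print(prompt)
```

The point of the sketch is that the quality of the prompt is bounded by the quality of the documents fed into it: a vague spec produces a vague prompt.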

How Teams and Processes Make AI Actually Work

The team structure piece is also changing.

The prevalence of AI architect roles is expected to rise from 30% to 58% over the next two years, according to Deloitte’s 2026 Tech Trends report.

The same report says leading organizations are designing modular architectures for flexibility and rethinking talent around human-machine collaboration.

That lines up with how AI-assisted development actually works: small modules, clear interfaces, and single responsibilities give AI tools a bounded space to work in.

Modular systems allow AI to tweak code without juggling half the codebase and keep any slip-ups from spreading.

This keeps mistakes small and predictable, turning AI-generated changes into manageable updates rather than surprise disasters.

A bad function in an isolated service is easy to fix.

But a bad assumption in a tightly coupled monolithic codebase can ripple through the whole system like cheap coffee at a Monday meeting.

That’s why modular architecture deserves more attention than it usually gets.
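A small sketch can show what a bounded space looks like in practice. The names below (`TaxCalculator`, `FlatRateTax`, `order_total_cents`) are hypothetical and chosen only for illustration; the idea is that the caller depends on a narrow interface, so an AI-generated change to one implementation cannot reach into the rest of the system.

```python
from typing import Protocol


class TaxCalculator(Protocol):
    """The narrow interface the rest of the system depends on."""

    def tax_cents(self, subtotal_cents: int) -> int: ...


class FlatRateTax:
    """One small module with a single responsibility."""

    def __init__(self, rate_percent: int) -> None:
        self.rate_percent = rate_percent

    def tax_cents(self, subtotal_cents: int) -> int:
        # Integer math keeps the result exact; fractions of a cent are dropped.
        return subtotal_cents * self.rate_percent // 100


def order_total_cents(subtotal_cents: int, calculator: TaxCalculator) -> int:
    """The caller knows only the interface, not the implementation behind it."""
    return subtotal_cents + calculator.tax_cents(subtotal_cents)


total = order_total_cents(10_000, FlatRateTax(rate_percent=8))
print(total)  # 10800
```

If a generated change breaks `FlatRateTax`, the fix is confined to that class and its tests, which is the "bad function in an isolated service" case described above.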

Deloitte’s Tech Trends 2026 says leading organizations are tying AI initiatives to business results they can track and designing modular architectures for flexibility.

And that’s not buzz. It’s the basic plumbing AI needs if the goal is faster delivery without turning every deploy into a small thrill ride.

One example is an AI system handling restaurant bookings end-to-end without forms, calls, or emails.

For product and engineering leaders, the cleanest advice is to stop judging AI adoption by the number of subscriptions sitting in procurement.

“Stop measuring AI adoption by tool subscriptions and start measuring it by how your delivery metrics change,” Repeta said.

That’s the right instinct. The first question is not how many tools the team bought but whether cycle time, quality, and throughput improved in any meaningful way.
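One way to act on that is to compare the same delivery metrics before and after an AI rollout. The sketch below is an assumption-laden illustration, not a standard: the `Change` record, the `summarize` helper, the choice of median cycle time, changes per week, and failure rate, and all sample figures are hypothetical.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Change:
    cycle_time_hours: float  # first commit to production
    caused_incident: bool    # whether the change needed a fix or rollback


def summarize(changes: list[Change], weeks: int) -> dict[str, float]:
    """Reduce a list of shipped changes to three delivery metrics."""
    return {
        "median_cycle_time_hours": median(c.cycle_time_hours for c in changes),
        "changes_per_week": len(changes) / weeks,
        "failure_rate": sum(c.caused_incident for c in changes) / len(changes),
    }


# Made-up sample data covering two weeks before and two weeks after a rollout.
before = [Change(40, False), Change(60, True), Change(50, False), Change(70, False)]
after = [
    Change(20, False), Change(30, False), Change(25, True),
    Change(35, False), Change(30, False), Change(40, False),
]

before_summary = summarize(before, weeks=2)
after_summary = summarize(after, weeks=2)
for name in before_summary:
    print(f"{name}: {before_summary[name]:.2f} -> {after_summary[name]:.2f}")
```

A comparison like this only means something if the two periods cover similar kinds of work, so the numbers are a starting point for a conversation rather than a verdict.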

Deloitte’s banking forecast translates those changes into actual cost savings.

It predicts AI tools will cut 20% to 40% of software investment in banking by 2028, or roughly US$0.5 million to US$1.1 million per engineer. That gives leaders a clear idea of where AI should be targeted: on repetitive, high-volume work first.

They should train engineers to judge output, challenge weak answers, and write tighter specs.

They should treat the codebase like a product for AI consumption, with cleaner documentation, lower coupling, and conventions that keep output consistent.

And, yes, that work is boring…but it’s also the part that usually decides who gets ahead and who spends the next year cleaning up after their own experiments.

“Clean boundaries aren't just a software design principle in 2026,” notes Repeta, adding that “they're an AI collaboration primitive.”

And that’s the whole story in one line.

AI-native development isn’t a side tool bolted onto an old process. It’s a new way to organize teams, write requirements, review code, and ship work without drowning in rework.

The teams that get this right won’t be shouting about AI at every meeting.

They’ll ship faster because their specs are precise, their modules are clean, their tests actually catch problems, and they aren’t scrambling to fix avoidable mistakes.

Consistency and clarity become the real competitive edge.