AI Coding Tools Job Requirement: Key Findings

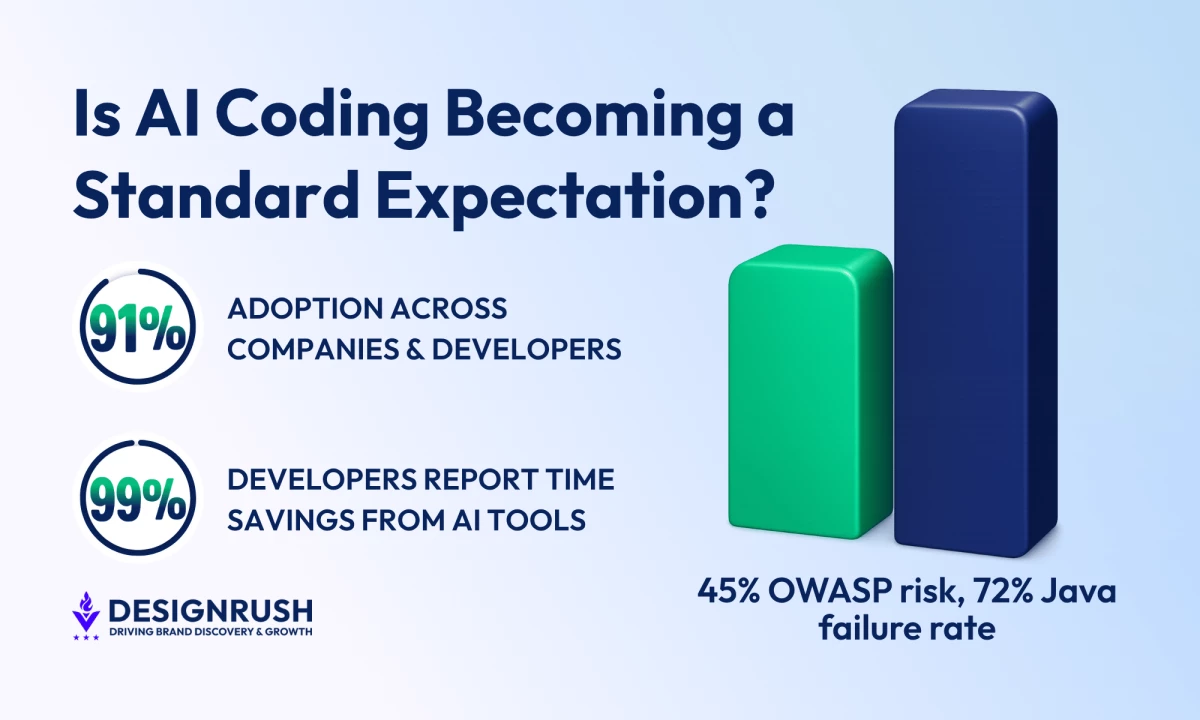

- 91% AI adoption and 22% AI-authored merged code show AI coding tools are becoming a hiring baseline, so companies need to formalize AI proficiency as a core hiring and upskilling requirement instead of treating it as optional familiarity.

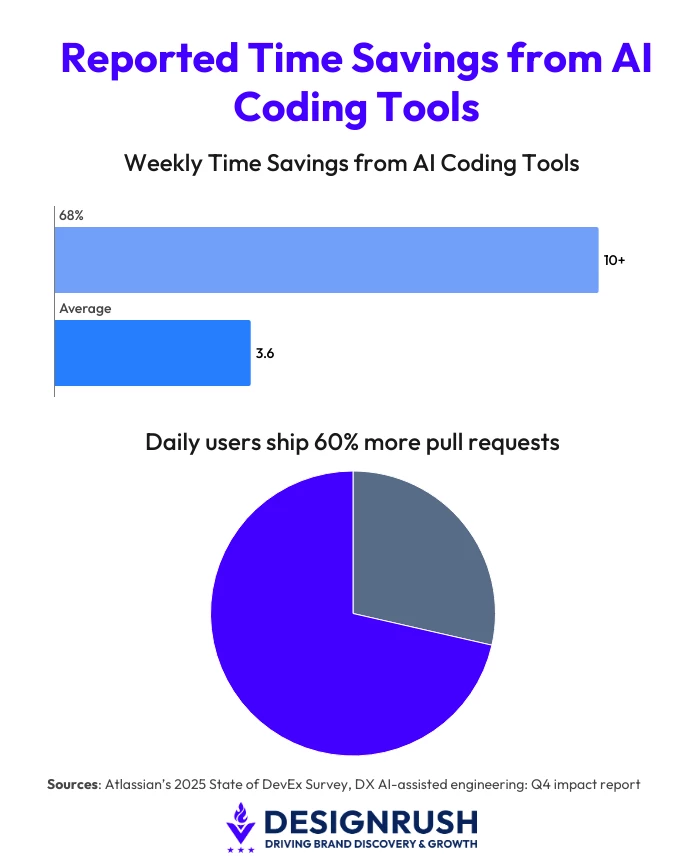

- An average of 3.6 hours saved weekly (DX) and 68% of developers reporting savings of more than 10 hours (Atlassian) demonstrate that productivity gains are already embedded in workflows, so leaders need clear policies on how AI-driven time savings are reinvested into code quality, delivery, and documentation.

- With 45% of AI-generated code samples introducing OWASP Top 10 vulnerabilities, security risk is rising alongside adoption, so teams need stricter review gates, testing standards, and stronger architectural ownership to prevent long-term system debt.

AI coding tools are becoming a part of daily workflows, hiring expectations, and management pressures within software teams.

In its Q4 2025 report, leading developer intelligence platform DX found an AI adoption rate of 91% across 435 companies and over 135,000 developers.

Across that sample, 22% of merged code is AI-authored.

On that note, Gartner forecasts that up to 75% of hiring processes will include certifications and testing for workplace AI proficiency.

It’s a loud signal from the market, even by tech standards.

Anand Ashok, founder of Quixta, a custom web and software development company, shares that view.

“AI coding tools are already becoming a standard expectation for developers and engineering teams in professional environments,” he said.

But the question now is how quickly organizations can formalize these new standards into baseline skill requirements, and what “competent use” means inside real engineering systems.

AI is Now Part of the Job

Editor's Note: This is a sponsored article created in partnership with Quixta.

While not every team is there yet, the direction is consistent. AI is transitioning from being an optional support tool to an assumed capability.

That nuance is important.

Almost all developers and managers, from a pool of 3,500 across six countries, attribute time savings to AI tools, Atlassian’s 2025 State of DevEx Survey found.

A further 68% said they save more than 10 hours per week.

On average, developers save an estimated 3.6 hours per week with AI coding tools, while daily users ship 60% more pull requests than non-users, DX reported.

On paper, that looks like a straightforward productivity win.

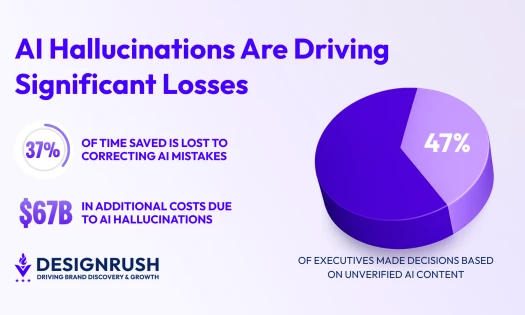

But in practice, outcomes are more uneven.

“AI coding tools have measurably impacted developers’ productivity and daily workflows, but actual outcomes are more nuanced than general assumptions,” Ashok said.

Atlassian’s data further showed that developers tend to reinvest time saved into code improvements, feature work, and documentation rather than increasing output volume.

Speed alone doesn’t explain what's actually being produced, and this is the point where expectations start to diverge.

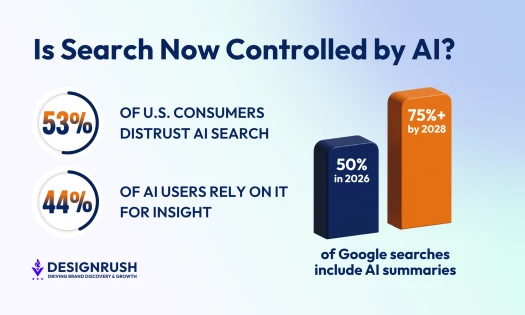

If AI tools are already being embedded in workflows and widely used across repositories, managers might start to treat them less like optional accelerators and more like assumed working knowledge.

But this doesn’t mean universal reliance on AI for every task. Rather, developers are increasingly expected to know when to use it, how to validate it, and when to reject its output.

DX adoption data and Gartner’s hiring forecast both point in the same direction: AI proficiency is becoming part of the baseline definition of technical competence.

And this is where another headache appears, one that is often ignored in productivity discussions.

Speed Without Structure Does Not Hold

The problem starts when speed gets ahead of the controls meant to contain it.

“Organizations that use AI for formal governance frameworks, instead of making it a primary method of productivity, can see better outcomes,” Ashok noted.

That distinction matters because faster development cycles don’t automatically translate into better systems.

More often than not, they increase the volume of code entering production, which raises questions about architectural discipline, review rigor, and long-term maintainability.

Veracode’s 2025 GenAI Code Security Report highlights the risk side clearly.

An analysis of 100+ large language models (LLMs) across 80 coding tasks revealed that 45% of AI-generated code samples introduced Open Worldwide Application Security Project (OWASP) Top 10 security vulnerabilities.

Java fared even worse, with a 72% failure rate.

So even when AI-generated code executes correctly, it can carry hidden security issues.
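To make the gap between "runs correctly" and "is secure" concrete, here is a minimal Python sketch of one OWASP Top 10 category, injection. It is an illustration only and is not taken from the Veracode report. The table layout and the function names `find_user_unsafe` and `find_user_safe` are hypothetical.

```python
import sqlite3


def make_db():
    # Hypothetical in-memory table used only for this illustration.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, role TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "admin"), ("bob", "dev")],
    )
    return conn


def find_user_unsafe(conn, name):
    # Returns the right row for ordinary input, so it passes a basic
    # functional test, but building SQL by string interpolation lets
    # crafted input change the query itself.
    query = f"SELECT name, role FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, name):
    # Parameterized query: the input is bound as data, never parsed as SQL.
    return conn.execute(
        "SELECT name, role FROM users WHERE name = ?", (name,)
    ).fetchall()


conn = make_db()
payload = "x' OR '1'='1"

print(find_user_unsafe(conn, "alice"))  # behaves as intended
print(find_user_unsafe(conn, payload))  # leaks every row in the table
print(find_user_safe(conn, payload))    # no match, nothing leaked
```

Both functions return the same result for a normal name, which is why this kind of flaw tends to slip through when review focuses only on whether the code works.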

And that creates structural challenges for engineering teams, because developers are now expected to operate in a dual role:

- Produce code faster

- Scrutinize it more carefully than before

Maintainability now becomes a central concern.

Code review, testing, and architecture decisions carry more weight too, because AI can generate functional code that weakens the system structure if not actively governed.

The risk is twofold: security flaws and the accumulation of technical debt.

What Leaders Should Teach Teams

More generated code without strong architectural discipline can increase long-term technical debt faster than many teams are prepared to manage.

According to Ashok and his team at Quixta, this shift usually shows up in hiring practices, where companies assess not only coding ability but also how developers operate within AI-assisted workflows.

“The widespread adoption of AI coding tools is shifting developers’ roles from code author to code validator and system architect,” Ashok said.

That shift raises another expectation: knowing when not to use AI tools, and reviewing what they produce with discipline.

A practical adjustment is to treat AI coding assistants as controlled inputs inside the development pipeline.

This means:

- Stricter review standards for AI-generated code

- Consistent testing practices

- Stronger architectural ownership at senior levels
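One way a team might encode those three controls is as a simple merge gate. The sketch below is a hypothetical policy, not a description of any specific tool or of Quixta's process. The `PullRequest` fields, the `merge_blockers` function, and the two-approval threshold for AI-assisted changes are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PullRequest:
    # Hypothetical fields; a real pipeline would pull these from its
    # code-hosting and CI systems.
    ai_assisted: bool
    human_approvals: int
    tests_passed: bool
    touches_architecture: bool
    senior_approved: bool


def merge_blockers(pr: PullRequest) -> List[str]:
    """Return the reasons a pull request may not merge (empty if none)."""
    blockers = []

    # Consistent testing: no change merges with failing tests.
    if not pr.tests_passed:
        blockers.append("tests must pass")

    # Stricter review for AI-generated code (assumed threshold: 2 vs 1).
    required = 2 if pr.ai_assisted else 1
    if pr.human_approvals < required:
        blockers.append(f"needs {required} human approvals")

    # Architectural ownership: structural changes need senior sign-off.
    if pr.touches_architecture and not pr.senior_approved:
        blockers.append("needs senior architectural sign-off")

    return blockers


pr = PullRequest(
    ai_assisted=True,
    human_approvals=1,
    tests_passed=True,
    touches_architecture=False,
    senior_approved=False,
)
print(merge_blockers(pr))
```

Returning a list of reasons rather than a single pass or fail keeps the policy transparent to the developer, who can see exactly which control is holding the change back.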

Faster production cycles only create value if governance and architecture keep pace. Otherwise, time saved in development is lost again to maintenance, refactoring, and rework.

Ashok’s perspective brings it back to structure over speed. AI works best as part of a defined engineering system, not as a replacement for one.

Teams that treat it as infrastructure, with clear standards around security, testing, and architecture, will extract value without letting maintainability erode in the background.

The rest will discover that speed is easy to achieve. Control isn’t.