Modern Data Platforms for AI: Key Findings

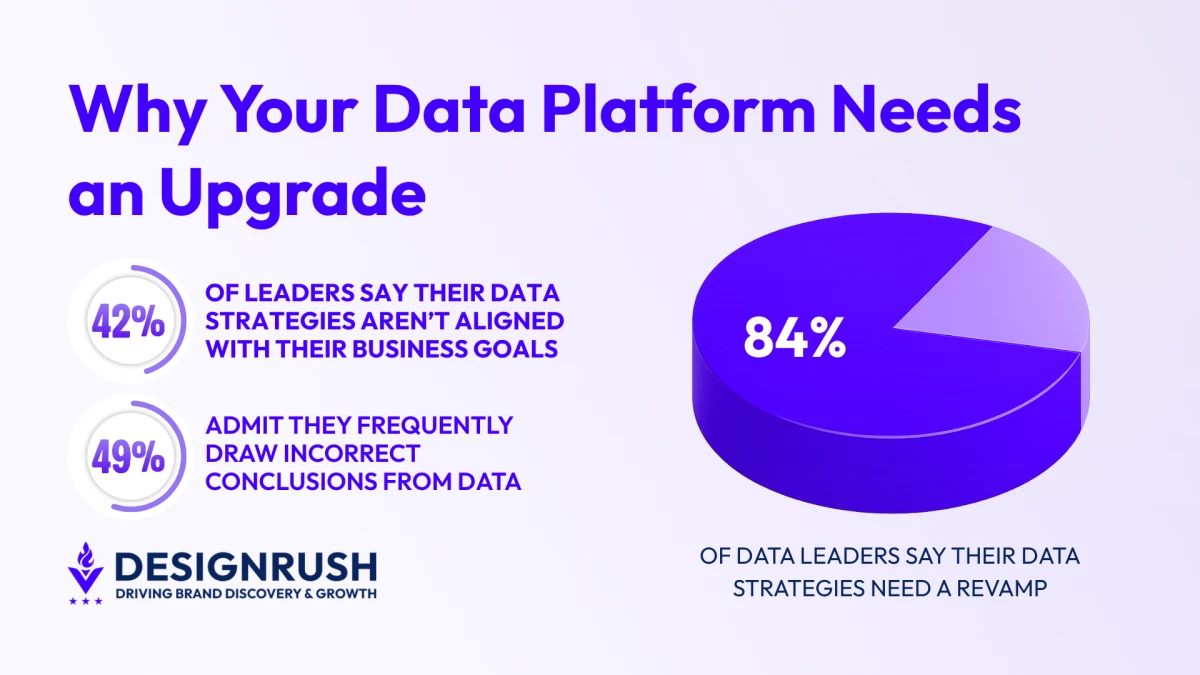

- 84% of data and analytics leaders say their data strategies need an overhaul to support AI, a sign that fragmented data environments are holding back AI and analytics outcomes.

- 82% of organizations are planning or already implementing a modern data platform, signaling a rapid shift away from legacy systems.

- Modern data platforms act as a single source of truth, turning fragmented data into usable insights that power real-time analytics and AI initiatives.

AI promises faster decisions, but to see real gains, you must have reliable data systems.

This is especially true for enterprises trying to scale AI, and it’s a hurdle many have yet to overcome.

Often, the issue stems from data foundations that aren’t prepared to support AI integration. While frustrating, it’s a common problem.

In fact, 84% of data and analytics leaders say their data strategies need an overhaul to support AI, according to Salesforce’s State of Data & Analytics (2nd Edition).

This data point suggests that most organizations are dealing with fragmented data environments built on legacy systems.

The good news is, many are making strides toward solving this bottleneck, with 82% of businesses planning (or already implementing) a modern data platform.

What Is a Modern Data Platform?

A modern data platform (MDP) is an enterprise ecosystem that allows for the collection, storage, and consumption of data while adhering to strict governance rules.

Essentially, an MDP acts as a single source of truth, turning scattered components into a unified system that manages the full data lifecycle.

In practice, an MDP can take two forms:

- A modern data stack built from cloud tools

- A self-service platform built around those tools

Both forms help keep an organization’s data clean and accurate, especially compared to legacy systems.

These traditional setups are slow, expensive to maintain, and hard to scale. MDPs tackle these problems by providing a foundation that accelerates AI and enables real-time analytics.

How to Build The Right Architecture for a Data Platform

A modern data platform is built upon a few layers, each serving an important function in the system.

Data Ingestion

Data ingestion is where data from source systems such as ERP, CRM, and financial platforms enters the platform.

A well-designed ingestion architecture ensures that all sources are securely connected, incoming data is validated through an established process, and formats are standardized.

It typically relies on tools such as Fivetran, Apache Kafka, and change data capture (CDC) technologies like Debezium to handle these processes reliably at scale.
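To make the validation and standardization step more concrete, here is a minimal Python sketch of what might happen to each incoming record. It assumes a hypothetical CRM feed with `customer_id`, `email`, and `signup_date` fields, where different sources send dates in different formats; the field names, accepted formats, and helper functions are illustrative and not part of any of the tools named above.

```python
from dataclasses import dataclass
from datetime import date, datetime

# Hypothetical schema for records arriving from a CRM source.
REQUIRED_FIELDS = {"customer_id", "email", "signup_date"}
# Date formats we assume different source systems might send.
ACCEPTED_DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%m-%d-%Y")


@dataclass
class CustomerRecord:
    customer_id: str
    email: str
    signup_date: date


def parse_date(raw: str) -> date:
    """Try each accepted format and return a standard date object."""
    for fmt in ACCEPTED_DATE_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).date()
        except ValueError:
            continue
    raise ValueError(f"unrecognized date format: {raw!r}")


def validate_and_standardize(raw: dict) -> CustomerRecord:
    """Reject incomplete or malformed records and normalize formats."""
    missing = REQUIRED_FIELDS - set(raw)
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    email = str(raw["email"]).strip().lower()
    if "@" not in email:
        raise ValueError(f"invalid email: {raw['email']!r}")
    return CustomerRecord(
        customer_id=str(raw["customer_id"]).strip(),
        email=email,
        signup_date=parse_date(str(raw["signup_date"])),
    )


def ingest(batch: list[dict]) -> tuple[list[CustomerRecord], list[tuple[dict, str]]]:
    """Split a batch into clean records and rejected records with reasons."""
    accepted, rejected = [], []
    for raw in batch:
        try:
            accepted.append(validate_and_standardize(raw))
        except ValueError as err:
            rejected.append((raw, str(err)))
    return accepted, rejected


accepted, rejected = ingest([
    {"customer_id": " C-1 ", "email": "Ana@Example.com", "signup_date": "2024-05-01"},
    {"customer_id": "C-2", "email": "bo@example.com", "signup_date": "01/05/2024"},
    {"customer_id": "C-3", "email": "no-at-sign", "signup_date": "2024-05-01"},
])
print(len(accepted), len(rejected))  # 2 1
```

Collecting rejected records with a reason, instead of silently dropping them, keeps bad source data visible so it can be fixed upstream.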

Data Storage

Companies can choose from three main data storage options:

- Data warehouses fit scenarios where datasets are well-defined and structured.

- Data lakes are best for organizations that expect their analytics needs to evolve continuously or that work with diverse data types.

- Data lakehouses combine the performance of a warehouse with the low-cost, flexible storage of a lake, tied together by a metadata layer.
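The metadata layer is what lets a lakehouse treat a directory of low-cost files as a versioned table. As a rough illustration of the idea behind table formats such as Delta Lake, the toy Python model below keeps an append-only log of commits, each recording which data files were added or removed, so readers can reconstruct any table version by replaying the log. It is a simplified sketch, not Delta Lake’s actual log format, and the file names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Commit:
    """One entry in the table's transaction log."""
    added: frozenset[str] = frozenset()
    removed: frozenset[str] = frozenset()


@dataclass
class TableLog:
    """Toy metadata layer: maps table versions to sets of data files."""
    commits: list[Commit] = field(default_factory=list)

    def commit(self, added=(), removed=()) -> int:
        """Append a commit and return the new version number (0-based)."""
        self.commits.append(Commit(frozenset(added), frozenset(removed)))
        return len(self.commits) - 1

    def files_at(self, version: int) -> set[str]:
        """Replay the log up to `version` to get the files readers should scan."""
        if not 0 <= version < len(self.commits):
            raise ValueError(f"no such version: {version}")
        files: set[str] = set()
        for c in self.commits[: version + 1]:
            files -= c.removed
            files |= c.added
        return files


log = TableLog()
log.commit(added=["part-000.parquet", "part-001.parquet"])
log.commit(added=["part-002.parquet"])
# A compaction job rewrites two small files into one larger file.
log.commit(added=["part-003.parquet"], removed=["part-000.parquet", "part-001.parquet"])
print(sorted(log.files_at(2)))  # ['part-002.parquet', 'part-003.parquet']
print(sorted(log.files_at(0)))  # ['part-000.parquet', 'part-001.parquet']
```

In this model, old versions stay reconstructible, which is what allows "time travel" queries and lets readers see a consistent snapshot while another job rewrites files.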

Data Processing and Modeling

The data processing and modeling layer is where stored data gets cleaned, structured, and prepared for analytics and AI use cases.

While the mix of tools depends entirely on the organization’s needs, here is an example of what this layer can look like:

Apache Spark handles large-scale batch processing, while Kafka provides fault-tolerant, real-time streaming. dbt enables SQL-based transformations inside the warehouse, and Apache Airflow orchestrates complex pipeline workflows.
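Airflow represents a pipeline as a directed acyclic graph (DAG) of tasks and, by default, runs each task only after its upstream tasks succeed. The sketch below illustrates that dependency ordering with Python’s standard-library `graphlib` module (Python 3.9+) rather than Airflow’s own API, alongside a toy cleaning step of the kind dbt or Spark would normally perform. The task names and cleaning rules are hypothetical.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline: each task maps to the set of tasks it depends on.
PIPELINE = {
    "extract_orders": set(),
    "extract_customers": set(),
    "clean_orders": {"extract_orders"},
    "join_orders_customers": {"clean_orders", "extract_customers"},
    "build_revenue_model": {"join_orders_customers"},
}


def clean_orders(rows: list[dict]) -> list[dict]:
    """Drop rows with missing amounts, keep the first valid row per order_id,
    and round amounts to two decimal places."""
    seen, cleaned = set(), []
    for row in rows:
        if row.get("amount") is None or row["order_id"] in seen:
            continue
        seen.add(row["order_id"])
        cleaned.append({**row, "amount": round(float(row["amount"]), 2)})
    return cleaned


def execution_order(dag: dict[str, set[str]]) -> list[str]:
    """Return one valid run order; raises graphlib.CycleError on cycles."""
    return list(TopologicalSorter(dag).static_order())


order = execution_order(PIPELINE)
print(order)
```

Any order the sorter returns is valid as long as every task appears after its dependencies; a real orchestrator additionally runs independent tasks, such as the two extracts, in parallel.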

Data Consumption

This is the layer where the accumulated data pays off.

Business intelligence tools turn data into dashboards and reports to drive decisions.

Machine learning platforms like Databricks ML or Amazon SageMaker allow data scientists to train models that forecast demand or assess risk.

Aggregated data can also be served to internal systems and customer-facing applications through APIs, such as REST endpoints.
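For the API route, the sketch below exposes pre-aggregated metrics as JSON over a REST-style endpoint using only Python’s standard library (`http.server`). The metric names, values, and `/metrics/<name>` URL scheme are hypothetical, and a production service would add authentication and typically run behind a dedicated web framework or API gateway. Routing logic lives in a plain function so it can be tested without opening a socket.

```python
import json
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer
from urllib.parse import urlsplit

# Hypothetical aggregates published by the processing layer.
AGGREGATES = {
    "daily-revenue": {"2024-05-01": 1250.0, "2024-05-02": 980.5},
    "active-customers": {"2024-05-01": 312, "2024-05-02": 298},
}


def render_metric(path: str) -> tuple[int, bytes]:
    """Map a request path like /metrics/<name> to (status code, JSON body)."""
    route = urlsplit(path).path  # ignore any query string
    prefix = "/metrics/"
    name = route[len(prefix):] if route.startswith(prefix) else None
    if name not in AGGREGATES:
        return 404, json.dumps({"error": "unknown metric"}).encode("utf-8")
    payload = {"metric": name, "values": AGGREGATES[name]}
    return 200, json.dumps(payload).encode("utf-8")


class MetricsHandler(BaseHTTPRequestHandler):
    def do_GET(self) -> None:
        status, body = render_metric(self.path)
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def make_server(port: int = 8000) -> ThreadingHTTPServer:
    return ThreadingHTTPServer(("127.0.0.1", port), MetricsHandler)

# To serve locally: make_server().serve_forever()
```

Serving curated aggregates, rather than exposing raw tables, keeps consumers decoupled from how the underlying data is stored and modeled.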

Data Governance is The Foundation of Trust

All of the effort put into building a solid architecture pays off only if the data is secure and trustworthy.

Data governance is what makes this possible.

Without the right mix of data governance tools, the system runs a high risk of compliance failures and inaccurate insights.

Metadata Management

Metadata management is central to effective data governance: it lets other systems in the data platform understand where data lives and what it is for.

DataHub and Unity Catalog help track data owners and index schemas, while tools like Cube and Microsoft Purview classifiers help classify and interpret information at a deeper level.
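At its core, a catalog records who owns each dataset, what its schema looks like, and how it is classified, and makes all of that searchable. The plain-Python sketch below models that idea in memory; the `DatasetEntry` and `Catalog` classes, dataset names, and tags are hypothetical and do not mirror the APIs of DataHub, Unity Catalog, or Purview.

```python
from dataclasses import dataclass, field


@dataclass
class DatasetEntry:
    name: str
    owner: str
    schema: dict[str, str]                        # column name -> type
    description: str = ""
    tags: set[str] = field(default_factory=set)   # e.g. classification labels


class Catalog:
    """Minimal in-memory metadata catalog."""

    def __init__(self) -> None:
        self._entries: dict[str, DatasetEntry] = {}

    def register(self, entry: DatasetEntry) -> None:
        self._entries[entry.name] = entry

    def owner_of(self, name: str) -> str:
        return self._entries[name].owner

    def find_by_column(self, column: str) -> list[str]:
        """Which datasets expose a given column?"""
        return sorted(n for n, e in self._entries.items() if column in e.schema)

    def find_by_tag(self, tag: str) -> list[str]:
        return sorted(n for n, e in self._entries.items() if tag in e.tags)


catalog = Catalog()
catalog.register(DatasetEntry(
    name="sales.orders",
    owner="sales-data-team",
    schema={"order_id": "string", "customer_email": "string", "amount": "decimal(10,2)"},
    description="One row per confirmed order.",
    tags={"pii"},
))
catalog.register(DatasetEntry(
    name="finance.daily_revenue",
    owner="finance-analytics",
    schema={"day": "date", "amount": "decimal(12,2)"},
))
print(catalog.find_by_tag("pii"))        # ['sales.orders']
print(catalog.find_by_column("amount"))  # ['finance.daily_revenue', 'sales.orders']
```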

Data Lineage

Data lineage tracks where data originates and traces how it flows all the way to consumption.

This is possible with tools like OpenLineage, Airflow, and dbt. With this tech stack, organizations have a much easier time debugging, performing impact analysis, and staying compliant.
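Impact analysis and debugging both come down to walking a lineage graph. The sketch below stores lineage as edges between datasets and uses breadth-first search to find everything downstream of a change (impact analysis) or everything upstream of a broken report (debugging). The dataset names are hypothetical; in practice, tools like OpenLineage capture these edges from pipeline runs instead of having them declared by hand.

```python
from collections import defaultdict, deque


class LineageGraph:
    def __init__(self) -> None:
        self._downstream: dict[str, set[str]] = defaultdict(set)
        self._upstream: dict[str, set[str]] = defaultdict(set)

    def add_edge(self, source: str, target: str) -> None:
        """Record that `target` is derived from `source`."""
        self._downstream[source].add(target)
        self._upstream[target].add(source)

    @staticmethod
    def _walk(start: str, edges: dict[str, set[str]]) -> set[str]:
        """Breadth-first search collecting every node reachable from `start`."""
        seen: set[str] = set()
        queue = deque([start])
        while queue:
            node = queue.popleft()
            for nxt in edges.get(node, ()):
                if nxt not in seen:
                    seen.add(nxt)
                    queue.append(nxt)
        return seen

    def impacted_by(self, dataset: str) -> set[str]:
        """Everything downstream: what may break if `dataset` changes."""
        return self._walk(dataset, self._downstream)

    def origins_of(self, dataset: str) -> set[str]:
        """Everything upstream: where `dataset` ultimately comes from."""
        return self._walk(dataset, self._upstream)


lineage = LineageGraph()
lineage.add_edge("crm.customers", "staging.customers")
lineage.add_edge("erp.orders", "staging.orders")
lineage.add_edge("staging.customers", "marts.revenue_by_segment")
lineage.add_edge("staging.orders", "marts.revenue_by_segment")
lineage.add_edge("marts.revenue_by_segment", "dashboard.exec_kpis")

print(sorted(lineage.impacted_by("erp.orders")))
# ['dashboard.exec_kpis', 'marts.revenue_by_segment', 'staging.orders']
print(sorted(lineage.origins_of("dashboard.exec_kpis")))
# ['crm.customers', 'erp.orders', 'marts.revenue_by_segment', 'staging.customers', 'staging.orders']
```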

Data Monitoring

Data observability tools like Monte Carlo help companies stay confident in their data quality.

Great Expectations and Soda can detect issues such as schema drift and late-arriving data before they reach the analysis stage.

Lastly, query performance monitoring, such as the tooling built into platforms like Databricks, helps companies keep the system fast and responsive as workloads grow.
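Checks like these are usually declared in tools such as Great Expectations or Soda, but the underlying logic is simple enough to sketch in plain Python. The example below compares an incoming batch’s schema against an expected one and flags data whose latest load is older than an agreed freshness window. The expected schema, the two-hour threshold, and the function names are assumptions for illustration, not either tool’s API.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

# Hypothetical data contract for an "orders" feed.
EXPECTED_SCHEMA = {"order_id": "string", "amount": "double", "created_at": "timestamp"}
# Assumed freshness SLA: new data should land at least every two hours.
MAX_DELAY = timedelta(hours=2)


def schema_drift(observed: dict[str, str],
                 expected: dict[str, str] = EXPECTED_SCHEMA) -> dict[str, list[str]]:
    """List columns that were added, removed, or changed type."""
    shared = observed.keys() & expected.keys()
    return {
        "added": sorted(observed.keys() - expected.keys()),
        "removed": sorted(expected.keys() - observed.keys()),
        "type_changed": sorted(c for c in shared if observed[c] != expected[c]),
    }


def is_late(last_loaded_at: datetime, now: Optional[datetime] = None,
            max_delay: timedelta = MAX_DELAY) -> bool:
    """True if the newest load is older than the freshness window."""
    if now is None:
        now = datetime.now(timezone.utc)
    return now - last_loaded_at > max_delay


drift = schema_drift({"order_id": "string", "amount": "string", "channel": "string"})
print(drift)  # {'added': ['channel'], 'removed': ['created_at'], 'type_changed': ['amount']}

now = datetime(2024, 5, 1, 12, 0, tzinfo=timezone.utc)
print(is_late(datetime(2024, 5, 1, 9, 30, tzinfo=timezone.utc), now=now))  # True
```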

Data Security

Without established security practices, the data platform becomes a liability rather than an asset.

It’s crucial that only authorized users can access the data and that sensitive information is well protected.

Here are the essential practices you need to enforce to achieve this:

- Use centralized policy engines like Apache Ranger and AWS Lake Formation to eliminate the risk of ad hoc permissions.

- Adopt role-based access control (RBAC) to manage user permissions.

- Encrypt or tokenize sensitive information so teams can use data safely.

- Enforce schema integrity with tools like Delta Lake, whose schema enforcement rejects writes that don’t match a table’s schema, to prevent unintended data changes.
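Two of these practices can be sketched in a few lines of Python: a role-based permission check, and tokenization of an email column using a keyed HMAC-SHA256 hash, which produces the same token for the same input so teams can still join and count on the column without seeing raw values. This is a one-way variant; vault-based tokenization, which authorized services can reverse, works differently. The roles, permissions, and hard-coded key are hypothetical; policy engines like Apache Ranger or AWS Lake Formation enforce rules centrally, and a real key would come from a secrets manager.

```python
import hashlib
import hmac

# Hypothetical role -> permission mapping.
ROLE_PERMISSIONS = {
    "analyst": {"read:marts"},
    "data_engineer": {"read:marts", "read:staging", "write:staging"},
    "admin": {"read:marts", "read:staging", "write:staging", "read:pii"},
}

# Illustration only: in practice, load this key from a secrets manager.
TOKENIZATION_KEY = b"replace-with-a-managed-secret"


def is_allowed(roles: set[str], permission: str) -> bool:
    """Grant access only if one of the user's roles carries the permission."""
    return any(permission in ROLE_PERMISSIONS.get(role, set()) for role in roles)


def tokenize(value: str, key: bytes = TOKENIZATION_KEY) -> str:
    """Deterministic, one-way token: same input -> same token, raw value hidden."""
    normalized = value.strip().lower().encode("utf-8")
    return hmac.new(key, normalized, hashlib.sha256).hexdigest()


def read_customer(row: dict, roles: set[str]) -> dict:
    """Return the row, tokenizing the email unless the caller may read PII."""
    if not is_allowed(roles, "read:marts"):
        raise PermissionError("role lacks read:marts")
    if is_allowed(roles, "read:pii"):
        return dict(row)
    return {**row, "email": tokenize(row["email"])}


row = {"customer_id": "C-1", "email": "Ana@Example.com"}
print(read_customer(row, {"analyst"}))  # email replaced by a 64-character hex token
print(read_customer(row, {"admin"}))    # email visible
```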

Data Platform Post-implementation Best Practices

Building the data platform is only half of the equation. The other half is making sure the system performs consistently after implementation.

Start with a controlled pilot to test usability in a real, small-scale environment. Make sure to gather feedback from the target user group and address their specific concerns early.

Then, instead of running daunting formal training sessions, integrate learning into employees’ daily workflows.

Base training on real projects rather than hypothetical scenarios.

Next, monitor impact by analyzing specific metrics that are tied to both business outcomes and platform performance.

This means tracking user adoption and data quality benchmarks, alongside revenue and operational efficiency gains.

Finally, treat the modern data platform as a constantly evolving ecosystem that benefits from adopting new technologies and scaling over time.

What It Takes to Make a Data Platform That Endures

Every functional and long-lasting data platform is built on the following non-negotiables:

- Data flows through a consistent, unified access layer to eliminate fragmentation.

- Business meaning is embedded into data through a semantic layer, making it understandable to end users, not just the engineers who built the platform.

- All types of data are supported from the start, whether text, images, or audio.

- Datasets are well documented, enriched with metadata, and treated as reusable products.

- The platform evolves continuously, adapting to changing business needs and user feedback.

- Governance and security are built into the architecture, ensuring all data is safe and validated at every stage.
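To make the semantic-layer idea concrete, the sketch below defines business metrics once, with plain-language descriptions, and compiles them into SQL so every dashboard or AI application calculates a metric like net revenue the same way. The metric definitions, table, and column names are hypothetical, and real semantic layers such as Cube use their own modeling formats; this only illustrates the concept.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    name: str          # business-facing name
    description: str   # what the number means, for end users
    expression: str    # SQL aggregate over the source table
    table: str


# Hypothetical shared definitions, maintained once for all consumers.
METRICS = {
    "net_revenue": Metric(
        name="net_revenue",
        description="Order revenue after refunds, in USD.",
        expression="SUM(amount) - SUM(refund_amount)",
        table="marts.orders",
    ),
    "order_count": Metric(
        name="order_count",
        description="Number of distinct confirmed orders.",
        expression="COUNT(DISTINCT order_id)",
        table="marts.orders",
    ),
}


def compile_query(metric_name: str, group_by: list[str]) -> str:
    """Turn a metric request into SQL.

    Assumes group_by columns come from a trusted list; no SQL escaping is done.
    """
    metric = METRICS[metric_name]
    dims = ", ".join(group_by)
    select = f"{dims}, " if dims else ""
    sql = f"SELECT {select}{metric.expression} AS {metric.name} FROM {metric.table}"
    if dims:
        sql += f" GROUP BY {dims}"
    return sql


print(compile_query("net_revenue", ["region", "order_month"]))
```

Because consumers request `net_revenue` by name instead of writing their own SQL, a change to the definition propagates everywhere at once.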

For your AI ambitions to pay off, the data foundation needs to be built with clear intent.

Forcing a one-size-fits-all solution onto different business problems is a sure way to turn your data platform into a liability.

Ultimately, success with AI depends less on the tools organizations adopt and more on the strength of the data foundations they build.

Getting it right depends on whether your implementation partner understands your business goals in detail and knows how to translate them into platform infrastructure.