Visibility in AI Search: Key Findings

- AI-powered search now drives more discovery than traditional search engines, making retrievability a critical content metric.

- Structure, clarity, and independence of ideas determine whether content is surfaced in AI responses.

- Embedding retrievability into every stage of content production helps teams adapt to zero-click search and maintain visibility.

Search engines like Google and Yahoo used to be the only way to conduct online searches. Today, these engines are facing stiff competition from AI.

According to a report from Search Engine Land, 47% of people use search engines to look up simple information, while 28% prefer AI chatbots and 23% prefer AI search.

Although search engines still hold the largest single share, it’s telling that a combined 51% of people (28% plus 23%) default to AI-powered tools.

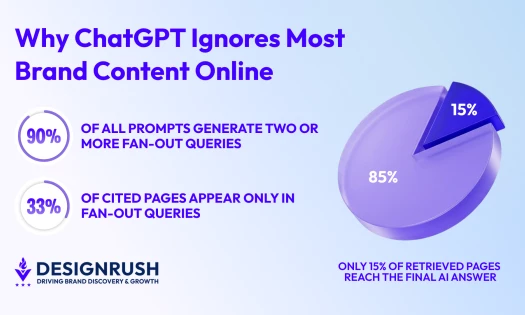

As AI search engines like Google AI Mode, Perplexity, and ChatGPT reshape how people discover information, retrievability has emerged as a criterion distinct from indexability and keyword optimization.

This means content must now be structured to allow AI systems to interpret, extract, and reuse it effectively in summarized responses.

That’s why Victorious, an SEO agency focused on AI-driven search, has developed an internal scoring system to evaluate retrievability.

The Role of a Scoring Framework

According to research from Bain & Company, the rise of AI search and zero-click results has reduced organic web traffic by roughly 15% to 25% across the board.

It’s the digital version of a drought, as once-fertile traffic streams now trickle, leaving marketers scrambling to irrigate visibility.

This is why monitoring retrievability through a scoring framework becomes so important for marketers and SEO specialists.

The scoring system identifies structural patterns that support retrieval and reuse, highlights improvement opportunities, and aligns content teams around a shared standard for AI visibility.

It was designed to complement, not replace, conventional SEO metrics. Specifically, the framework aims to:

- Promote formatting patterns that support reuse in AI-generated answers.

- Evaluate section-level clarity.

- Prioritize edits that improve visibility in AI search.

- Create a shared vocabulary across strategy, editorial, and technical teams.

Victorious built its framework on five core principles, each of which translates into a specific scoring element.

1. Modular Design

Each section of content should function as a self-contained answer, recognizing that AI retrieval often happens at the chunk level.

The scoring system evaluates this through header alignment: whether H2s and H3s reflect real-world queries and provide semantic clarity.

2. Summary-First Structure

Sections should begin with a clear answer to the implied question. This is measured by assessing whether each section opens with a direct, concise answer.

3. Lexical Grounding

Precise, clearly defined language that directly names key topics allows AI systems to interpret, relate, and reuse content.

The framework scores lexical clarity: whether terminology is specific and avoids vague pronouns or references that depend on previous context.
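One rough way to approximate a lexical-clarity check is to flag sentences that open with a context-dependent pronoun. The sketch below is a hypothetical heuristic, not Victorious's actual scoring logic; the `VAGUE_OPENERS` list and the `vague_opening_sentences` function are assumptions made for illustration.

```python
import re

# Hypothetical list of context-dependent openers (an assumption, not part of
# the published framework).
VAGUE_OPENERS = {"this", "that", "these", "those", "it", "they", "such"}


def vague_opening_sentences(section_text: str) -> list[str]:
    """Return the sentences whose first word is a context-dependent pronoun."""
    # Naive sentence split: break after ., !, or ? when followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", section_text.strip())
    flagged = []
    for sentence in sentences:
        words = re.findall(r"[A-Za-z']+", sentence)
        if words and words[0].lower() in VAGUE_OPENERS:
            flagged.append(sentence)
    return flagged


sample = (
    "Retrievability measures reuse by AI systems. "
    "It matters for visibility. "
    "This is why teams score it."
)
print(vague_opening_sentences(sample))
```

A flagged sentence is only a prompt for an editor to check whether the pronoun's referent is named nearby; plenty of sentences that start with "This" are perfectly clear in context.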

4. Independence from Context

AI systems favor content that stands alone without relying on context from other sections.

This principle is evaluated through chunk independence, defined as whether content blocks can be reused without additional explanation.

5. Semantic Reinforcement

Internal links and related topic groupings help AI recognize how concepts connect and boost content discoverability.

The scoring system measures the quality and relevance of internal linking between related sections.


Each section of content is scored against these elements, offering insight into areas that can benefit from structural improvements.

However, the retrievability score doesn’t act as a final grade.

Instead, the team uses it to prioritize edits based on which sections need structural attention to improve AI visibility.
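To make the prioritization idea concrete, here is a minimal sketch of how per-section scores could be turned into an edit queue. The article does not publish the scale or weighting Victorious uses, so the 0 to 2 scale, the equal weighting, the `SectionScore` class, and the `edit_queue` function are all assumptions.

```python
from dataclasses import dataclass


@dataclass
class SectionScore:
    """Scores for one content section; the 0-2 scale per element is assumed."""
    heading: str
    header_alignment: int
    summary_first: int
    lexical_clarity: int
    chunk_independence: int
    internal_linking: int

    def total(self) -> int:
        # Equal weighting of the five elements is an assumption.
        return (
            self.header_alignment
            + self.summary_first
            + self.lexical_clarity
            + self.chunk_independence
            + self.internal_linking
        )


def edit_queue(scores: list[SectionScore]) -> list[SectionScore]:
    """Order sections so the lowest-scoring ones are edited first."""
    return sorted(scores, key=lambda s: s.total())


# Made-up example sections and scores, for illustration only.
sections = [
    SectionScore("What is retrievability?", 2, 2, 2, 1, 1),
    SectionScore("Our approach", 0, 1, 1, 0, 1),
    SectionScore("How to audit content", 2, 1, 2, 2, 0),
]

for section in edit_queue(sections):
    print(section.total(), section.heading)
```

Sorting by total treats the score as a triage signal rather than a grade: the output is an ordered to-do list, not a pass or fail verdict.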

Lessons From Applying the Framework at Scale

After applying the scoring model across hundreds of pages, Victorious uncovered several patterns:

- Strong writing doesn’t guarantee high retrievability. Well-crafted content can still underperform if it isn’t well structured or formatted for reuse.

- Top-ranked pages don’t always appear in AI results. Pages with clean formatting and clear sections often get cited over more authoritative content without a retrievable structure.

- Minor structural fixes can make a big difference. Updating headings or adding summary-first sentences can sometimes improve AI visibility more than a complete rewrite.

- Some formats naturally perform better in AI search. FAQs and how-to pages tend to score higher by design. Editorial content usually needs more structural editing to reach the same level of visibility.

How to Operationalize Retrievability Scores

Rather than treating retrievability as a post-publish metric, Victorious embedded the scoring system throughout its content process:

- Pre-production: Content audits identify structural gaps that could limit AI visibility before writing begins.

- Planning phase: Briefs and outlines now include retrievability targets alongside traditional SEO and editorial goals.

- Quality assurance: Reviews assess whether each section can stand alone and be effectively reused by AI systems.

- Performance tracking: Post-launch analysis compares retrievability scores with actual AI citation frequency, creating a feedback loop that refines the scoring model.
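The feedback loop in the performance-tracking step could start with something as simple as a correlation between scores and observed citations. The sketch below computes a Pearson correlation by hand; the `pearson` helper and the sample numbers are hypothetical, and the article does not say which statistic, if any, Victorious uses.

```python
from math import sqrt


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient between two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length with at least 2 points")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / sqrt(var_x * var_y)


# Made-up data: one retrievability score and one AI citation count per page.
retrievability_scores = [3, 5, 7, 8, 10]
ai_citations = [0, 1, 1, 3, 4]

r = pearson(retrievability_scores, ai_citations)
print(f"correlation between score and citations: {r:.2f}")
```

A weak or negative correlation on real data would suggest the scoring elements need reweighting. A handful of pages is too few to draw conclusions from, so a check like this is only meaningful across a large sample.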

Shift Your Visibility Strategy

The shift toward AI-powered discovery has changed what it takes to surface content at the right moment in the user journey.

Unfortunately, not every company is making the operational changes needed to keep driving results for its clients.

Success now depends less on rank and more on whether content can be reused by the engines generating answers.

Retrievability scoring provides teams with a way to meet that shift with intention, by transforming structure into a signal that drives visibility.