Key Takeaways:

- OpenAI is facing a GDPR complaint over ChatGPT generating false and defamatory information, raising serious legal and ethical concerns.

- Privacy advocacy group Noyb argues that AI-generated misinformation violates GDPR, which requires accurate personal data and provides individuals with rights to rectification.

- This case could lead to significant regulatory action, including fines and stricter AI governance measures.

Can businesses trust AI when even top models like ChatGPT are accused of spreading harmful misinformation?

OpenAI is under scrutiny after privacy advocacy group Noyb filed a complaint with the Norwegian Data Protection Authority over ChatGPT’s generation of false personal information.

The case raises concerns about AI misinformation and compliance with the European Union’s General Data Protection Regulation (GDPR), which mandates that personal data be accurate.

🚨 Today, we filed our second complaint against OpenAI over ChatGPT hallucination issues

👉 When a Norwegian user asked ChatGPT if it had any information about him, the chatbot made up a story that he had murdered his children.

Find out more: https://t.co/FBYptNVfVz pic.twitter.com/kpPtY1ps25

— noyb (@NOYBeu) March 20, 2025

The complaint stems from an incident in which ChatGPT falsely claimed that Norwegian citizen Arve Hjalmar Holmen had been convicted of murdering his children.

While some personal details in the response were accurate, the fabricated allegations were damaging.

Noyb argues that such errors violate GDPR, which grants individuals the right to correct or delete inaccurate personal data.

Joakim Söderberg, a data protection lawyer at Noyb, stressed that personal data has to be accurate.

"You can’t just spread false information and then add a small disclaimer saying that everything may not be true."

GDPR breaches can lead to fines of up to €20 million or 4% of a company’s global annual turnover, whichever is higher.
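To put the fine ceiling in concrete terms, here is a minimal sketch of the upper bound. It assumes the top tier in GDPR Article 83(5), under which the cap is the higher of €20 million or 4% of worldwide annual turnover. The function name and the turnover figures are hypothetical and do not describe any real company.

```python
def gdpr_max_fine_eur(global_annual_turnover_eur: float) -> float:
    """Upper bound of a top-tier GDPR fine: the higher of EUR 20 million
    or 4% of worldwide annual turnover (Art. 83(5))."""
    four_percent = global_annual_turnover_eur * 4 / 100
    return max(20_000_000.0, four_percent)


# Hypothetical turnover figures, for illustration only.
for turnover in (100_000_000, 1_000_000_000, 10_000_000_000):
    cap = gdpr_max_fine_eur(turnover)
    print(f"turnover EUR {turnover:,} -> maximum fine EUR {cap:,.0f}")
```

This is a ceiling, not a prediction. Regulators set the actual amount case by case, as the much smaller Italian fine shows.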

OpenAI’s past GDPR issues, including a €15 million fine in Italy for improper data processing, indicate that European regulators are closely monitoring AI privacy concerns.

This case could prompt stricter AI regulations, forcing OpenAI and other AI firms to improve data accuracy.

Earlier regulatory interventions have already led to changes in how ChatGPT handles user data and transparency features.

Business and AI Governance Challenges

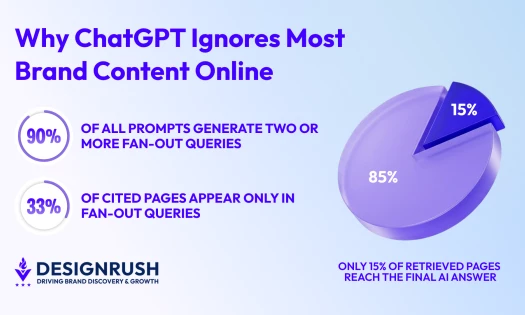

AI models like ChatGPT rely on probabilistic text generation, which makes them prone to “hallucinations,” in which they produce plausible-sounding but incorrect information.
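A toy sketch can make the mechanism concrete. It assumes a model that simply picks the next word at random according to a probability table. The prompt, the candidate words, and the probabilities below are invented for illustration and do not come from ChatGPT or any real model.

```python
import random

# Hypothetical next-word probabilities for the prompt
# "The capital of Australia is". All numbers are invented.
next_word_probs = {"Canberra": 0.6, "Sydney": 0.3, "Melbourne": 0.1}


def sample_word(probs: dict[str, float], rng: random.Random) -> str:
    """Draw one word at random, weighted by its probability."""
    words = list(probs)
    weights = list(probs.values())
    return rng.choices(words, weights=weights, k=1)[0]


rng = random.Random(0)  # fixed seed so repeated runs give the same draws
samples = [sample_word(next_word_probs, rng) for _ in range(1000)]

for word in next_word_probs:
    print(f"{word}: chosen {samples.count(word)} times out of {len(samples)}")
```

In this toy setup, the incorrect answers are fluent and confident-sounding, and they are still chosen a substantial share of the time. Nothing in the sampling step checks whether the selected word is true.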

While OpenAI has introduced safeguards, this case highlights the limitations of current AI accuracy controls.

Noyb has cited other false allegations made by ChatGPT, including individuals wrongly linked to corruption and child abuse.

AI hallucinations? 🤯 ChatGPT once fabricated fake legal citations. Google's AI claimed astronauts played with cats on the moon. Why does AI make things up—and why do our brains do the same thing? #AI #ArtificialIntelligence #FalseMemories https://t.co/6MRji7aJ0v

— Nancy Jones (@yanqui) March 13, 2025

These errors pose reputational risks and raise questions about AI’s reliability in business and legal contexts.

The growing scrutiny over AI-generated misinformation highlights the need for businesses to implement robust data governance and compliance measures when deploying AI tools.

Companies investing in AI should prioritize accuracy and transparency to mitigate reputational risks and regulatory penalties.