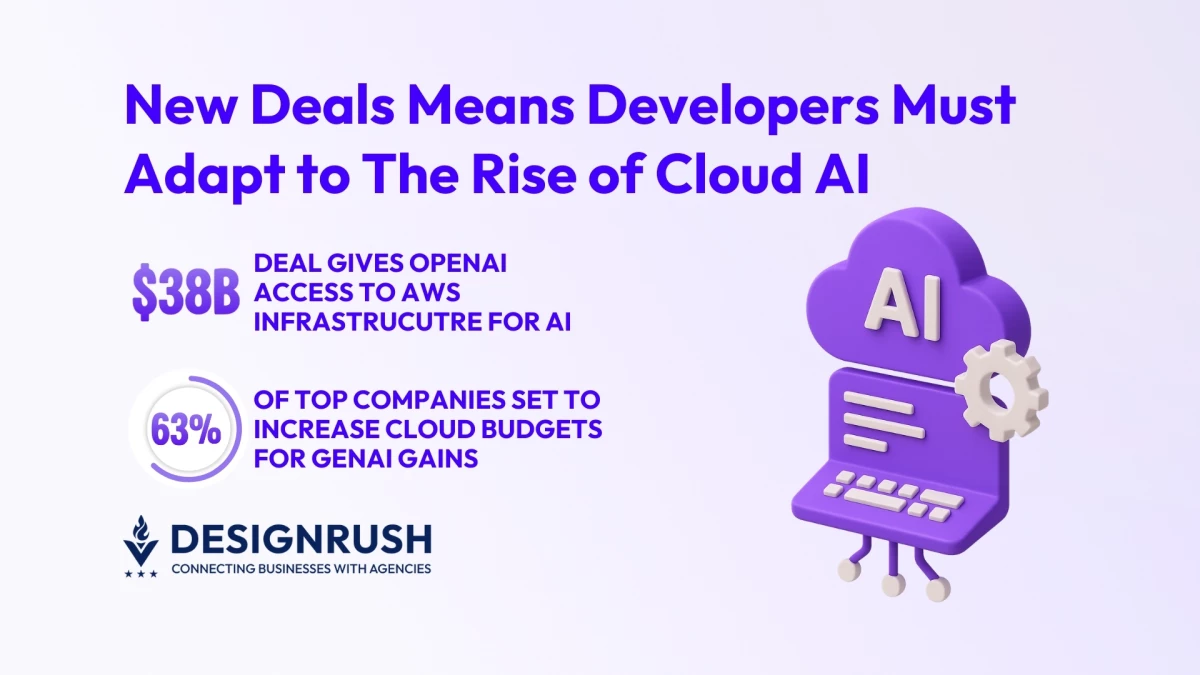

OpenAI x AWS Cloud Expansion: Key Findings

- OpenAI’s $38B deal with AWS unlocks massive GPU and CPU access, giving developers more stable compute for both training and inference.

- AWS will supply “hundreds of thousands” of NVIDIA GPUs plus the ability to scale to “tens of millions” of CPUs through EC2 UltraServers.

- The agreement is set to shake up AI infrastructure strategies, where predictable cloud capacity becomes just as important as cutting-edge models.

AI infrastructure just hit a new phase.

With OpenAI signing a $38 billion, seven-year agreement with AWS, the company gains access to large-scale compute clusters running NVIDIA GB200/GB300 GPUs and vast CPU capacity through EC2 UltraServers.

But the impact goes beyond hardware.

This deal represents a new era in how AI software teams plan, build, and scale applications.

Developers are no longer blocked by GPU scarcity, and the challenge now is designing systems that actually use scale efficiently.

“Scaling frontier AI requires massive, reliable compute,” said OpenAI CEO Sam Altman.

“Our partnership with AWS strengthens the broad compute ecosystem that will power this next era and bring advanced AI to everyone.”

And the timing matters.

Cloud-AI demand is rising, and infrastructure investment is growing rapidly.

According to statistics compiled by Exploding Topics, 63% of top-performing companies are set to increase their cloud budgets to better take advantage of GenAI.

This matters because meeting global AI compute demand could require multi-trillion-dollar investments in data centers over the coming years.

Whether developers and AI product teams win will now depend not just on access to chips, but on infrastructure architecture, data strategy, and deployment automation.

How Developers Should Rethink Infrastructure

1. Build Architectures That Scale Linearly

With enterprise-class GPU clusters now accessible, developers must design systems that actually scale.

This includes multi-node workloads, clean API layers, efficient model routing, and reliable data pipelines.

AWS will use tightly connected GPU clusters with GPUs networked through EC2 UltraServers.

View this post on Instagram

This enables low-latency, high-throughput workloads that support both inference and training.

Without a scalable architecture, even endless compute becomes wasted capacity.

As AI models grow in complexity, companies are quickly realizing that raw compute alone isn’t enough, and the way workloads are structured and deployed can make or break performance.

“Companies navigating large-scale AI workloads need infrastructure that scales efficiently without bottlenecks,” said Malay Parekh, CEO of Unico Connect.

"We’ve observed that proper architecture design, including clustered GPU deployment and optimized data pipelines, directly impacts model performance and developer productivity.

The firms that plan compute strategy alongside software architecture can scale confidently as demand grows.”

2. Treat Compute as a Long-Term Strategic Asset

This deal shows that compute isn’t just a short-term hurdle anymore.

It’s now a key part of how companies plan and build AI.

Global demand for AI infrastructure is fueling large-scale investment.

In fact, one recent analysis showed that supplying AI-related data centers may require up to $5.2 trillion to $7.9 trillion in capital expenditures by 2030.

AI demand alone will require $5.2 trillion in investment by 2030 according to McKinsey

byu/kurrekonstig instocks

Teams that model compute costs early, understand workload patterns, and build operations around them will scale reliably.

Those who don’t will hit unpredictable costs, or worse, performance degradation.

3. Build for Both Training and Inference From Day One

AWS infrastructure under the deal will support both model training and real-time inference.

This means products, prototypes, and production must coexist.

Developers should structure their stack so they can:

- Test models in isolated environments

- Deploy safely

- Monitor cost and performance

- Scale inference without affecting training jobs

These are especially important as AI products evolve toward agentic behavior, multimodal understanding, and frequent updates.

“At Unico Connect, we’re seeing the same shift play out across our clients,” shared Parekh.

“Teams want control, predictability, and infrastructure that doesn’t punish them for scaling.

The goal is to build a foundation that keeps engineering velocity high while keeping long-term costs stable. This is where architecture decisions matter more than ever.”

What This Means for AI Product Builders

Most AI product issues come from messy infrastructure.

Teams often pile new models and features on top of brittle systems, and then scramble when latency, bugs, or cloud bills spike.

What this AWS–OpenAI deal highlights is fairly straightforward: predictability wins.

When compute is stable and scalable, teams can focus on user experience, feature quality, and product value rather than wrangling GPUs or firefighting infrastructure problems.

The companies that benefit from this new AI era won’t necessarily have the flashiest features.

They’ll be the ones with clean pipelines, reliable operations, and infrastructure that hold up under growth.

Believe it or not, good AI products don’t shout.

They run smoothly, quietly, and at scale.