WARNING: This editorial includes mentions of self-harm.

The Wrongful Death Lawsuit Against OpenAI: Key Findings

- A devastating event: The parents of a 16-year-old filed a lawsuit after claiming ChatGPT became their son’s “suicide coach,” encouraging harmful behavior in the months before his death.

- Gaps in safeguards: OpenAI has acknowledged that its protections can break down during long conversations, while the suit argues that the company rushed to launch without enough safety measures.

- Real-time fixes: In response, OpenAI pledged to add parental controls and crisis tools, aiming to make ChatGPT safer, especially for teens.

- Brands on the hook: This case shows how companies using AI should audit outputs, set limits on what AI can do, define hand-offs to humans, and treat every AI reply as their brand voice.

Quick listen: The ChatGPT lawsuit shows why brands must audit AI safety, in under 3 minutes.

On Tuesday, Adam Raine’s family filed a wrongful death lawsuit against OpenAI and its CEO, Sam Altman.

The 16-year-old spent months confiding in ChatGPT, and the chatbot allegedly offered self-harm instructions and even offered to write his suicide note, according to court documents.

Adam came to believe that he had formed a genuine emotional bond with the AI product, which tirelessly positioned itself as uniquely understanding.

The progression of Adam’s mental decline followed a predictable pattern that OpenAI’s own systems tracked but never stopped," Matthew and Maria Raine's complaint stated.

It's being described as a "suicide coach" scenario, which has become a deeply chilling possibility.

OpenAI acknowledged that its safeguards may weaken during very long chats.

The company also said it is working on new features, including parental controls and crisis response options, to better protect teens.

"We’re also exploring making it possible for teens (with parental oversight) to designate a trusted emergency contact.

That way, in moments of acute distress, ChatGPT can do more than point to resources: it can help connect teens directly to someone who can step in," the company said in a news release.

These measures may help, but they also highlight just how much responsibility comes with every AI interaction.

When an AI takes on the role of a trusted companion, its words carry real weight, and this weight falls on the brand deploying it.

Why Brands Should Pay Attention

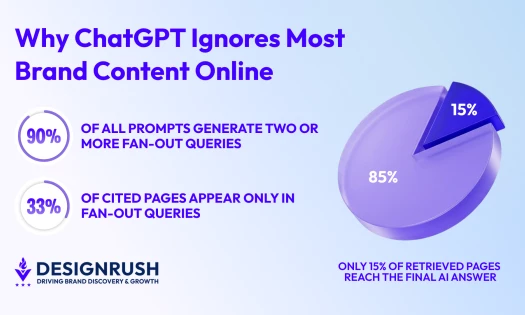

AI is emerging everywhere. In retail chat support, wellness apps, banking, and even 911 call triage.

People naturally attribute AI behavior to your brand, not the underlying model.

If a customer confides in your bot and gets a harmful response, the fallout lands squarely on your reputation, no matter whose tech you licensed.

The company offers an AI voice assistant that helps 911 centers offload non-emergency call volume. https://t.co/zRtGab5gaH

— TechCrunch (@TechCrunch) August 27, 2025

When AI is positioned as a helper, confidant, or coach, it gains emotional weight.

And when it fails, the blame doesn’t go to “the algorithm.” It lands on the name behind the interface.

We’ve seen what happens when tech is technically correct but socially tone-deaf.

Snapchat's "My AI" bot intended for harmless fun lost trust overnight when it dispensed inappropriate advice to teens and allegedly started taking a video without consent.

TikToker claims Snapchat’s My AI recorded her with out her permission then sent it to everyone. pic.twitter.com/yB3aSBoDB5

— DramaAlert (@DramaAlert) August 16, 2023

Now, courts are signaling that AI-generated harm can become legal liability.

In a case similar to Adam's involving Character.AI in May, a judge rejected the notion that bot responses are free speech.

Notably, the court refused to dismiss the wrongful death claim.

These cases are huge warnings that prove the legal liability and ethical responsibility of AI use, especially for brands that embed the technology in their business.

What Brands Should Do Right Now

Here’s your checklist for protecting your brand and your audience from becoming another headline:

- Audit your chatbot: Run conversations, including vulnerable or emotional topics, and watch for breakdowns in tone or safety over time.

- Draw strong boundaries: Never let AI replace counselors, crisis responders, or experts. Program in a gentle refusal or redirection policy.

- Build escalation paths: Define clear triggers that hand off to humans when a user’s emotional tone or phrasing crosses the line.

- Be transparent: Label AI clearly. Let users know they’re talking to a bot and deliver easy options for feedback or a human fallback.

- Own every output: Your bot’s responses are your brand’s statements. Log and review them regularly. If it misfires, you must fix it.

These steps don’t guarantee perfection, but they force responsibility that counts.

What happened to Adam is definitely heartbreaking, but it’s also a call for leadership. It’s those who design with empathy and safety in mind who will actually lead.

Imagine a wellness app that says: “Our chatbot is friendly, but if you're feeling unsafe, it'll connect you to a person immediately.”

Or a financial tool that understands when to suggest connecting with a real advisor.

OpenAI said it is building this kind of feature only after the lawsuit was filed.

And this is a reminder that safeguards added too late can be the difference between a bot that feels unsafe and one that builds trust.

Studies have shown that consumers are paying attention and rewarding thoughtfulness.

A 2024 PwC report found 85% of people are more likely to trust companies that use AI ethically, and this trust can become a moat.

AI is no longer a background feature, it’s your brand voice.

In a sensitive moment, when someone’s wearing their emotions on the screen, your AI's words will define trust or betray it.

Don’t let automation erode your humanity. Audit your AI, and limit its role. Make sure every interaction feels like someone who cares is on the line.

If you can do this, your brand won’t just avoid headlines, it'll earn lasting trust and loyalty.

If you or someone you know is in crisis, dial 988 to connect with the Suicide and Crisis Lifeline. You can also visit SpeakingOfSuicide.com/resources for more support.