AI-Readiness in Business Leadership: Key Findings

- Fewer than a third of large transformation projects deliver lasting gains, a sign that AI success depends on real ownership and team buy-in.

- The companies seeing real impact connect AI to clear business goals and make someone accountable for results from day one.

- When teams can experiment safely, adoption happens faster and delivers results everyone can see.

AI is evolving at an incredible pace, though not always in the right direction.

Leaders today are juggling huge expectations. If artificial general intelligence (AGI) really is just around the corner, it could reshape entire economies and societies.

As Mo Gawdat, former Google X executive, puts it, this is “one of the most important transitions humanity will ever face.”

On the other hand, evidence of consistent business value remains patchy. Pilots often make headlines but rarely scale. Many executives are realizing that adopting AI is less about racing ahead and more about building readiness that lasts.

McKinsey’s transformation research shows that fewer than one in three large-scale organizational changes succeed at both improving and sustaining performance.

AI, with its technical and ethical complexities, raises that bar even higher.

“Most leaders are being asked to make AI decisions with more uncertainty than they're used to,” notes Emily Slota, president of Hugo, a company that helps organizations deploy AI responsibly.

“It’s not like past technology shifts where benchmarks were clearer. Here, the data is still evolving while the expectations are accelerating.”

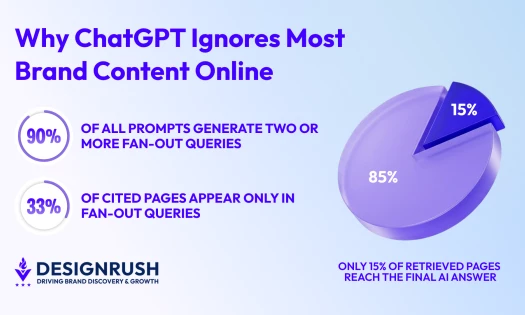

This tension between urgency and uncertainty often produces what experts call AI theater: projects that signal innovation without delivering impact.

Editor's Note: This is a sponsored article created in partnership with Hugo.

The Shift From AI Theater to AI Readiness

AI theater happens when companies chase appearances instead of results.

It shows up in chatbots without purpose, automation without oversight, and “AI-first” visions launched before ownership is defined.

True readiness, by contrast, is slower and steadier. It depends on:

- Clear governance — defining who owns AI initiatives.

- Cultural participation — ensuring every level of the organization can test and learn.

- Measurement and iteration — treating adoption as an evolving capability, not a one-time project.

“The thing that people get wrong,” Slota says, “is that they don't enable participation up and down the chain in actually using AI.”

Executives who understand this build systems that learn over time.

Questions Every Executive Should Ask About AI Readiness

Before investing in AI tools or drafting another “innovation roadmap,” leaders should step back and assess the foundations.

These four questions reveal whether your organization is ready to build sustainably or is just performing AI theater.

Question #1: Who Owns AI?

AI readiness starts with ownership. Someone must be empowered to make quick, informed decisions about experimentation, guardrails, and results.

Without clear accountability, AI projects sprawl across departments, creating risk without responsibility. Ownership ensures alignment between AI use and business goals.

Ownership also clarifies how AI fits into the broader operating model: where it adds value, who governs it, and how success is measured.

Question #2: Where Does AI Add Value?

Executives should be able to map AI’s contribution directly to the P&L.

The task is to understand where automation, prediction, or personalization delivers measurable outcomes.

“Businesses that are ready to leverage AI have a certain risk appetite; they are willing to put some money on the table to go after it,” Slota says.

When value isn’t clearly defined, pilots tend to drift toward vanity metrics: activity that looks innovative but doesn’t translate into results.

Question #3: How Do We Operate Under Uncertainty?

A model that works today might underperform in six months. This is why readiness requires adaptive operations: structures that encourage regular review and recalibration.

These short feedback loops allow organizations to adjust without overreacting, maintaining discipline even when results deviate from expectations.

The goal is to stay agile enough to respond when outcomes change.

Question #4: Are We Investing Before Returns?

AI maturity demands front-loaded investment in calibration, oversight, and reskilling. This can feel uncomfortable for leaders accustomed to fast ROI.

But skipping these steps risks far greater costs later: bias in outputs, security vulnerabilities, and untrained teams making high-stakes decisions.

“Machines will scale,” Slota notes, “but primary-source human data and human oversight will only grow in value.”

Human judgment must remain at the core of every deployment decision.

Create a Culture That Enables, Not Inhibits

Even the strongest frameworks will fail if the culture resists change. Teams need psychological safety to test AI systems and the clarity to know what’s off-limits.

“Executives must clearly articulate their expectations for how to use AI. So the first thing to do is to set ground rules,” says Slota.

That starts with three practices:

- Model curiosity and caution. Leaders should test AI tools themselves and share both wins and failures.

- Be transparent about risks. Define where experimentation stops.

- Hold teams accountable. Reward accuracy and ethical judgment, not just speed.

“If somebody comes to me and says, ‘I revolutionized how I do this thing and saved 80% of the time,’ I’m still going to probe, did you get the right answer or not?” Slota explains.

In AI adoption, speed means nothing without validity.

The companies that will thrive are those cultivating the discipline to learn from each iteration, building systems that are safe, ethical, and enduring.
