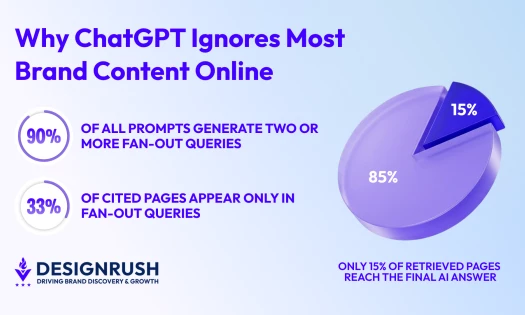

AI Pilot Failure: Key Findings

- 45% of consumers say AI failed to understand their issue, proving AI breakdowns are highly visible and a frequent source of friction in live CX.

- Liveops launched LiveNexus to close the pilot-to-scale gap, orchestrating AI and human agents in live environments with built-in governance and accountability.

- Only 17% of consumers want brands to use more AI, signaling deployment fatigue rather than rejection of the technology itself.

AI was supposed to make operations faster, cheaper, and more consistent.

Instead, many enterprises and contact centers are stuck explaining why their AI pilots never left the launchpad.

This gap between experimentation and real-world performance is becoming impossible to ignore.

In fact, a 2025 MIT study found that 95% of GenAI pilots failed to deliver measurable business impact.

The disconnect can also be clearly seen in contact centers.

According to a report from Liveops, 45% of consumers claim that AI didn’t understand their issues, while 55% of them ultimately escalated their interaction to a human agent.

Unfortunately, that mismatch between what was promised and actual outcomes leaves many CX leaders questioning whether their AI investments were worth it.

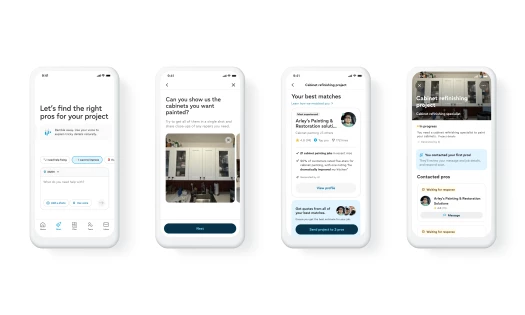

This is the divide that Liveops, a leading customer service solutions provider, set out to address when it launched LiveNexus, an AI and human orchestration platform unveiled at Customer Contact Week Orlando.

“We built LiveNexus specifically to close that gap,” says Molly Moore, COO at Liveops.

“It enables enterprises to test AI in live environments with structured oversight, keep humans intentionally in the loop, and scale only after performance proves durable under real conditions.”

In this interview with DesignRush, Moore explains why so many AI initiatives falter, what organizations misunderstand about scale, and how Liveops’ latest launch helps AI initiatives hit the ground running.

Who Is Molly Moore?

As Chief Operating Officer at Liveops, Molly Moore is a recognized thought leader at the intersection of AI, customer experience, and operational transformation. With more than two decades of executive leadership across operations, technology-driven services, and go-to-market strategy, she has guided organizations through complex modernization initiatives – balancing innovation with the operational rigor required to scale.

Molly’s career spans startups to enterprise, where she has held multiple C-level roles and led teams through AI adoption, digital transformation, workforce redesign, and successful exits. She is known for her ability to translate emerging technologies into practical, high-impact operational strategies that improve speed, quality, and customer outcomes.

Why AI Pilots Stall Before Reaching Production

Very rarely do AI pilots fail due to technical issues like model accuracy or tooling choices.

Instead, the breakdown happens when pilots are treated as proof that AI works, rather than recognizing that a pilot only proves it worked under ideal conditions.

That’s a classic case of missing the forest for the trees, and it creates the structural gaps that set AI pilots up to fail.

And these gaps tend to pop up in the same places, regardless of industry or tooling.

In Moore’s experience, three issues stand out in particular:

1. Confusing experimentation and operational readiness

For decades, speed has been the name of the game in adopting new technologies.

The problem is that running quick internal tests just isn’t the same as proving AI can survive real customers, live volume, and unpredictable edge cases.

“Too many initiatives are built to prove the technology works in isolation instead of proving it can perform consistently in production,” Moore says.

That’s because testing environments evaluate AI under predictable conditions, something contact centers cannot count on in real-world scenarios.

2. Ownership issues

According to Moore, without business ownership, AI is little more than an ornament.

“If AI isn’t embedded within the teams that own customer experience, quality, compliance, and outcomes, it becomes disconnected from operational reality,” she explains.

“Sustainable progress happens when business leaders, not just IT, are accountable for performance.”

Of course, ownership should extend beyond leadership.

When ownership remains siloed inside innovation or IT, AI is measured on technical benchmarks.

But when ownership moves into the business, it is measured by customer outcomes.

That distinction often determines whether a pilot matures into infrastructure or fades into obscurity.

3. Governance gaps

When governance is treated as an afterthought, inconsistency spreads faster than efficiency.

At scale, the absence of governance does not remain invisible. It surfaces through customer confusion, regulatory exposure, and erosion of trust.

These failures then compound quietly until advancing the pilot feels riskier than abandoning it altogether.

How Liveops Identified and Solved the AI Pilot Problem

According to Liveops research, only 17% of consumers want brands to use more AI. On the other hand, twice as many consumers want less of it.

And it’s not hard to see why.

Think back to the last time you called customer service and ended up talking to an AI agent that couldn’t grasp your concern.

Instead of helping, it sent you in circles, only for you to discover that your issue wasn’t even covered in its limited preset list of options.

Although so few consumers want brands to use more AI, Moore doesn’t see this as an outright rejection of the technology itself.

“It’s a signal that AI is being deployed without enough operational discipline and accountability,” she says.

Seeing how AI implementation has affected both organizations and the consumers they serve, Liveops designed and launched LiveNexus, a platform that combines strategy, testing, and scaled execution within a single operating model.

LiveNexus integrates AI, Liveops’ network of more than 20,000 agents, and nearly 30 years of CX data within an orchestration layer that tests, routes, and scales solutions in live environments.

Every design decision behind LiveNexus was made with intention.

Drawing from over two decades of experience, Moore understood that every major transformation fails when efficiency outruns trust.

Customers will tolerate new technology only as long as it preserves agency, clarity, and empathy.

When it does not, adoption stalls.

That’s why Liveops centered every feature around three key pillars:

1. Scale readiness over experimentation

LiveNexus assumes that success is measured after the pilot.

The platform is built for real customer behavior, live volume, compliance requirements, and business pressure.

As such, production is the baseline, not the aspiration.

2. AI and human intelligence as an integrated system

AI agents work best alongside human agents.

That’s why LiveNexus handles pattern recognition and routing.

Meanwhile, humans handle judgment and empathy when complexity increases.

3. Governance as a core capability

Trust, transparency, escalation design, monitoring, and business ownership are baseline requirements for any AI that touches customers.

This is why LiveNexus wasn’t made to be an IT side initiative.

It lives within the teams responsible for outcomes.

Reconciling AI With Human-First Service Models

Contact centers are built on empathy under pressure. Yet, AI has historically struggled to coexist with that reality.

Resistance from human agents is often framed as fear of automation. However, leadership should look at this situation from a different angle.

In most cases, AI resistance reflects concern about degraded service and loss of clarity when systems behave unpredictably.

AI didn’t replace the contact center. It redefined it. 🤖📞

What we heard from enterprise CX leaders:

✅ AI should absorb volume + surface insight

🧑‍💻 The next frontier is agent enablement

🧩 Bolt-on tools add clicks, not value

🌍 Global delivery is getting more strategic

Read…

Liveops (@Liveops), February 3, 2026

So Liveops knew it had to introduce LiveNexus in a way that directly addressed this challenge. But at the same time, it had to ensure that any AI solution would amplify the agent experience.

“We built LiveNexus around a simple reality: empathy and judgment are still AI’s weakest link,” Moore says.

“Our recent data shows more than 50% of consumers say humans deliver better service, especially when something goes wrong. That reality shaped the architecture.”

Rather than forcing AI to imitate empathy, LiveNexus reduces friction in the agent workflow.

It preserves interaction context, reduces repetitive research, accelerates clean handoffs, and signals when human judgment is required.

“Our view is straightforward. The most effective AI systems elevate human capability. They make agents more confident, more informed, and more impactful, not more invisible,” Moore explains.

Choose Discipline Over Speed

Deploy quickly. Scale broadly. Fix later.

That has long been the formula for success when it comes to technology and business.

However, Moore says that way of thinking can introduce more problems down the line.

“Experience has taught us that the greatest risk is not moving cautiously,” she says.

“The greater risk is scaling a capability before it earns customer trust and before operational performance is proven under real conditions.”

This is why Moore recommends CX leaders continue to evaluate AI beyond the pilot phase.

And even though AI will continue to improve, the deciding factor for contact centers and any other business looking to implement AI is whether they can mature alongside it.