Establishing Proper AI Governance: Key Findings

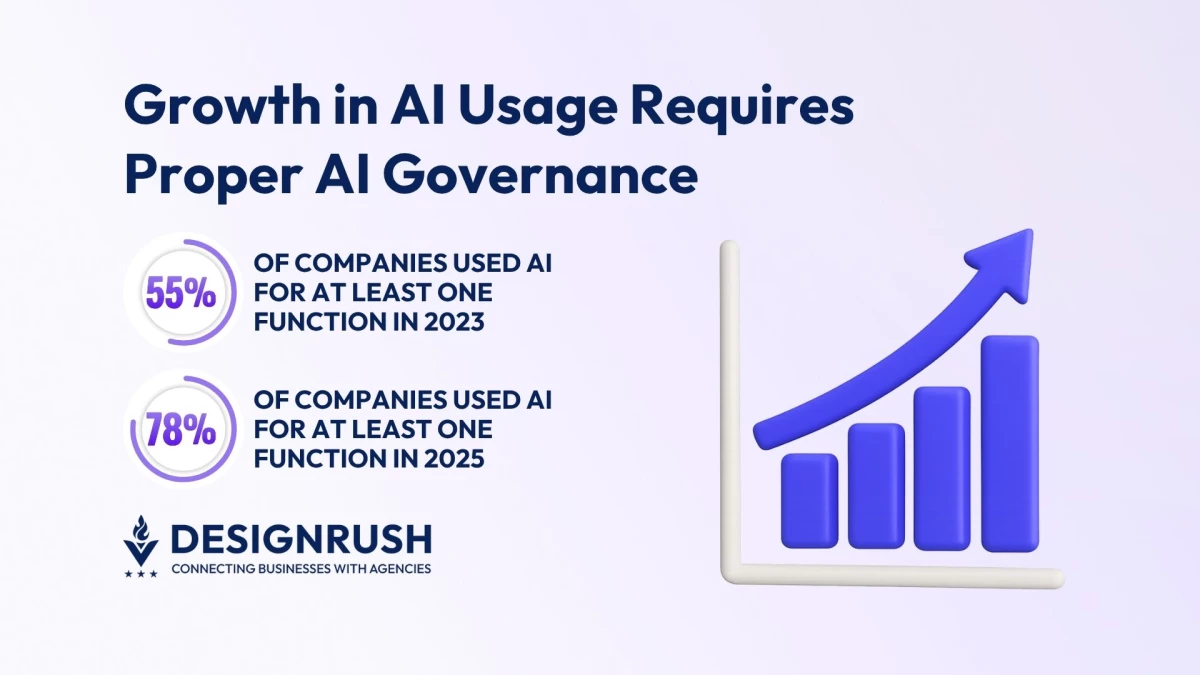

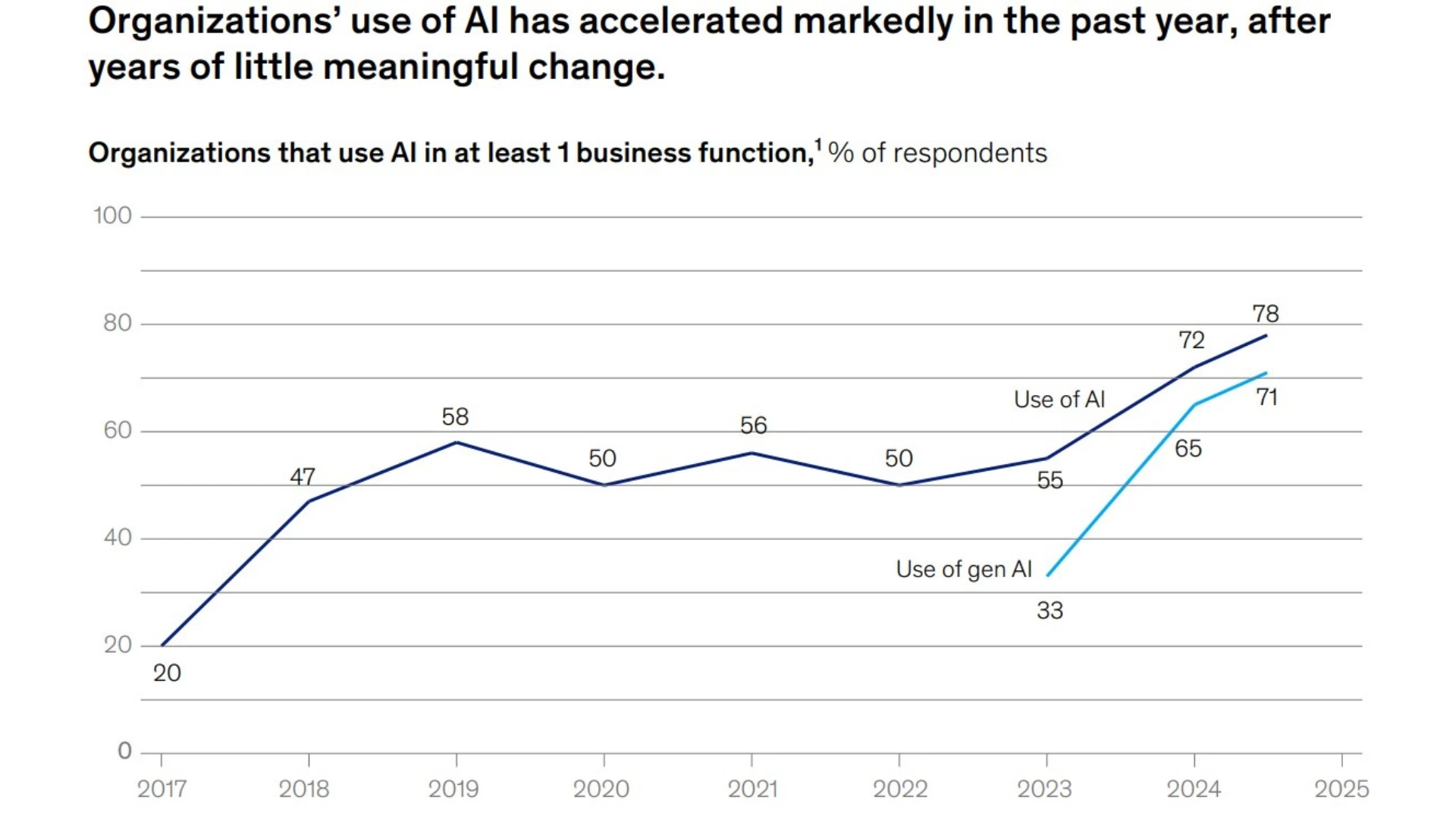

- 78% of organizations now use AI for at least one business function, up from 55% in 2023.

- Shadow AI and rushed adoption expose companies to IP leaks, bias, and security risks.

- Embed guardrails like policy-as-code and monitoring to turn governance into a growth enabler.

Artificial intelligence used to be confined to sci-fi movie scripts. Today, it’s a reality that moves at the pace of a company’s ambition.

What once took years of research and phased rollouts can now happen in just months or even weeks, pushing teams ahead of competitors and sparking innovation.

Given this, the widespread adoption of AI should surprise no one. In fact, a 2025 McKinsey survey found that 78% of organizations were using AI for at least one business function.

This is up from 72% in early 2024 and 55% in 2023.

Yet speed tends to create risk when it becomes the be-all and end-all.

Teams rush to integrate AI into customer support, product design, and analytics, but without agreed-upon rules, they risk creating a patchwork of systems that are powerful but precarious.

There’s also the risk of shadow AI, the unmonitored use of AI tools by teams or individuals. It may seem harmless at first, but it can easily lead to issues like:

- Intellectual property leaking into open systems

- Biases in untested models going unnoticed

- Security vulnerabilities spreading as more people use unsanctioned tools

The worst part here is that these problems tend to surface only after the damage has been done.

This is why proper governance is the key to balancing speed and control as AI use spreads across an organization.

“Governance has to live inside everyday workflows. The only way to keep compliance from slowing innovation is to make it automatic, invisible, and part of how teams already operate,” commented Unico Connect CEO Malay Parekh.

This all boils down to one central question: How can leaders scale AI responsibly while preserving the agility that makes it so valuable in the first place?

Embed Guardrails Directly Into Workflows

Traditional compliance models rely on static checklists and manual approvals. The problem is that AI evolves far too quickly for an approach developed in a slower era.

At the same time, the traditional approach assumes that risks always surface at predictable points and that humans will always catch the problem before it spreads.

To keep pace, proper AI governance has to become a living part of the workflow.

This means guardrails must be designed to operate in real time, inside the systems and processes where AI is actually used.

Three practices, in particular, stand out:

- Policy-as-code embeds compliance rules into the software development lifecycle. Instead of waiting for audits, violations are flagged before code goes live, keeping teams moving fast while staying within bounds.

- Role- and context-based access controls go beyond simple permissions. They ensure the right people have the right level of access at the right moment, preventing sensitive data or models from being exposed unnecessarily.

- Continuous monitoring and anomaly detection keep a pulse on AI behavior in real time. Early alerts on drift, bias, or security anomalies help organizations correct course before reputational or financial damage occurs.
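To make the first of these practices concrete, here is a minimal sketch of a policy-as-code check that could run in a build pipeline before an AI feature ships. Everything in it is an assumption made for illustration: the `ModelDeployment` fields, the three rules, and the `check_policies` helper are hypothetical and do not come from any particular tool or from the organizations discussed here.

```python
from dataclasses import dataclass


# Hypothetical description of an AI feature awaiting release.
# Field names are illustrative only.
@dataclass(frozen=True)
class ModelDeployment:
    name: str
    vendor_approved: bool
    handles_pii: bool
    bias_test_passed: bool
    owner: str


# Each policy pairs a human-readable rule with a predicate that
# returns True when the deployment complies.
POLICIES = [
    ("model must come from an approved vendor",
     lambda d: d.vendor_approved),
    ("models handling PII need a passing bias test",
     lambda d: (not d.handles_pii) or d.bias_test_passed),
    ("every deployment needs a named owner",
     lambda d: bool(d.owner.strip())),
]


def check_policies(deployment):
    """Return the descriptions of every policy the deployment violates."""
    return [rule for rule, complies in POLICIES if not complies(deployment)]
```

A pipeline step could call `check_policies` on each proposed deployment and fail the build when the returned list is not empty, so a violation is flagged before release rather than at the next audit. Keeping the rules as data also means they can be reviewed and versioned like any other code.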

When these are done well, governance transforms from a perceived “burden” into a safer and more stable accelerator of innovation.
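The continuous-monitoring practice listed above can be sketched in a similar spirit. The example below is one simple approach among many, not a recommended production design: it compares each new reading of a model metric (say, a daily accuracy score) with a rolling baseline and flags readings that fall far outside it. The `DriftMonitor` class, the window size, and the threshold are all hypothetical choices.

```python
from collections import deque
from statistics import mean, stdev


class DriftMonitor:
    """Flags a metric reading that deviates sharply from its recent baseline."""

    def __init__(self, window=30, threshold=3.0, min_history=5):
        self.history = deque(maxlen=window)  # rolling baseline of normal readings
        self.threshold = threshold           # allowed distance, in standard deviations
        self.min_history = min_history       # readings required before alerting

    def observe(self, value):
        """Record a reading; return True if it looks anomalous."""
        anomalous = False
        if len(self.history) >= self.min_history:
            baseline = mean(self.history)
            spread = stdev(self.history)
            # With zero spread the score is undefined, so this sketch
            # simply does not alert in that case.
            if spread > 0 and abs(value - baseline) / spread > self.threshold:
                anomalous = True
        if not anomalous:
            # Keep anomalies out of the baseline so they do not mask later ones.
            self.history.append(value)
        return anomalous
```

In use, a scheduled job would feed each new reading to `observe` and route a `True` result to whoever owns the model. Real deployments usually track several signals at once (input distributions, fairness metrics, access patterns), but the principle of comparing live behavior against an expected baseline is the same.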

Make Governance Your Growth Enabler

The conversation about AI governance often stops at risk avoidance. But the more interesting story is how governance expands possibilities.

A company with strong guardrails can experiment boldly, secure in the knowledge that missteps will be caught before they metastasize.

Customers, partners, and regulators read that confidence as trust, which in turn translates to competitive advantage.

That’s why executives who frame governance as a growth enabler build reputations as responsible innovators. They also position AI as a long-term engine for scalability.

After all, the true innovators don’t just break new ground. They also make sure it doesn’t cave beneath their feet when they least expect it.