AI Adoption Key Findings:

- Unstructured AI use leads to risk and inefficiency. Without clear policies, teams default to trial-and-error, exposing sensitive data and leading to fragmented workflows.

- Tools alone don’t drive value; strategic enablement does. AI only delivers impact when it’s paired with training, use case clarity, and ongoing process integration.

- AI must be treated as a long-term capability. Forward-thinking companies are embedding AI into how they work with structure, oversight, and measurable outcomes.

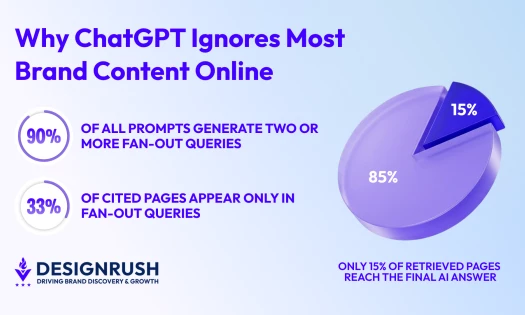

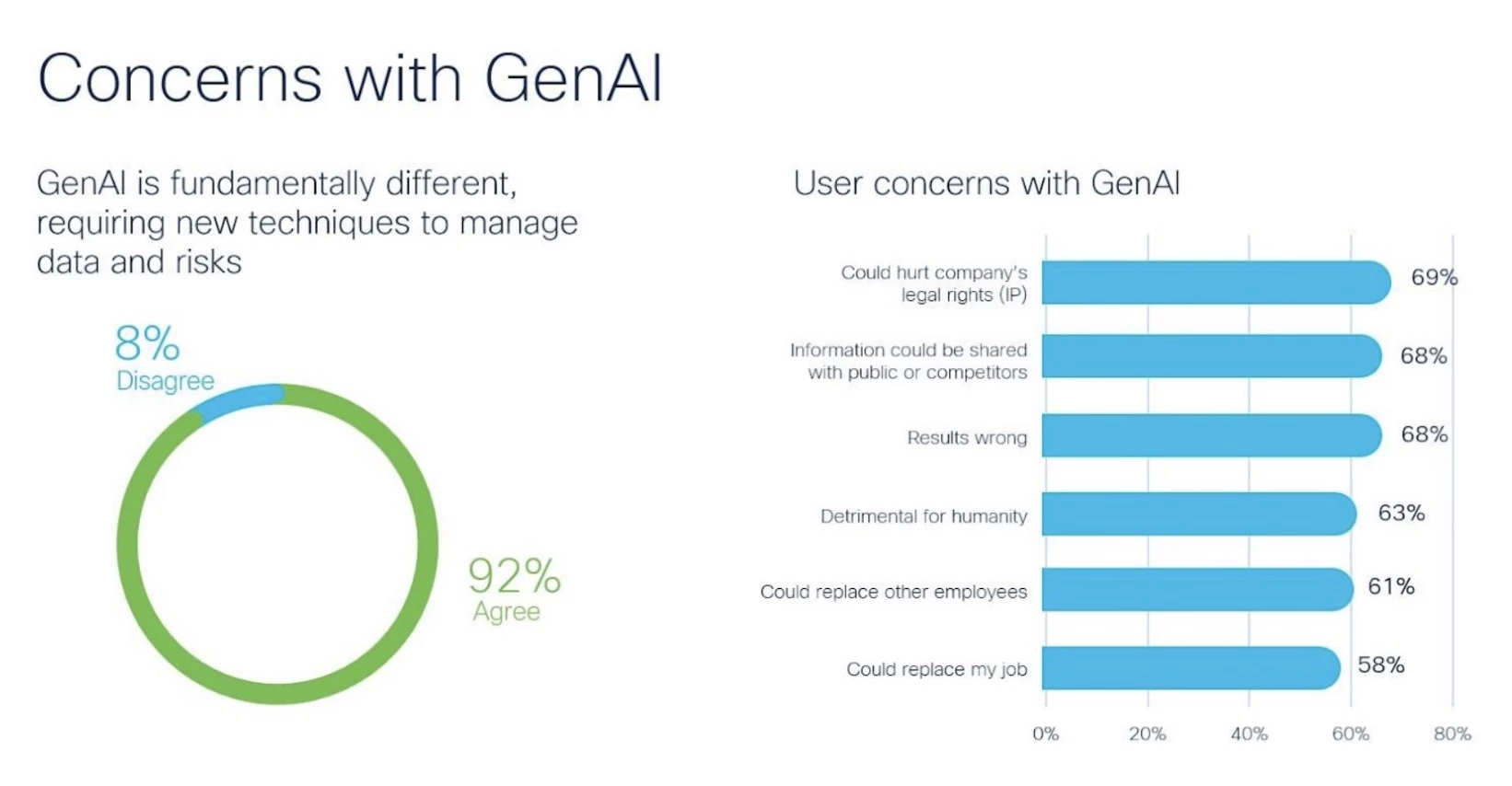

48% of organizations admit to entering non-public company data into generative AI tools, according to Cisco’s 2024 Data Privacy Benchmark Study.

More often than not, this happens because formal policies aren’t in place.

Unfortunately, that kind of unstructured use is becoming the norm.

As teams experiment with AI to boost productivity, many companies are exposing themselves to unnecessary risks, from data leaks and compliance issues to inconsistent workflows and missed ROI.

Editor’s Note: This is a sponsored article created in partnership with Infinum.

Forward-looking organizations are starting to respond by building internal frameworks that bring structure, oversight, and strategic alignment to AI use.

One example is Infinum, a global digital consultancy that chose structure over restriction.

Instead of banning AI tools, it introduced a company-wide enablement framework to guide safe, strategic adoption across every department.

This led to more confident teams, faster delivery, and fewer risks, all without sacrificing control.

It’s the kind of proactive approach more B2B leaders will need to take as AI continues to shape how work gets done, with or without clear internal policies.

To get there, B2B leaders should avoid five common AI implementation mistakes. Each of the practices below addresses one of them.

1. Establish a Company-Wide AI Framework Before Adoption Spreads

Most AI adoption starts at the individual level. Designers, developers, marketers, and operations teams quietly test tools like ChatGPT or Midjourney to save time or boost creativity.

At first, it seems harmless. But what starts as quiet experimentation can quickly lead to inconsistent outputs, duplicated efforts, and unintentional data exposure.

Cisco reports that 27% of organizations have temporarily banned generative AI tools altogether due to security, privacy, and compliance concerns.

That kind of blanket restriction is often a response to one thing: the absence of structure.

Unregulated use introduces real business risks — from IP leakage and compliance violations to fragmented workflows that undermine long-term capability building.

Treat AI like any other enterprise-wide shift: with structure, governance, and enablement. That means:

- Defining approved tools and licensing them securely

- Creating policies for ethical, responsible use

- Appointing internal leads or champions to support adoption

- Sharing standardized resources (e.g., use case guides, prompt templates)

- Maintaining oversight while encouraging experimentation

2. Invest in Enablement — Don’t Expect Immediate ROI From AI

Most leaders hope AI will reduce costs or improve margins right away. But the early stages of adoption often do the opposite.

Teams experiment, learn new tools, and adjust workflows, all of which consume time and resources before any savings appear.

Treating AI as a quick-win, cost-saving measure sets unrealistic expectations. It also discourages the foundational work required to build sustainable AI capability.

If leadership pulls back too early, teams lose momentum and the organization misses the long-term gains.

Approach AI enablement like a long-term investment in organizational maturity by:

- Budgeting for tools, education, and support resources

- Setting clear expectations: learning now, impact later

- Tracking progress with internal benchmarks (e.g., adoption rates, time saved, new workflows created)

- Focusing on capability-building over cost-cutting
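The internal benchmarks mentioned above (adoption rates, time saved, new workflows created) can be tracked with very little tooling. The following is a minimal sketch, not a prescribed method: the `TeamSnapshot` fields, the team names, and the sample numbers are all hypothetical and would come from whatever reporting a company already has.

```python
from dataclasses import dataclass


@dataclass
class TeamSnapshot:
    """One team's self-reported AI usage for a reporting period (hypothetical fields)."""
    team: str
    headcount: int
    active_ai_users: int   # people who used an approved tool this period
    hours_saved: float     # estimated hours saved, as reported by the team
    new_workflows: int     # workflows created or redesigned around AI


def adoption_rate(snapshot: TeamSnapshot) -> float:
    """Share of the team actively using approved AI tools (0.0 to 1.0)."""
    if snapshot.headcount == 0:
        return 0.0
    return snapshot.active_ai_users / snapshot.headcount


def summarize(snapshots: list[TeamSnapshot]) -> dict[str, float]:
    """Roll team snapshots up into company-wide benchmark figures."""
    total_headcount = sum(s.headcount for s in snapshots)
    total_users = sum(s.active_ai_users for s in snapshots)
    return {
        "adoption_rate": total_users / total_headcount if total_headcount else 0.0,
        "hours_saved": sum(s.hours_saved for s in snapshots),
        "new_workflows": float(sum(s.new_workflows for s in snapshots)),
    }


# Illustrative data only; these numbers are made up for the example.
snapshots = [
    TeamSnapshot("design", headcount=10, active_ai_users=4, hours_saved=12.5, new_workflows=1),
    TeamSnapshot("engineering", headcount=30, active_ai_users=21, hours_saved=80.0, new_workflows=3),
]
report = summarize(snapshots)
print(report)
```

Comparing the same figures period over period is what makes "learning now, impact later" visible to leadership, even while costs are still rising.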

“We believe that tomorrow’s clients won’t be asking if their agency uses AI — they’ll demand it. They’ll want partners who are ready for the future of work, not stuck in outdated processes,” said Nikola Kapraljević, CEO at Infinum.

3. Prioritize Use Cases Over Tools

When AI hits the radar, it’s tempting to start with the tools. Leaders invest in licenses for the latest platforms and assume that access alone will drive results.

But tools don’t create value on their own.

Without clear use cases, teams either underuse the tools or apply them inconsistently, leading to wasted spend, unclear ROI, and frustration.

A tools-first approach often leads to surface-level adoption. People try AI once or twice, get generic outputs, and abandon it because they don’t know how to use it meaningfully.

Start by identifying real, repeatable problems AI can solve, then match the right tool to the task.

- Ask each team: Where are the bottlenecks? What’s repetitive? What slows you down?

- Build use case libraries with examples like code scaffolding, content summarization, visual ideation, or QA test generation

- Provide role-specific prompt templates to help teams get meaningful results faster

- Track outcomes by use case (e.g., time saved, quality improved, output volume increased)
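A use case library with role-specific prompt templates and per-use-case outcome tracking could look roughly like the sketch below. Everything in it is an assumption made for illustration: the use case names, the roles, the template wording, and the choice of "minutes saved" as the tracked outcome. It uses only Python's standard `string.Template`.

```python
from collections import defaultdict
from dataclasses import dataclass
from string import Template


@dataclass(frozen=True)
class UseCase:
    """A documented, repeatable problem paired with a prompt template (hypothetical)."""
    name: str
    role: str
    prompt: Template


# Hypothetical library entries; real ones would come from team interviews.
LIBRARY = {
    "content_summarization": UseCase(
        name="content_summarization",
        role="marketing",
        prompt=Template(
            "Summarize the following $doc_type in $max_words words or fewer "
            "for $audience:\n$text"
        ),
    ),
    "qa_test_generation": UseCase(
        name="qa_test_generation",
        role="engineering",
        prompt=Template(
            "Write $framework test cases for this function, covering edge cases:\n$code"
        ),
    ),
}

# Minutes saved per use case, appended each time a team reports a result.
outcomes: dict[str, list[float]] = defaultdict(list)


def render_prompt(use_case: str, **fields: object) -> str:
    """Fill in a template; raises KeyError if the use case or a field is missing."""
    return LIBRARY[use_case].prompt.substitute(**fields)


def record_outcome(use_case: str, minutes_saved: float) -> None:
    """Log an outcome against a known use case so results stay comparable."""
    if use_case not in LIBRARY:
        raise KeyError(f"unknown use case: {use_case}")
    outcomes[use_case].append(minutes_saved)


def total_minutes_saved() -> dict[str, float]:
    """Total reported minutes saved, grouped by use case."""
    return {name: sum(values) for name, values in outcomes.items()}


prompt = render_prompt(
    "content_summarization",
    doc_type="report",
    max_words=100,
    audience="executives",
    text="Q3 results ...",
)
record_outcome("content_summarization", minutes_saved=20)
record_outcome("content_summarization", minutes_saved=15)
print(prompt)
print(total_minutes_saved())
```

Keying both the templates and the outcomes by use case name keeps the focus on the problem being solved, so a tool can be swapped out without losing the history of what worked.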

4. Set Guardrails & Don’t Rely on Individual Judgment

In the absence of clear policies, most employees will default to what feels helpful and harmless.

That might mean using AI to write emails, summarize documents, or even explore client-specific work.

But without guidance, it’s easy to cross lines that put the business at risk.

When individuals are left to decide what’s safe, companies risk exposing sensitive data, violating client agreements, or unknowingly breaching regulatory requirements.

Even well-meaning employees can make costly mistakes if the rules aren’t clear.

Cisco reports that 91% of businesses acknowledge they need to do more to reassure customers that their data is used appropriately in AI systems.

That’s not just a legal risk. It’s a brand trust issue.

To avoid this, create simple, accessible policies that define:

- What types of data are allowed (and prohibited) in AI tools

- How AI-generated outputs should be reviewed or disclosed

- When human oversight is required

- The difference between experimentation and production use

Make these guardrails visible in onboarding, internal wikis, and toolkits. And regularly revisit them as tools evolve.
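Some teams also encode part of such a policy in a lightweight pre-check that runs before text is sent to an AI tool. The sketch below is one hypothetical way to do that. The data classes, the set of classes allowed in AI tools, the "experiment" versus "production" stages, and the email pattern are all assumptions for illustration, not rules taken from Infinum or Cisco, and a crude pattern match is no substitute for proper data loss prevention tooling or human review.

```python
import re
from enum import Enum


class DataClass(Enum):
    """Hypothetical data classification labels."""
    PUBLIC = "public"
    INTERNAL = "internal"
    CLIENT_CONFIDENTIAL = "client_confidential"
    PERSONAL = "personal"


# Assumed policy: only these classes may be entered into approved AI tools.
ALLOWED_IN_AI_TOOLS = {DataClass.PUBLIC, DataClass.INTERNAL}

# Assumed policy: output used in production always needs a human reviewer.
STAGES_REQUIRING_REVIEW = {"production"}

# Rough heuristic for spotting email addresses; it will miss many cases.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def check_request(text: str, data_class: DataClass) -> list[str]:
    """Return a list of policy problems; an empty list means no issue was found."""
    problems = []
    if data_class not in ALLOWED_IN_AI_TOOLS:
        problems.append(f"data class '{data_class.value}' is not allowed in AI tools")
    if EMAIL_PATTERN.search(text):
        problems.append("text appears to contain an email address")
    return problems


def needs_human_review(stage: str) -> bool:
    """Experimentation can be unreviewed; production use cannot (assumed rule)."""
    return stage in STAGES_REQUIRING_REVIEW


print(check_request("Summarize our public blog post", DataClass.PUBLIC))
print(check_request("Reply to jane.doe@example.com", DataClass.CLIENT_CONFIDENTIAL))
print(needs_human_review("production"))
```

Returning a list of problems rather than a single yes or no lets the tool explain which rule was hit, which reinforces the written policy instead of just blocking people.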

5. Make AI a Capability, Not a Checkbox

As AI becomes more mainstream, many companies rush to label themselves “AI-enabled.”

They publish a press release, run a few internal workshops, or add a tool to their stack and consider the job done.

But surface-level adoption won’t deliver long-term value.

AI isn’t a one-time integration. It’s an ongoing shift in how teams work, think, and deliver.

“Our people are becoming conductors of AI workflows, orchestrating complex tasks with greater precision, creativity, and quality than ever before. It’s about amplifying our impact,” said Kapraljević.

Without continuous learning, process alignment, and internal knowledge sharing, AI stays siloed.

Teams eventually revert to old habits, adoption stalls, and leadership misses out on the compounding benefits of real capability.

Treat AI enablement as a long-term, evolving initiative:

- Build feedback loops to track what’s working (and what’s not)

- Encourage teams to share workflows, prompts, and outcomes

- Update internal playbooks regularly as tools improve

- Connect AI use to measurable outcomes: time saved, quality increased, delivery accelerated

Build the Capability Now or Fall Behind Later

AI is a fundamental shift in how modern organizations operate, and the companies that recognize this are already pulling ahead.

Firms like Infinum show that the real differentiator is how intentionally AI is embedded into daily workflows, supported by structure, strategy, and shared accountability.

Avoiding the five mistakes above helps organizations move beyond experimentation into real capability.

That means safer adoption, higher output quality, faster execution, and stronger alignment between people, processes, and technology.

Leaders who act now will build a foundation for long-term advantage, while others are still figuring out where to start.