Key Takeaways:

- Software development company WEZOM integrated AIO Tests into Jira, a bug-tracking tool, and cut test case creation time by 50%.

- AIO Tests and similar AI-driven tools boost efficiency by handling repetitive tasks and allowing more time to focus on complex projects.

- While AI tools reduce human error, they don’t replace the human expertise needed for exploratory testing and assessing user experience.

Gartner predicts that 75% of enterprise software engineers will use AI code assistants by 2028, a huge leap from less than 10% in early 2023.

Simply put, the effects of AI are inevitable, explains Daryl Plummer, a distinguished VP Analyst, Chief of Research, and Gartner Fellow.

It’s easy to see why. When software development company WEZOM integrated AIO Tests into Jira, a bug-tracking tool, the results spoke for themselves:

- Test case creation time was reduced by 50%: from hours to minutes

- More comprehensive coverage: AI identifies missing test scenarios

- Less human error: AI ensures consistency across test cases

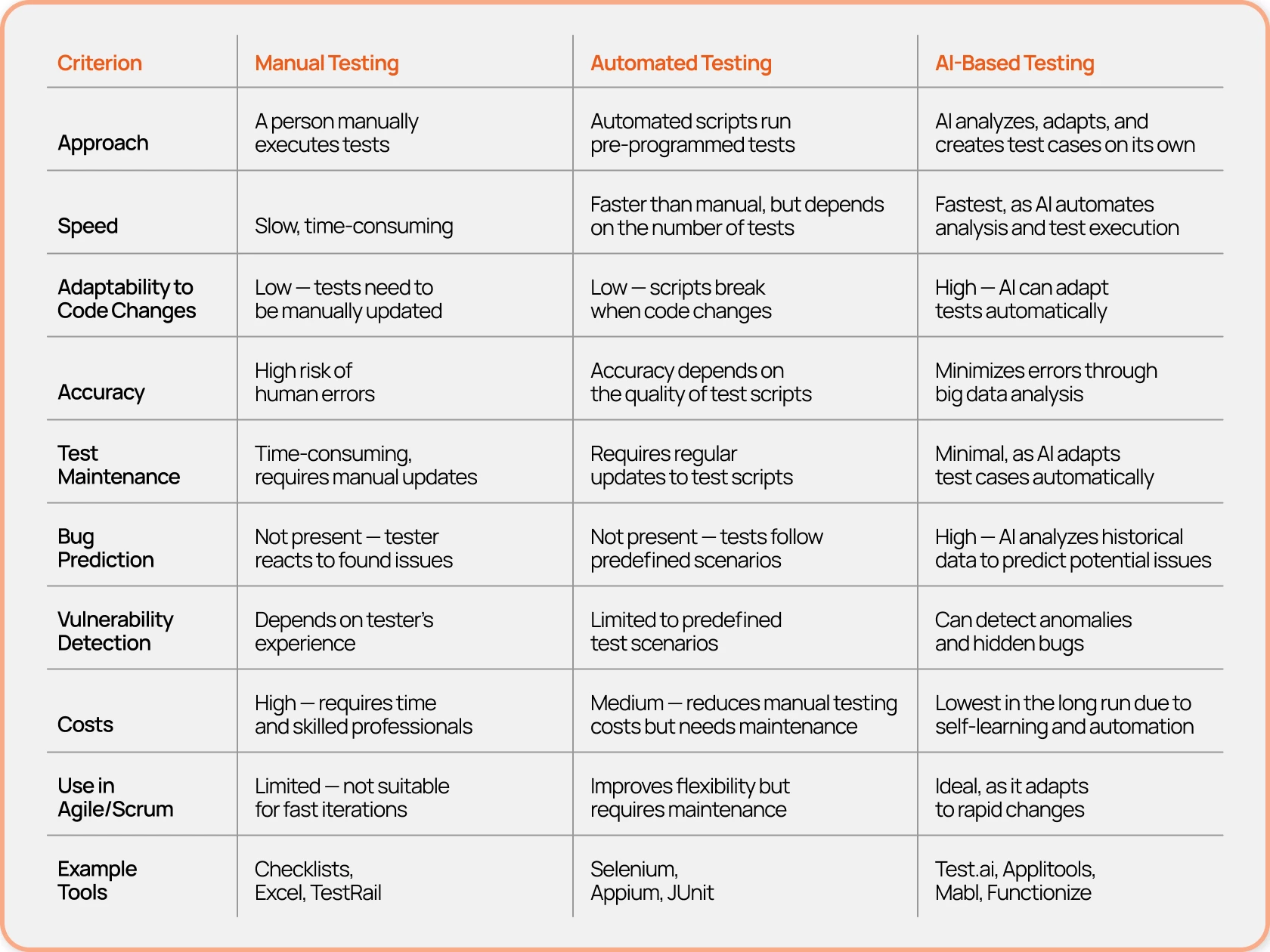

AI in software testing uses machine learning and data to run and improve tests with less human effort.

What differentiates it from other automated tools is that it can learn and adapt, analyze data, predict vulnerabilities, and independently create test scenarios. It can also process unstructured data like logs or user actions, and detect patterns that might otherwise be overlooked.
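To make the idea of spotting patterns in logs more concrete, here is a minimal Python sketch. It is not how AIO Tests or any specific AI tool works: it uses a plain counting heuristic rather than machine learning, and the log lines, `to_template`, and `rare_templates` are hypothetical names invented for illustration. It groups log lines that differ only in numbers and flags the groups that occur rarely, which is one simple way an unusual event can stand out.

```python
import re
from collections import Counter


def to_template(line: str) -> str:
    """Replace every run of digits with <N> so similar lines share one template."""
    return re.sub(r"\d+", "<N>", line.strip())


def rare_templates(lines: list[str], max_count: int = 1) -> list[str]:
    """Return templates seen at most max_count times, sorted alphabetically."""
    counts = Counter(to_template(line) for line in lines)
    return sorted(t for t, n in counts.items() if n <= max_count)


# Hypothetical log lines, made up for this example.
logs = [
    "user 17 added item 3 to cart",
    "user 42 added item 9 to cart",
    "user 8 payment failed with code 502",
]

print(rare_templates(logs))
```

A real AI-driven tool would likely learn what counts as unusual from historical data instead of relying on a fixed threshold like `max_count`.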

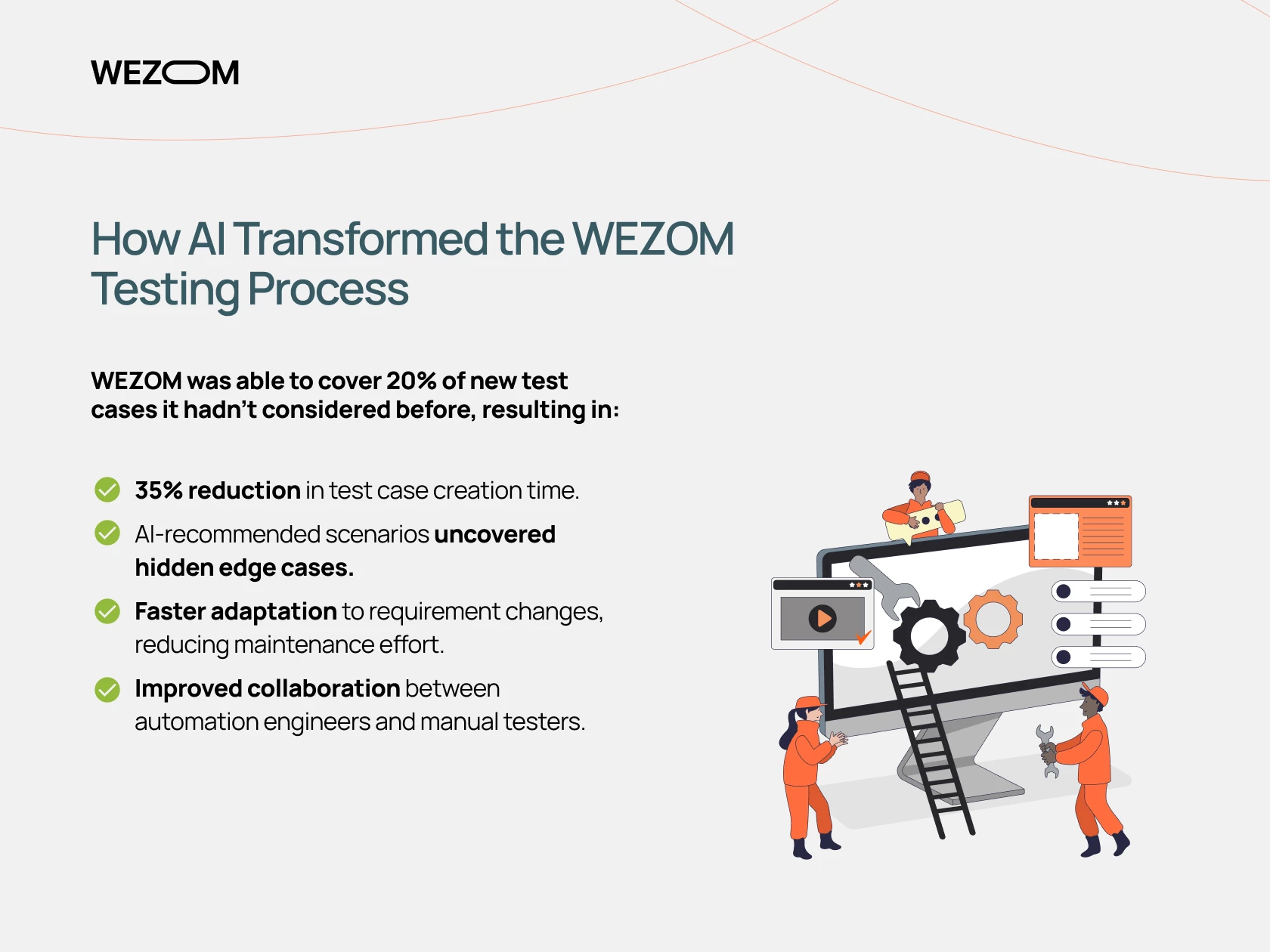

How AI Transformed the WEZOM Testing Process

WEZOM set out to speed up testing for a large web platform, improve test accuracy, and cut maintenance costs. Manual testing was too slow, and automated tests needed constant updates because requirements changed frequently.

To do this, the agency integrated AIO Tests: an AI-driven solution that analyzes requirements, automatically generates test cases, and spots gaps in test coverage.
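As a rough illustration of what spotting gaps in test coverage means, the Python sketch below compares a list of requirement IDs against the requirements each test case claims to verify. It is a simplified assumption, not the AIO Tests API or its actual method: the `Scenario` class, the `find_coverage_gaps` function, and the `REQ-` identifiers are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Scenario:
    """A test case and the requirement IDs it claims to verify (hypothetical model)."""
    name: str
    covers: set[str] = field(default_factory=set)


def find_coverage_gaps(requirement_ids: set[str], scenarios: list[Scenario]) -> set[str]:
    """Return the requirement IDs that no scenario references."""
    covered: set[str] = set()
    for scenario in scenarios:
        covered |= scenario.covers
    return requirement_ids - covered


# Made-up requirements and test cases for this example.
requirements = {"REQ-1", "REQ-2", "REQ-3"}
suite = [
    Scenario("login succeeds with valid credentials", {"REQ-1"}),
    Scenario("login fails with wrong password", {"REQ-1", "REQ-2"}),
]

print(sorted(find_coverage_gaps(requirements, suite)))
```

An AI-driven tool goes further by reading the requirement text itself and proposing new scenarios, but the underlying question is the same: which requirements have no test pointing at them?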

The results? Testers could focus on bug analysis instead of test creation, maintenance time dropped, and product quality improved.

With AIO Tests, WEZOM covered 20% of new test cases it hadn’t considered before. The team also reported:

- 35% reduction in test case creation time

- AI-recommended scenarios uncovered hidden edge cases

- Faster adaptation to changing requirements, reducing maintenance effort

- Improved collaboration between automation engineers and manual testers

Despite the persistent misconception that AI will replace test engineers, WEZOM sees AI as a tool, not a substitute for human expertise.

AI Eases Testing So Humans Can Focus

For the agency, AI takes care of repetitive tasks like creating and maintaining test cases, so testers can focus on exploring, validating user experience, and assessing risk.

This teamwork between AI and testers improves coverage and accuracy, boosting productivity without losing the value of human expertise.

While AI handles routine tasks such as regression testing, log analysis, or bug prediction, manual verification is still required for exploratory testing, user experience testing, and non-standard scenarios.

There are still moments when manual testing is crucial, especially when judging how easy and intuitive an app feels to use. AI just can't capture the nuances of human emotions and preferences.

AI allows teams to focus on innovation and user experience, just as it boosts eCommerce performance by simplifying operations and building stronger customer relationships.