Apple On-Device AI: Key Findings

- Apple’s on-device AI unlocks new possibilities for privacy-first, offline-ready, and cost-efficient mobile experiences.

- Product teams can build AI features natively using Apple’s Foundation Models — no server dependencies, no cloud latency, no ongoing inference costs.

- This shift reduces complexity and accelerates development by making generative AI feel like a built-in part of the iOS ecosystem.

Over 80% of enterprises will have used generative AI APIs or deployed generative AI-enabled applications by 2026, according to a 2023 report by Gartner.

But as adoption accelerates, so do the challenges.

Rising inference costs, privacy concerns, and dependence on cloud infrastructure continue to limit how and where AI is integrated into digital products.

That’s what makes Apple’s latest move at WWDC 2025 especially relevant for developers and product teams.

Editor’s Note: This is a sponsored article created in partnership with Infinum.

While the spotlight remained on headline-grabbing cloud models, Apple quietly introduced something with long-term impact: a fully on-device, privacy-first AI framework embedded directly into iOS.

The new Foundation Models Framework allows developers to build generative AI features that run entirely on iPhones and iPads.

That means no server round-trips, no internet dependency, and no external data sharing.

Engineering teams at mobile-focused agencies like Infinum see this shift as a foundational change.

With AI becoming a native part of the Apple ecosystem, product leaders now have a new path to deliver faster, more secure, and cost-effective AI experiences.

Here’s what that means in practice, along with three strategies teams can use to take advantage:

AI Strategy #1: Treat Apple’s Foundation Models as a core system feature

Apple’s Foundation Models Framework is a strategic shift. By integrating a ~3 billion-parameter generative model directly into iOS and running it fully on-device, Apple is positioning generative AI as a native system capability.

That changes how teams should approach AI development.

Instead of treating AI as a separate, cloud-powered layer, developers can now build AI into their apps as a first-class, system-level feature.

With native Swift and Xcode integration, teams can generate structured outputs, trigger app functions through tool-calling, and handle content safety, all without external infrastructure.

This lowers the barrier to entry, cuts down complexity, and aligns AI features more tightly with iOS design patterns and performance expectations.

“What Apple’s doing isn’t just adding another model — they’re turning generative AI into a native system capability. That radically lowers the barrier to building fast, reliable AI features without worrying about infrastructure, latency, or privacy,” said Filip Gulan, head of web & mobile at Infinum.

For product teams, that means faster implementation, more stable performance, and fewer long-term maintenance risks. It’s not just about adding AI — it’s about embedding it like any other iOS-native capability.

AI Strategy #2: Prioritize use cases where local AI solves privacy, latency, or connectivity limitations

One of the most important advantages of Apple’s on-device AI is that data never leaves the user’s device.

For product teams operating in regulated industries, this unlocks new possibilities for building intelligent features while keeping sensitive data out of third-party infrastructure, which can simplify compliance reviews.

Because the Foundation Models run locally, teams can now offer AI-powered capabilities that are:

- Private by design — no external servers involved

- Instantly responsive — with no network latency

- Fully offline — functional even without an internet connection

This matters more than ever as apps become increasingly personalized and operate in environments where privacy, speed, or network access can’t be compromised.

Practical examples include:

- Summarizing patient health records directly on a clinician’s iPad

- Generating insights from financial documents without cloud processing

- Auto-summarizing emails, tickets, or meetings for on-the-go professionals

- Offering travel, fitness, or productivity assistance in places with no signal

For teams building in regulated or infrastructure-constrained contexts, Apple’s model opens doors that traditional cloud-based AI can’t, enabling faster, safer, and more reliable experiences.

AI Strategy #3: Replace variable cloud inference costs with fixed-cost, on-device deployment

One of the less visible but deeply impactful benefits of Apple’s Foundation Models is how they fundamentally shift the economics of AI product development.

With traditional cloud-based LLMs, teams pay per request or per token.

That means every user interaction comes with an incremental cost, which can quickly balloon as usage scales, especially for AI features like summarization, personalization, or conversational UI.

This makes it risky to roll out AI broadly, particularly for apps with large user bases or high engagement.

By contrast, Apple’s on-device models run locally. There are no API fees, no hosting costs, and no per-user billing. The model ships as part of the operating system rather than being bundled with each app, so there is no separate model for teams to download or host.
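
To make the contrast concrete, here is a minimal back-of-the-envelope sketch in Python. Every figure in it (the per-token price, tokens per request, and monthly usage) is a hypothetical placeholder chosen for illustration, not real pricing from any provider, and the function names are invented for this example. It only shows the shape of the argument: a pay-per-token bill grows linearly with usage, while on-device inference adds no per-request charge.

```python
def monthly_cloud_cost(users, requests_per_user, tokens_per_request, price_per_million_tokens):
    """Monthly bill for a pay-per-token cloud model (linear in usage)."""
    total_tokens = users * requests_per_user * tokens_per_request
    return total_tokens / 1_000_000 * price_per_million_tokens


def monthly_on_device_cost(users, requests_per_user):
    """On-device inference carries no per-request or per-token fee."""
    return 0.0


# Hypothetical placeholder figures, not real provider pricing.
PRICE_PER_MILLION_TOKENS = 2.0  # USD
TOKENS_PER_REQUEST = 1_500      # prompt plus response
REQUESTS_PER_USER = 60          # per month

for users in (10_000, 100_000, 1_000_000):
    cloud = monthly_cloud_cost(users, REQUESTS_PER_USER, TOKENS_PER_REQUEST, PRICE_PER_MILLION_TOKENS)
    device = monthly_on_device_cost(users, REQUESTS_PER_USER)
    print(f"{users:>9,} users: cloud ${cloud:>10,.2f}/month, on-device ${device:,.2f}/month")
```

Under these placeholder assumptions, the cloud bill rises from $1,800 to $180,000 a month as the user base grows from 10,000 to 1,000,000, while the on-device inference fee stays at zero. The sketch covers inference fees only; engineering time and device constraints are outside its scope.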

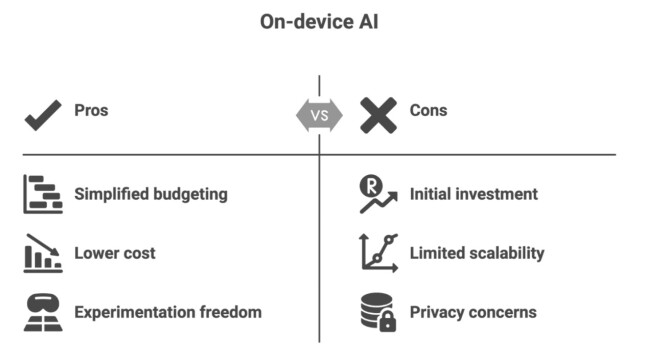

For product teams, this removes a major barrier to scale and experimentation. On-device AI enables:

- Simplified budgeting, with no surprise usage-based pricing

- Lower total cost of ownership, especially for frequently used features

- More freedom to experiment, since tests and rollouts don’t trigger new costs

That’s why Apple’s approach is so important: it allows teams to build and ship AI features that are cost-stable from day one — something cloud-based models still struggle to offer.

“For teams in regulated or cost-sensitive markets, on-device AI is a game changer. You can now ship intelligent, private-first features at scale — without stacking up API bills or triggering compliance reviews,” said Gulan.

Why Product Teams Should Start Building With On-Device AI Now

Apple’s Foundation Models aren’t the biggest or most flexible on the market, and they’re not trying to be. Instead, they mark a practical turning point for mobile product development.

By baking generative AI directly into the OS, Apple has created a path to:

- Build intelligent features that work offline

- Deliver privacy-first experiences by default

- Avoid scaling costs tied to cloud infrastructure

- Launch AI features faster, with native tooling

For product teams, this changes the equation. You no longer need a research lab, a massive backend stack, or a six-figure inference budget to build meaningful AI capabilities into your app.

Agencies like Infinum are already helping teams take advantage of this shift, from identifying high-impact use cases to rapidly prototyping on-device AI features that are fast, private, and cost-efficient.

The smartest move now? Start prototyping.

Pick one high-impact use case — like summarization or tool-calling — and test it with Apple’s new framework. Because soon, on-device AI won’t be a differentiator. It’ll be table stakes.