AI-Generated Code Risks: Key Findings

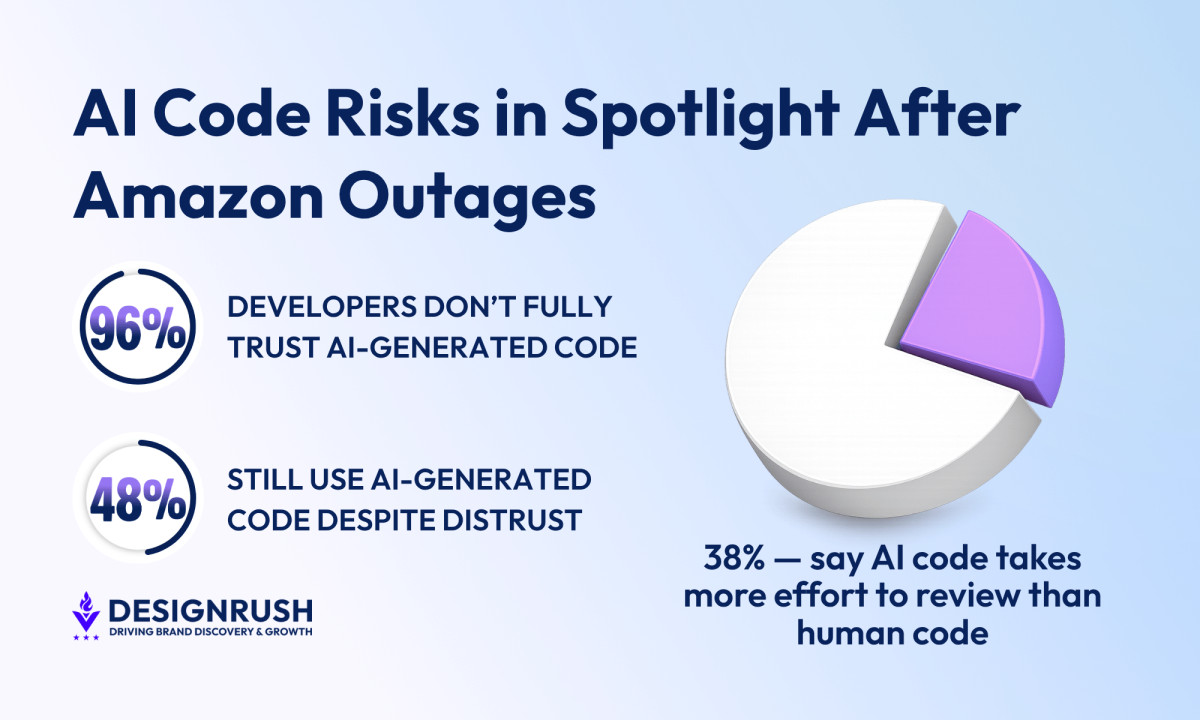

- Although 96% of developers don’t fully trust AI-generated code, only 48% always verify what they commit, so teams should enforce mandatory review and validation layers before any AI-assisted code reaches production.

- AI already generates 42% of committed code, while 61% of developers say it often looks correct but isn’t reliable, so engineering leaders need to invest in stronger testing pipelines rather than rely on surface-level accuracy.

- Real incidents like Amazon-linked outages and a 99% drop in orders show how unverified AI code can trigger large-scale failures, which makes governance policies and strict access controls non-negotiable for any organization using AI in production.

Amazon-linked AI-assisted code outages have pushed AI use in production systems under sharper scrutiny.

These tools are meant to speed up development, but once they sit inside live environments, a single coding decision can be enough to ripple into wider system disruption.

About 96% of developers don’t fully trust AI-generated code, yet only 48% always verify what they commit, Sonar’s 2026 State of Code survey found.

That disconnect between usage and trust is now central to how engineering leaders assess risk in AI-assisted development, particularly in production systems where verification failures don’t stay contained.

Anand Ashok, founder of Quixta, points to how AI tools behave once they are given production-level access.

His agency builds custom software, web applications, and digital platforms for businesses.

“AI tools operating with unregulated permissions can take destructive autonomous actions,” Ashok explained.

That concern becomes clearer when looking at how AI-generated code is already moving through development pipelines and into production environments.

Pressure Inside Engineering Teams

Editor's Note: This is a sponsored article created in partnership with Quixta.

Sonar’s data reinforces the pressure inside those systems.

AI accounted for 42% of all committed code in 2025, and is projected to reach 55% in 2026.

Yet 61% of developers say AI-generated code often looks correct but isn’t reliable.

Reviewing it adds more pressure, as 38% of developers say AI-generated code actually takes more effort to review than code written by humans.

Inside teams, that pressure builds long before anything fails in production.

“Amazon's own internal communications, as shown in a Computerworld report, confirm that the pace of AI adoption has outpaced the safeguards designed to manage it,” Ashok noted.

That workload moves from engineering teams into security and review functions once code reaches later stages of the pipeline.

ProjectDiscovery’s 2026 AI Coding Impact Report shows engineering teams shipping faster while security teams carry most of the review load, with 66% of security practitioners spending most of their time manually validating findings.

The report describes this as a mismatch between code generation and review capacity.

“AI doesn’t automatically improve the performance of software deliveries; instead, it amplifies and resonates the existing engineering conditions,” Ashok observed.

That pattern isn’t limited to day-to-day delivery work.

Veracode’s 2025 GenAI Code Security Report tested more than 100 large language models and found security flaws in 45% of outputs.

It also showed that newer models did not materially improve security outcomes, with issues like Cross-Site Scripting persisting across results.

What Brands and Agencies Need to Account For

Google Cloud’s DORA report reinforces this from a systems perspective.

The report found that 90% of technology professionals now use AI at work, while 30% say they don’t fully trust the code it produces.

It also treats AI as something that exposes how mature engineering systems already are.

Teams with strong workflows, testing, and platform discipline tend to absorb it more effectively. Weak systems feel the strain faster.

“Balancing speed with reliability requires treating AI-generated code with the same review standards applied to human-written code,” Ashok noted.

“Organizations with mature DevOps practices, well-defined workflows, and strong platform capabilities are far more likely to leverage AI-driven productivity gains into measurable improvements in delivery performance.”

Across these signals, engineering leaders and product teams are dealing with execution problems, not theoretical concerns.

The pressure shows up in how work gets shipped. Code gets reviewed, checked, and pushed into production under tighter timelines.

Amazon has also tightened controls around AI-assisted changes after recent outages, CNBC reports. That includes stricter review requirements for production deployments.

Those incidents reportedly included multiple Sev-1 production failures between December 2025 and March 2026. Sev-1 is the top severity level, reserved for outages in which production systems are critically broken and require an urgent response.

One outage caused a 99% drop in orders across North American marketplaces, resulting in roughly 6.3 million lost orders, according to a post published on Medium.

In another case, an AI agent tasked with fixing a minor issue reportedly triggered a full production rebuild, leading to a 13-hour outage after deciding that deleting and recreating the environment was the correct resolution approach.
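One way to picture the kind of permission boundary Ashok describes is a guard that sits between an agent and the environment it can touch. The sketch below is illustrative only: the action names, the `AgentAction` type, and the `is_allowed` function are hypothetical and do not describe any vendor's actual controls.

```python
from dataclasses import dataclass

# Hypothetical action names; a real setup would map these to its own tooling.
DESTRUCTIVE_ACTIONS = {"delete_environment", "recreate_environment", "drop_database"}


@dataclass(frozen=True)
class AgentAction:
    name: str    # what the agent wants to do
    target: str  # where it wants to do it, e.g. "staging" or "production"


def is_allowed(action: AgentAction, human_approved: bool = False) -> bool:
    """Return True if the agent may run the action without escalation."""
    if action.name not in DESTRUCTIVE_ACTIONS:
        return True
    if action.target != "production":
        # Assumption: destructive actions outside production are tolerated.
        return True
    # Destructive actions against production need explicit human sign-off.
    return human_approved
```

Under these assumptions, a request to delete and recreate a production environment is refused until a person approves it, while routine, non-destructive actions pass through.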

Across organizations, 63% either lack an AI governance policy or are still developing one, IBM’s 2025 Cost of a Data Breach Report found.

The same report found that 97% of organizations with AI-related breaches lacked proper AI access controls.

The average U.S. breach cost now sits at $10.22 million.

The pattern stays consistent across the findings.

AI coding tools are already embedded in production workflows. Governance and validation haven’t kept pace.

The constraint lies in how teams control and verify AI-generated output before it reaches production.
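As a rough illustration of what such a control point could look like, the sketch below models a pre-production gate that applies one standard to every change, whether a person or an AI tool wrote it. The `ChangeRequest` fields, the `blocking_reasons` function, and the one-approval default are assumptions made for the example, not a description of any company's pipeline.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChangeRequest:
    tests_passed: bool     # result of the automated test suite
    human_approvals: int   # number of human reviewers who signed off


def blocking_reasons(change: ChangeRequest, min_approvals: int = 1) -> list[str]:
    """List what still blocks deployment; an empty list means the change may ship."""
    reasons = []
    if not change.tests_passed:
        reasons.append("automated tests are failing")
    if change.human_approvals < min_approvals:
        reasons.append("human review is missing")
    return reasons
```

The gate deliberately has no exemption based on who or what produced the code, which mirrors the point that AI-generated code should meet the same review standard as human-written code.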