Bad Data: Key Findings

- Poor data quality costs businesses over 30% of revenue, a problem best solved by unifying, cleaning, and governing data.

- Aligning data initiatives with business metrics builds trust, drives adoption, and sustains long-term investment.

- Strong foundations and active end-user engagement are essential before introducing AI or advanced analytics.

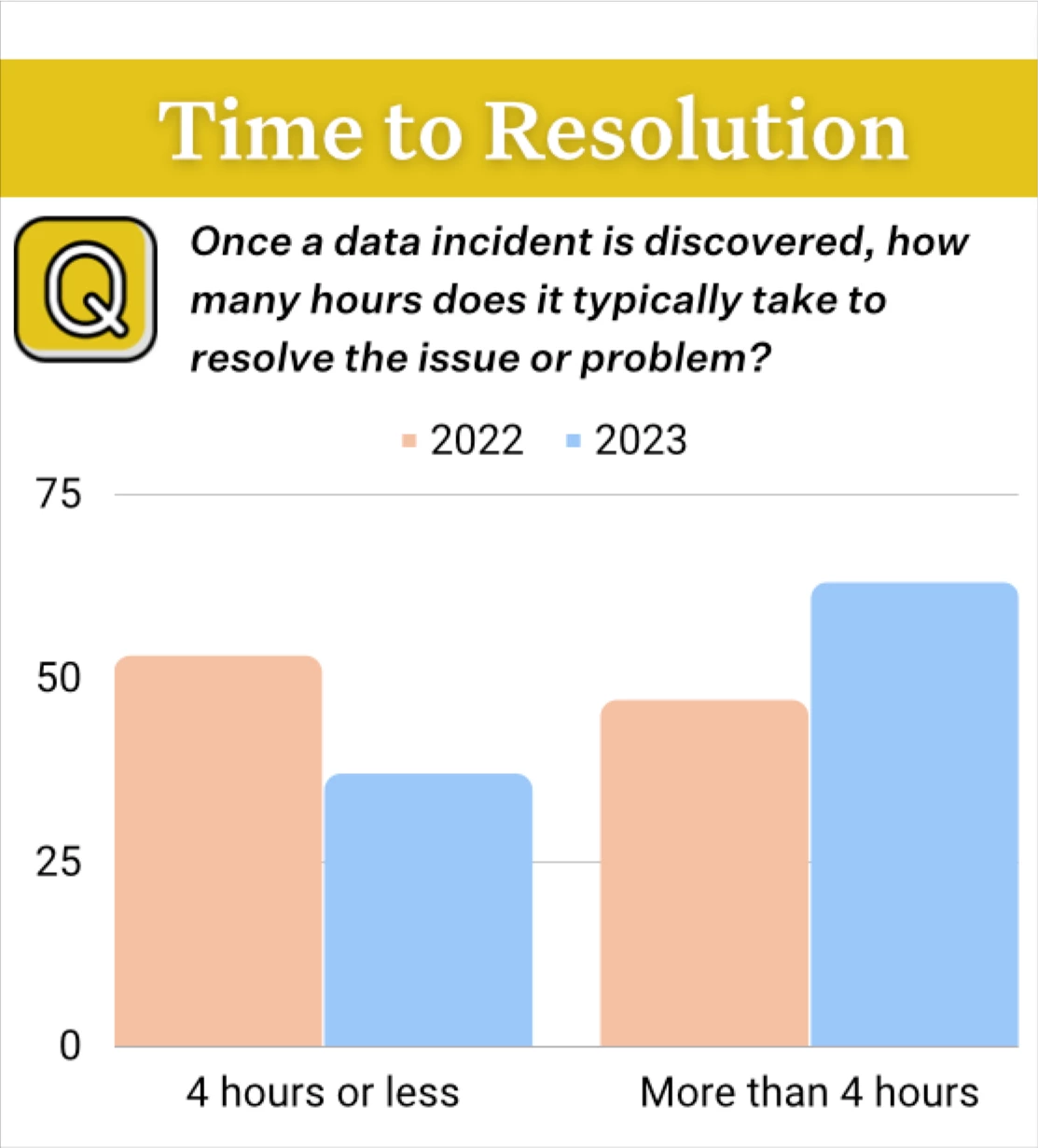

Poor data quality costs companies an average of 31% of revenue, according to the Monte Carlo/Data Quality Survey.

That loss is rising, up from 26% the previous year, and fixing it requires a disciplined approach to governing data.

Why should business owners care? Simply put, bad data often equals lost revenue.

This trend highlights a fundamental truth. Even the most advanced analytics platforms can deliver value only if the underlying data is:

- Accurate: free from errors or false information

- Consistent: the same across all systems and sources

- Complete: containing all required fields and records

These principles may sound basic, but the persistence of bad data shows that getting them right is far from easy, and ongoing issues are time-consuming to fix.
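To make the three properties concrete, here is a minimal Python sketch of how each one could be checked. Everything in it is an assumption for illustration: the field names, the sample records, and the `crm` and `billing` systems are hypothetical, and the email check is only a rough proxy for accuracy.

```python
# Hypothetical required fields; a real schema would define its own.
REQUIRED_FIELDS = ("customer_id", "email", "country")


def is_complete(record):
    """Complete: every required field is present and non-empty."""
    return all(record.get(f) not in (None, "") for f in REQUIRED_FIELDS)


def is_accurate(record):
    """Accurate (rough proxy): the email has a local part and a dotted domain."""
    email = record.get("email") or ""
    local, sep, domain = email.partition("@")
    return bool(local) and sep == "@" and "." in domain


def inconsistent_ids(system_a, system_b):
    """Consistent: a customer present in both systems has identical values."""
    b_by_id = {r["customer_id"]: r for r in system_b}
    return sorted(
        r["customer_id"]
        for r in system_a
        if r["customer_id"] in b_by_id and r != b_by_id[r["customer_id"]]
    )


# Illustrative sample data, not taken from the survey or the interview.
crm = [
    {"customer_id": 1, "email": "ana@example.com", "country": "US"},
    {"customer_id": 2, "email": "bob@example", "country": "DE"},
    {"customer_id": 3, "email": "cy@example.com", "country": ""},
]
billing = [
    {"customer_id": 1, "email": "ana@example.com", "country": "US"},
    {"customer_id": 2, "email": "bob@example", "country": "FR"},
]

incomplete = [r["customer_id"] for r in crm if not is_complete(r)]
inaccurate = [r["customer_id"] for r in crm if not is_accurate(r)]
mismatched = inconsistent_ids(crm, billing)
```

In this sample, customer 3 is missing a country, customer 2 has a malformed email, and customer 2 also has different countries in the two systems. Real checks would be richer, but even simple rules like these tend to surface problems early.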

To make data a true strategic asset, organizations must address persistent challenges such as fragmentation, governance gaps, and weak foundations.

In an exclusive interview with DesignRush, Anthony Deighton, CEO of Tamr, and Jennifer Jackson, CMO of Actian, offer practical guidance for building data ecosystems that are trustworthy, resilient, and capable of supporting long-term business goals.

Who Are the Experts?

Anthony Deighton is the CEO of Tamr, where he works with organizations to rethink how they manage and use data at scale. With a background in data integration, analytics, and enterprise technology, he’s focused on tackling some of the toughest challenges in data management through scalable, machine learning–powered solutions.

Jennifer Jackson is the CMO of Actian, leading the company’s global marketing strategy and operations. She brings 25 years of branding and digital marketing experience and a foundation in chemical engineering to her role. Jackson is passionate about using data to improve customer experiences, guide SaaS transformations, and strengthen partner ecosystems.

Prioritize Cleaning and Unifying Your Data to Build Trustworthy Insights

Invalid, fragmented, or inconsistent data erodes trust in the results.

With revenue impact climbing year over year, companies can’t afford to let fragmentation and duplication undermine decision-making.

Deighton explains that giving users access to insights addresses the consumption complaint, but a larger issue remains.

“The data, and therefore the insights, are often wrong,” he says.

The problem is often made worse by how organizations handle their tech stacks.

“The most persistent pain point is data fragmentation caused by technological tool accumulation… enterprises never retire data management tools – they just layer new ones on top,” Jackson adds.

The fix starts with addressing fragmentation head-on through consolidation, deduplication, and AI-assisted unification so that analytics can rest on a foundation of reliable, trustworthy data.
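As a rough illustration of deduplication, the simplest of those steps, the Python sketch below merges records that share a normalized name and email. It is an assumption-laden toy: the field names and sample records are hypothetical, and exact matching on normalized keys is far simpler than the AI-assisted unification described in the interview.

```python
def normalize(record):
    """Build a match key: lowercase, trimmed, with whitespace collapsed."""
    name = " ".join(record["name"].lower().split())
    email = record["email"].strip().lower()
    return (name, email)


def deduplicate(records):
    """Keep the first record per key and fill its blank fields from duplicates."""
    merged = {}
    for record in records:
        key = normalize(record)
        if key not in merged:
            merged[key] = dict(record)
            continue
        for field, value in record.items():
            if not merged[key].get(field):
                merged[key][field] = value
    return list(merged.values())


# Illustrative sample data only.
records = [
    {"name": "Ana Ruiz", "email": "ana@example.com", "phone": ""},
    {"name": "ana  ruiz ", "email": " ANA@example.com", "phone": "555-0100"},
    {"name": "Bob Lee", "email": "bob@example.com", "phone": "555-0199"},
]

unified = deduplicate(records)
```

Here the two "Ana Ruiz" entries collapse into one record that keeps the original spelling and gains the phone number from the duplicate. Production data mastering usually also needs fuzzy matching and survivorship rules, which this sketch does not attempt.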

Adopt Cloud-Native Architectures to Scale Effectively

Scaling a company’s data operations is about having the right foundation and the right rules for how data is managed.

Moving only part of the way to the cloud can leave teams stuck juggling old and new systems, slowing everything down.

“Companies go wrong when they fail to go all-in on a cloud-native environment,” Deighton says, pointing out that half-measures often create more problems than they solve.

Governance is just as important as infrastructure.

Too much centralization can be just as damaging as too little, especially if it turns carefully collected data into what Jackson calls “polluted data swamps.”

Her advice? Take a federated approach. Keep clear, company-wide standards for quality and security, but let teams own and manage the data that matters most to their work.

That way, you get the best of both worlds — control and flexibility — without sacrificing speed or trust in the data.

Measure What Matters to Businesses

Data initiatives gain more traction when tied to business metrics. Jackson recommends actionable measures to gauge impact:

- Problem-to-solution velocity – How quickly can the organization identify its biggest challenges and turn them into opportunities?

- Cross-functional alignment velocity – Are different departments making decisions based on the same data and shared understanding?

- Predictive accuracy – How well is the company anticipating customer needs, market changes, or competitive moves?

Deighton agrees that both sides of the equation matter.

“Executives must actually track both growth measures and technical KPIs in order to tell the full business value story,” he says.

When performance metrics show a direct line between data initiatives and tangible results, it’s much easier to keep investment and momentum going.

Build Partnerships That Shape Your Data Strategy

The strongest data strategies are built in close partnership with the people who will actually use them.

When vendors and clients work together, feedback becomes a driving force in product direction, and solutions evolve in step with real-world business challenges.

Jackson explains how Actian embeds this collaboration into its operations.

“We invested in Customer Success as a core function… Our Customer Advisory Board informs company strategy, product direction and new areas of innovations,” she says.

Deighton says Tamr takes a similar approach, emphasizing the value of continuous listening.

“We listen… host focus groups… learn from our customers… and ensure our roadmap delivers business value,” he adds.

When companies treat their customers as strategic partners, they ensure that data tools and capabilities stay relevant, adaptable, and directly aligned with business goals.

Get Your Data House in Order Before Layering on AI

AI can be transformative, but only if the data it works with is trustworthy.

Without strong governance, clean datasets, and a clear understanding of how information flows through the organization, AI risks producing wrong answers faster.

“The idea that ‘AI will solve all your data problems’ is a myth… AI actually amplifies your existing foundation – meaning, if your data is low quality and poorly governed, AI just creates inaccurate insights faster,” Jackson says.

The solution is to make AI an integral part of how data is unified and maintained from the start.

Deighton advocates embedding AI into data mastering processes so it becomes part of the governance framework, not just a tool bolted on after the fact.

AI success is about building a solid, well-governed data foundation first, so AI has something reliable to stand on.

Use Real-World Wins to Show the Value of Unified Data

Nothing makes the case for data management like seeing it in action.

Real examples help leaders visualize what’s possible and prove that investments in clean, connected data pay off.

At CHG Healthcare, advanced data mastering cut duplicates by 46% and streamlined provider interactions, making it easier for teams to work efficiently and serve customers better.

For GEMA, having clear visibility into decentralized assets led to significant gains: a 25% boost in productivity and annual cost savings of €1 million.

These results are proof that when data is unified and well-governed, it removes friction, reduces waste, and frees people to focus on higher-value work.

Involve the People Who Actually Use the Data

Even the most sophisticated data program can fail if it’s built in isolation from the people it’s meant to serve.

Data leaders need to go beyond dashboards and reports, and spend time talking to end users about what information they actually need and whether they trust it.

“I encourage CDOs to think less about what their data metrics show them and… ask business leaders — the people who are actually using the data — what data matters and if they trust it,” Deighton says.

Jackson agrees, framing it as a mindset shift.

Instead of asking, “What data do we have?” she suggests focusing on whether it’s “organized, governed, and ready to drive responsible AI and business decisions.”

When data strategies are shaped by real-world input, the result is a system people believe in and actively use, making the investment worthwhile.

Reliable Data Strategy FAQs

What is the first step in building a reliable data strategy?

The first step is to unify and clean fragmented data across systems. Removing duplicates, fixing inconsistencies, and applying governance ensures that insights are based on accurate, trustworthy information from the start.

Why does poor data quality hurt business revenue?

Poor data quality leads to wrong insights, wasted resources, and bad decisions. A Monte Carlo survey found over half of companies lost 25% or more of revenue due to inaccurate or inconsistent data.

How can cloud-native architectures improve data management?

Fully adopting a cloud-native environment streamlines operations, reduces reliance on outdated systems, and supports scalability. This approach improves performance, security, and governance while enabling faster, more flexible data workflows.

Why should data initiatives be tied to business metrics?

Linking data programs to business outcomes, like problem-to-solution velocity or predictive accuracy, builds executive trust. This alignment makes it easier to justify investments and sustain long-term adoption.

Can AI fix bad data quality?

No. AI amplifies whatever data it’s given. If the underlying data is low quality, AI will produce wrong results faster. Success comes from embedding AI into a well-governed, clean data foundation from the start.
