API Resilience: Key Findings

- API failures cost Global 2000 firms $400 billion in 2024, showing why downtime is no longer tolerable.

- Resilient APIs require built-in redundancy, intelligent retries, and real-time visibility.

- AI is driving self-healing infrastructure, but smart planning still underpins long-term stability.

Every minute your APIs fail, you lose revenue and customer trust.

Even minor disruptions can carry staggering costs: about $6 million per year, according to a commissioned Forrester Consulting survey on the financial impact of internet disruptions.

Across Global 2000 companies, the bill reached $400 billion in 2024, and McKinsey has advised tech officers to earmark explicit investments toward scalability, including API design.

As Jeff Finkelstein, founder and president of Customer Paradigm, puts it:

“It really impacts end customers when a system isn't stable and orders, shipments, or billing go missing. One missed shipment might not be the most catastrophic event of all time, but it creates quite a bit of doubt in a company when things don't work most of the time.”

Our interview reveals why fault‑tolerant APIs must sit at the heart of your digital infrastructure, and how Jeff’s real‑world strategies can keep your systems humming under pressure.

Who Is Jeff Finkelstein?

Jeff Finkelstein is the founder of Customer Paradigm, a Boulder-based interactive marketing firm serving clients like Xcel Energy, 3M, Level 3, and BP. He helps businesses build customer-focused digital experiences through SEO, eCommerce, and web strategy. Recognized as an expert in internet privacy and web marketing, Jeff ranks in the top 125 of 350,000 SEO professionals on Moz.com and has been featured in The New York Times as a “Web Guru.” His firm received the 2008 Rocky Mountain Direct Marketing Association Supplier of the Year Award.

Editor's Note: This is a sponsored article created in partnership with Customer Paradigm.

The financial fallout is only part of the API story.

Operational instability also puts teams in high-stakes situations where failure isn’t just expensive; it’s unacceptable.

In fact, 37% of technology leaders name API security and stability among their top challenges, according to a Salt Security Perspective on the 2024 Gartner Market Guide for API Protection.

The problem, Jeff says, is that many teams ship APIs with a “set it and forget it” mindset and pay the price when a downstream system goes dark.

“But what happens when you're trying to send in a new order or shipment and the system you're sending to is down right now? What do you do? Do you just hope this never happens?” he asks.

In mission‑critical flows, every second offline can erode trust, damage reputations, and, even worse, risk lives.

Hope isn’t a strategy. When a hospital client’s life‑support delivery hangs on a flaky endpoint, that hope is a liability:

“It’s really not so good for the patient when the hospital needs surgical equipment delivered, but one of the subsystems that receives it is down temporarily,” Jeff says.

Mitigating Single Points of Failure

When the stakes are that high, resiliency has to be second nature. And for Jeff, it is.

Long before he was building APIs, he learned about failure the hard way: suspended thousands of feet in the air with nothing but rope, anchors, and trust in his system.

He had spent years in alpine mountaineering, learning to “trust in secure, safe systems, and look for single points of failure.”

And what he learned is that, just as climbers use multiple anchors, resilient APIs must rely on diverse fail‑safes, not a lone retry that leaves you dangling.

Jeff applies this climber’s discipline directly to API architecture:

- Redundant Endpoints: Mirror critical services across regions or providers

- Graceful Degradation: Serve cached or partial data under strain

- Circuit Breakers: Halt calls to unhealthy subsystems before errors cascade

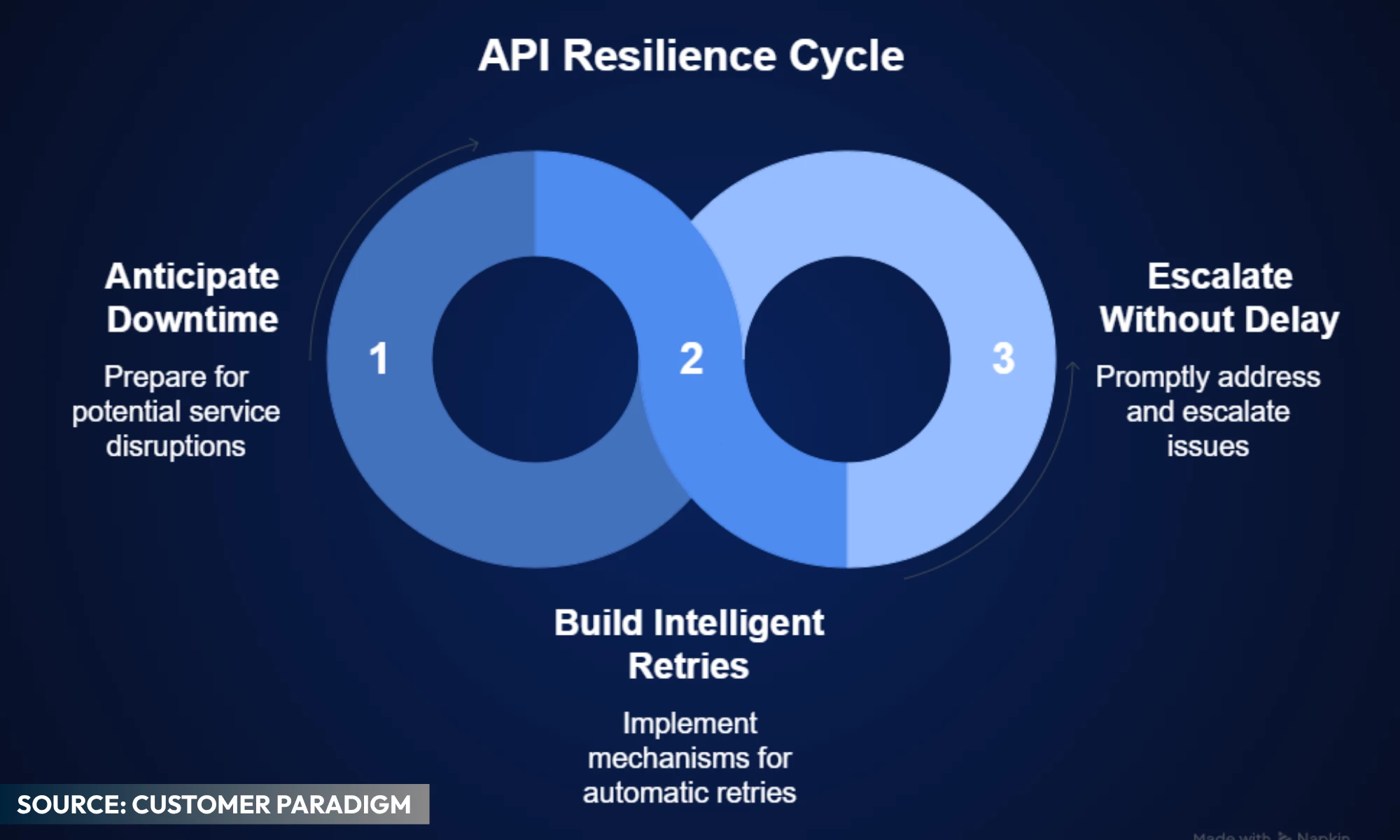
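To make the last two items concrete, here is a minimal Python sketch of a circuit breaker that falls back to cached data while a dependency is unhealthy. It is an illustration under stated assumptions, not Customer Paradigm's implementation: the `CircuitBreaker` class, its thresholds, and the `primary` and `fallback` callables are all hypothetical names chosen for this example.

```python
import time
from typing import Callable, Optional


class CircuitBreaker:
    """Stops calling a failing dependency until a cool-down has elapsed."""

    def __init__(self, max_failures: int = 3, reset_after: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_failures = max_failures  # consecutive failures before opening
        self.reset_after = reset_after    # cool-down in seconds (assumed value)
        self.clock = clock                # injectable so tests need not wait
        self.failures = 0
        self.opened_at: Optional[float] = None

    def is_open(self) -> bool:
        if self.opened_at is None:
            return False
        if self.clock() - self.opened_at >= self.reset_after:
            # Cool-down elapsed: allow a trial call. One more failure
            # will reopen the breaker immediately.
            self.opened_at = None
            self.failures = self.max_failures - 1
            return False
        return True

    def call(self, primary: Callable[[], object],
             fallback: Callable[[], object]) -> object:
        """Run `primary`, or serve `fallback` (e.g. cached data) instead."""
        if self.is_open():
            return fallback()  # graceful degradation: skip the sick subsystem
        try:
            result = primary()
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()
            return fallback()
        self.failures = 0  # a success closes the breaker fully
        return result
```

A caller might wrap a live lookup as `breaker.call(fetch_live_inventory, read_cached_inventory)`, where both function names are placeholders. The point of the design is that once the breaker opens, the failing subsystem stops receiving traffic until the cool-down passes, so errors do not cascade.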

His recipe is simple: anticipate downtime, build intelligent retries, and escalate without delay.

The trick is to expect that the system you're sending to could be offline.

“What do you do? Perhaps send the shipment or order, or other data in, but listen for the request from the other system — letting you know that it went through,” he says.

If no confirmation arrives, retry on a widening schedule: after five minutes, then 20 minutes, then four hours, then one day. In the meantime, send a notification so someone knows the system is still trying to connect.
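A rough Python sketch of that retry-and-notify pattern follows. The delays come from the schedule Jeff describes; everything else is an assumption made for illustration, including the `deliver_with_retries` function, the idea that `send` returns `True` only once the receiving system has confirmed the payload, and the injectable `sleep` that lets the logic run without real waiting.

```python
import time
from typing import Callable, Sequence

# Delays between attempts, in seconds: five minutes, 20 minutes,
# four hours, one day (the schedule mentioned in the article).
RETRY_DELAYS = (5 * 60, 20 * 60, 4 * 60 * 60, 24 * 60 * 60)


def deliver_with_retries(
    send: Callable[[], bool],
    notify: Callable[[str], None],
    delays: Sequence[float] = RETRY_DELAYS,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Send until acknowledged, waiting out `delays` between attempts.

    `send` (hypothetical) should return True only after the other system
    confirms receipt. Returns False if every attempt goes unacknowledged.
    """
    total = len(delays) + 1  # one initial attempt plus one per delay
    for attempt in range(1, total + 1):
        try:
            if send():
                return True
        except OSError:
            pass  # connection errors count as a missing acknowledgment
        if attempt == total:
            notify(f"Attempt {attempt} of {total} failed; giving up.")
            return False
        delay = delays[attempt - 1]
        # Tell a human that the system is still trying to connect.
        notify(f"Attempt {attempt} of {total} failed; retrying in {delay} seconds.")
        sleep(delay)
    return False
```

In production the waiting would more likely live in a job queue or scheduler than in a blocking `sleep`, since a one-day pause should survive a process restart. The sketch keeps everything in one function only to show the order of events: attempt, check for confirmation, notify, wait, repeat.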

The same applies to earning trust with big-name clients who can’t afford downtime.

Jeff believes the best approach is to be proactive: ask plenty of upfront questions about what’s important, determine how specific issues should be handled, and establish whether the client wants the system to be flexible or rigid.

Building the Audit Trail: Visibility and Root Cause Analysis

But building a resilient system is only half the equation. You also need clear visibility into how it performs under pressure.

That’s why Jeff encourages CTOs and technical founders to move beyond assumptions and track real indicators of resilience at scale:

- Monitor server response time, uptime, and throughput

- Enable step‑by‑step traceability (“show your work”)

- Record timestamps and audit trails for all requests and responses
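As a small illustration of the traceability and audit-trail items, the Python sketch below writes each processing step to a database table with a UTC timestamp, then reads the steps back in order for one request. The table layout and the `open_audit_log`, `record_step`, and `trace` helpers are hypothetical, and SQLite is used only because it ships with Python; the article does not say which database Jeff's team uses.

```python
import json
import sqlite3
from datetime import datetime, timezone


def open_audit_log(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) the audit table. The schema is an assumed example."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS audit_log (
               id INTEGER PRIMARY KEY AUTOINCREMENT,
               request_id TEXT NOT NULL,
               step TEXT NOT NULL,
               recorded_at TEXT NOT NULL,
               payload TEXT NOT NULL
           )"""
    )
    return conn


def record_step(conn: sqlite3.Connection, request_id: str,
                step: str, payload: dict) -> None:
    """Store one step of a request with the time it happened (UTC)."""
    conn.execute(
        "INSERT INTO audit_log (request_id, step, recorded_at, payload) "
        "VALUES (?, ?, ?, ?)",
        (request_id, step, datetime.now(timezone.utc).isoformat(),
         json.dumps(payload, sort_keys=True)),
    )
    conn.commit()


def trace(conn: sqlite3.Connection, request_id: str) -> list:
    """Return every recorded step for one request, oldest first."""
    rows = conn.execute(
        "SELECT step, recorded_at, payload FROM audit_log "
        "WHERE request_id = ? ORDER BY id",
        (request_id,),
    ).fetchall()
    return [(step, ts, json.loads(payload)) for step, ts, payload in rows]
```

Calling `record_step` at each stage (request received, validation result, response sent) lets `trace(conn, "order-1")` replay how a single order moved through the system, which is the "show your work" property the list describes.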

Most important, however, is whether the system’s design lets an analyst review the work at each step in the database.

“For example, so that most of the important decisions in the coding can be verified and reviewed,” Jeff adds.

Yet even the best dashboards and audit trails can’t preempt every issue. Decision‑makers must also watch out for warning signs that reveal deeper flaws.

What red flags should they never ignore?

Your system will whisper before it screams. Pay attention to early warnings and act on them immediately. Jeff highlights two standout examples:

1. Recurring Errors

According to Jeff, the same glitch over and over means your quick fixes aren’t enough.

Solution: Drill down, find the root cause, and build a permanent solution.

2. “We’ve Never Failed” Claims

Jeff believes that anyone who insists they’ve had zero production issues is hiding something. Real-world systems fail.

Solution: Look for teams that share postmortems, own their mistakes, and improve from them.

“The reality is that anyone who has been doing large-scale systems has seen systems blow up, become unstable, suffer downtime, or have serious issues. Hopefully, they were able to learn from their mistakes and do things better next time.

And unlike skydiving, where you can only make a serious mistake once, IT gives you the ability to see small issues and fix them before it goes wrong,” Jeff says.

These red flags underscore why theory alone isn’t enough. Real resilience shines when you turn a fragile, manual pipeline into an automated powerhouse.

In one project, Jeff’s team tackled a distributor that relied on three staffers to retype orders from emails, faxes, and paperwork.

That manual process was an error‑ridden bottleneck that threatened high‑value contracts.

The challenge: Manual retyping of orders and faxes led to frequent data integrity issues and costly delays.

The fix came in three phases:

- Scan & Digitize: Customer Paradigm’s team built a pipeline to convert PDFs, faxes, and images into structured files. This reduced human input but still suffered from garbled or incomplete fields.

- API Endpoint: Next, the team deployed a flexible API capable of handling varied payloads. Large-scale tests across thousands of transactions helped map edge cases and stabilize parsing.

- Iteration & Visibility: Over five weeks, the team tuned retry logic, logging, and field mapping. Real-time dashboards were added to flag duplicates and failures instantly.
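The article does not describe how the dashboards detected duplicates, so the following Python sketch shows only one common approach: hash a normalized copy of each order and flag any order whose hash has already been seen. The `fingerprint` and `flag_duplicates` functions and the sample field names are hypothetical.

```python
import hashlib
import json


def fingerprint(order: dict) -> str:
    """Hash an order so key order, letter case, and stray spaces don't matter."""
    normalized = {
        key.strip().lower(): str(value).strip().lower()
        for key, value in order.items()
    }
    canonical = json.dumps(normalized, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def flag_duplicates(orders: list) -> list:
    """Return the indexes of orders whose fingerprint was already seen."""
    seen = set()
    duplicates = []
    for index, order in enumerate(orders):
        digest = fingerprint(order)
        if digest in seen:
            duplicates.append(index)
        else:
            seen.add(digest)
    return duplicates


# Example: the second order repeats the first with different formatting.
orders = [
    {"po": "1001", "sku": "A1", "qty": 2},
    {"sku": "a1 ", "qty": "2", "PO": "1001"},
    {"po": "1002", "sku": "A1", "qty": 2},
]
print(flag_duplicates(orders))  # prints [1]
```

Normalizing before hashing matters for scanned input in particular, because the same order can arrive with inconsistent capitalization or padding depending on how a fax or PDF was parsed.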

The impact? Manual rework disappeared. The team moved from firefighting to monitoring. Accuracy and throughput improved. What was once a bottleneck became a stable, “boring” system.

As Jeff puts it, “API development, when done right, is a lot like the insurance industry. You plan, hope, pray, and work extremely hard to mitigate risk and avoid downtime. The best outcome… is something that just works.”

In other words, exactly what you want in critical infrastructure.

“An API system, if built correctly, should have plenty of stability from the code base. You should be able to throw quite a bit at it and it should just run. Speed? That's just how much processor power, memory, and bandwidth you want to allocate,” Jeff says.

“In this day and age, as long as you have something running in a modern server infrastructure/data center, you should be pretty good. We run a lot of our development and production systems on AWS, and don't have to worry a ton about it.”

Now, the stability strategies we’ve covered are critical, but they aren’t static.

As cloud infrastructure, artificial intelligence (AI), and development tooling evolve, so do the ways we design for resilience.

Jeff sees this shift already underway.

“We're already using machine learning and AI to help build and deploy API systems, and use them as tools to help create code and decode and scan documents.”

He predicts a wave of self-healing systems: infrastructure that can automatically correct common errors, validate backups, and scale resources the moment a traffic surge hits.

Systems that don’t just notify you something’s broken, but quietly fix it before you even notice.

That kind of automation won’t replace smart planning, but it will supercharge it.

Embrace Risk, Engineer for Resilience

Hope isn’t a strategy, whether you’re dangling off a cliff or managing mission‑critical digital systems.

Jeff’s mountaineering mindset demonstrates that anticipating failure, building redundancy, and maintaining clear visibility aren’t optional extras; they’re key survival skills.

Don’t wait for your next outage (or your next cliffhanger) to test your resilience. Take Jeff’s playbook and:

- Audit your current API architecture for single points of failure.

- Layer in intelligent retry policies and real‑time alerts.

- Measure everything, so you can prove to stakeholders (and yourself) that your infrastructure won’t let you down.

After all, bulletproof API design isn’t about eliminating risk; it’s about embracing it, planning for it, and outsmarting it.

Strap in, build smart, and let your systems carry the weight — no prayer required.