Talent Attrition in the AI Age: Key Findings

- AI is reducing repetitive support work, but that doesn’t always reduce pressure on human agents since the remaining work can become more emotional and complex to resolve.

- Support teams may face a hidden attrition risk if companies judge AI success only through ticket reduction, without redesigning the human role left behind.

- Hiring, onboarding, and training need to change as support agents move from task execution to judgment-based work that requires curiosity, intuition, creativity, and AI fluency.

AI is often pitched as a way to make customer support easier. For basic requests, that promise holds up.

AI can handle the routine work that customers expect to be resolved quickly. But this leaves human agents with more complex issues that can’t be ignored.

That creates a problem many leaders overlook.

Lower ticket volume can still put pressure on support teams if the remaining conversations require a higher level of judgment, empathy, and emotional stamina.

That strain can drain support teams and lead to lower-quality service, which is a serious problem in this industry.

According to an Accenture survey, 87% of people are likely to avoid a company after just one bad service experience.

That makes the cases AI cannot handle more important, because those are often the interactions that decide whether a customer stays or walks away.

Funmi Mide-Ajala is the Director of Customer Support and Digital Operations at Hugo Inc., a global provider of customer support, technical support, and AI-powered operations solutions.

Hugo Inc. helps brands build and manage high-performance teams across regions and channels.

For Mide-Ajala, the AI conversation is too often framed around whether bots are coming for support jobs, a framing that misses more immediate issues.

“[...] The more I interact with AI, the more I'm beginning to see that, yes, it's gonna take some jobs, but it's going to leave us humans to be more human,” she says.

“And to be more human means that we're going to be more creative or we're going to have to plug in more into our creative side. It means that we're gonna be more personal, we're gonna exercise more judgment.”

In episode No. 137 of the DesignRush Podcast, Mide-Ajala explains how AI is changing support talent, training, and attrition risk.

She also looks at why companies need to rethink the human side of automation before it starts affecting performance and retention.

Watch the full episode now on YouTube or listen on Spotify.

Who Is Funmi Mide-Ajala?

Funmi Mide-Ajala is the Director of Customer Support and Digital Operations at Hugo Inc., where she builds customer support systems, AI-assisted workflows, and team development models for fast-growing companies.

Her work focuses on making support operations more efficient and reliable without losing the human layer that customers still expect.

The Hidden Attrition Risk Behind AI Efficiency

Most companies look at AI in support through the lens of efficiency.

When a tool lowers ticket volume and handles repetitive work faster, it looks like progress.

Human agents, however, often inherit the work that AI cannot resolve.

AI can clear the simple requests before they ever reach the team. What remains is the kind of conversation where a customer needs a person who can read the situation and make the right call.

“[...] Stuff that's repetitive, stuff that people don't really care about that much. But we're going to have to then be more creative. We're going to have to create more personal connections with each other. We're going to have to exercise more judgment in how we operate as people.”

That is where the talent problem starts. The emotional weight of the role can increase while the company believes automation has made the job easier.

As Mide-Ajala explains, companies risk missing three talent problems if they treat AI only as a support efficiency tool:

1. Measuring AI Success Only by Ticket Reduction

A common assumption around AI is that fewer tickets will naturally reduce burnout.

Mide-Ajala challenges that idea.

“[...] If you have to deal more and more with the more painful things, handle more emotions, that in itself can be intense and can be draining.”

“[...] The volume has reduced and maybe you're not working overtime hours and cranking through tickets as you used to, but your emotional battery is draining every day,” she says.

Support volume tells only part of the story. The system looks efficient on the surface because the number of resolved tickets goes up.

However, the weight of the work left for humans to resolve makes the job itself difficult to sustain.

That’s because the work asks agents to protect the customer relationship while deciding what the business can reasonably do.

“[...] You're critically analyzing things and putting pressure on your creative skills. At the same time, trying to make people happy, right?” Mide-Ajala adds.

“So you have to make judgment calls between what are policies and processes and how people are going to walk away feeling about the business.”

“[...] But at the end of the day, you have to turn the experience around for the customer. You have to leave them happy.”

In other words, such a system leaves agents in a catch-22 that only accelerates burnout.

2. Hiring for Old Support Roles After the Work Has Changed

Many companies still treat support roles as if the work has not changed.

The old support model rewarded people who could follow a process. Today, the role asks for people who can stay steady when the process no longer gives them the answer.

Mide-Ajala says companies need to start by asking, “After automation, what work is actually left?”

“[...] There will not be, and again, let's blame AI for this. We're going to move away from playbooks now. The playbook is expired,” she says.

That line reframes the talent problem.

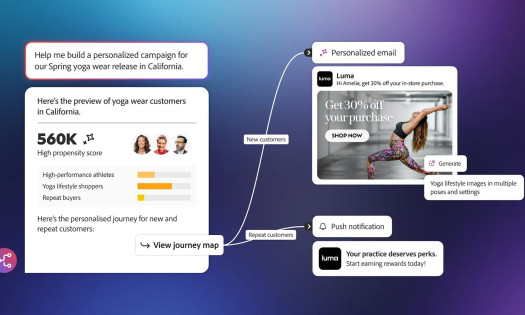

“[...] How AI is solved for one business is going to be different from how it is solved for another business,” Mide-Ajala says.

Some companies may use AI mostly as an assistant. Others may automate a large share of basic conversations.

Those choices directly influence the type of people they need.

“[...] What's going to be left after your automation is done, and then you have to custom-make your training, your onboarding, your hiring, your profiling to what's left,” she says.

That means hiring has to become more specific. The question is whether people can operate when the script runs out.

3. Training People to Follow Fixed Scripts When the Work Now Requires Judgment

If support work becomes more judgment-based, then training cannot stay mostly process-based.

Traditional onboarding often prepares new agents for the process, not for the kind of real-time decisions the role now demands.

It also doesn’t fully equip people for a role where agents are expected to handle ambiguity, emotion, and higher-stakes decisions.

Mide-Ajala says training needs to be rebuilt around the work agents are now expected to do.

“[...] There will be a shift in, first of all, understanding that our regular training curriculum has to be overhauled into building leaders who can make the judgment calls that we want them to make,” she says.

That creates a major assignment for learning and development teams.

Instead of training only for compliance and process accuracy, companies need to prepare agents for situations where the right response is not always written into the process.

“[...] It's less classroom instructions but it's more how do you help people to be more cognitive? How do you help people to make decisions on the spot?” Mide-Ajala says.

That preparation needs to happen before the work becomes harder.

“[...] There’s gonna be a lot of hands-on training. It's gonna be a lot of exposure to new things. There's gonna be a lot of testing people's limits.”

If the remaining human work is more complex, people need to be prepared for that complexity before it becomes overwhelming.

The New Support Role Requires Stronger Baseline Skills

AI may also change what entry-level means in customer support.

In the past, new agents could often begin with simple requests and gradually move into more complex work. If AI takes much of that simple work, the learning path changes.

Mide-Ajala says that may make companies more intentional about the people they bring in.

“[...] I think because of how intentional we are going to have to be about hiring, we will naturally gravitate towards the smarter bunch of people, the more creative people,” she says.

That does not mean support teams will only need senior hires.

It means companies may need to look for stronger baseline skills earlier, especially in roles where agents are expected to handle more complex cases from the start.

For Mide-Ajala, standing out will matter more as the work becomes harder.

“You can no longer be a blue umbrella. You can't blend in. You have to be the red umbrella now.”

If companies keep hiring for the old role while AI changes the actual work, people may enter jobs they are neither prepared nor supported to handle.

The Skills That Matter When AI Takes the Routine Work

If support agents are left with harder cases, the skill profile changes.

Mide-Ajala points first to curiosity.

“[...] You have to be curious. I think that has always mattered in CX anyway. You have to be curious, but I think now you have to be more curious,” she says.

That curiosity matters more when agents no longer have a clear decision tree to follow.

She also points to intuition as a critical skill when the answer is not obvious.

“You have to be more intuitive because if you don't have a decision tree any longer and you still have to make a decision, you're gonna have to be bold enough to make judgment calls, but you can't make the judgment calls blindly.”

The same applies to AI fluency. The agents who remain valuable will be the ones who know how to use the technology well enough to solve harder problems.

“The winner will emerge from a bunch of people who know how to master this tool and know how to use it to their advantage, know how to solve new problems with the same tool that is taking your job, unfortunately,” Mide-Ajala says.

McKinsey reported in February 2026 that customer care leaders are rethinking how humans and AI agents work together as AI affects customer experience, cost reduction, and revenue growth.

And while AI may take the routine work first, companies still need people who can handle what automation leaves behind.