Data Breaches Expose AI Risk: Key Findings

- AI-driven growth is outpacing security standards, as organizations rapidly embed AI into products without evolving the development frameworks needed to protect increasingly complex and interconnected systems.

- Breaches are costly and reputationally damaging, with thousands of incidents each year, an average breach cost of $4.4 million, and damage that extends far beyond regulatory fines.

- AI security must be built into development from day one, requiring architectural threat modeling, strict access controls, continuous cloud monitoring, and executive-level accountability rather than treating security as a late-stage compliance task.

It’s hard to go a day now without interacting with artificial intelligence in some way. It sharpens our photos, helps write our emails, and quietly improves the content we publish.

That ease is what makes AI feel almost invisible.

Most people never think about the servers, the storage layers, or the permissions quietly working in the background.

They just see the result and assume everything behind it is handled responsibly.

That assumption was tested when reports surfaced that more than 1.5 million private images, 385,000 videos, and millions of user-generated AI media files had been exposed.

This wasn’t the result of some complex cyberattack.

The data was simply left exposed through a misconfigured cloud storage bucket connected to an AI-powered Android editing app.

Aleksey Gureiev, Technical Lead at the multidisciplinary software design and development agency Shakuro, says that incidents like this are easy to frame as isolated technical mistakes.

However, in reality, they reveal a deeper issue.

“AI capabilities are advancing so rapidly that the development standards meant to secure them haven’t evolved at the same pace,” he said.

Editor's Note: This is a sponsored article created in partnership with Shakuro.

AI Data Breaches by the Numbers

What’s concerning is that this reported AI app exposure is not an isolated case. Data breaches are becoming a daily occurrence rather than a rare disruption.

According to Breachsense, more than 4,100 publicly disclosed data breaches occurred in the last year, averaging roughly 11 breaches every day.

In just one quarter, nearly 109 million accounts were compromised.

The financial consequences are equally significant.

IBM’s Cost of a Data Breach 2025 Report suggests that the global average cost of a breach amounts to $4.4 million.

The damage doesn’t stop at fines or cleanup costs. It lingers in lost customer confidence and weakened brand credibility.

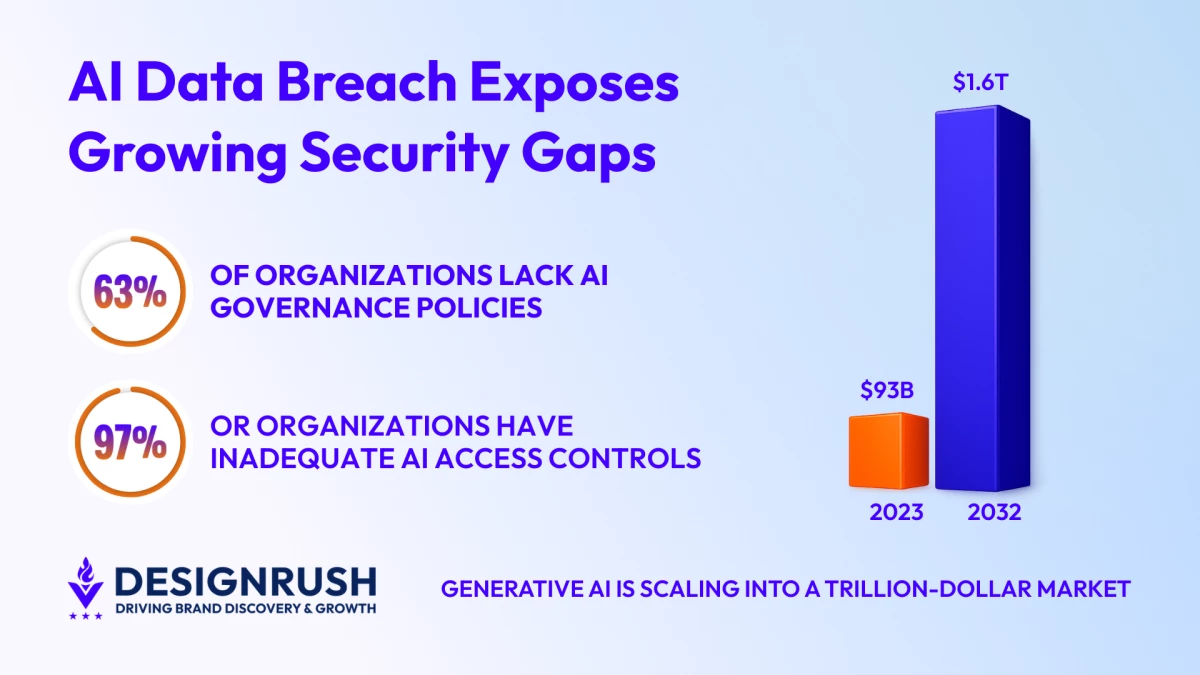

At the same time, governance gaps remain widespread.

As many as 63% of organizations lack formal AI governance policies, and among those that experienced AI-related security incidents, 97% reported inadequate AI access controls.

The speed at which AI is being rolled out, with little of the usual caution, compounds the problem.

As AI spreads across consumer and enterprise platforms, the amount of sensitive data passing through those layers keeps growing.

And the money following this shift is just as significant.

Bloomberg Intelligence projects the generative AI market will reach $1.6 trillion by 2032, up from $93 billion in 2023.
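To put that projection in perspective, the two figures quoted above can be turned into an implied annual growth rate. The sketch below is a back-of-the-envelope calculation, not a number from the Bloomberg Intelligence report itself. It assumes smooth compounding over the nine years between 2023 and 2032, which works out to roughly 37% a year.

```python
# Figures quoted in the article, in billions of US dollars.
START_VALUE = 93.0     # 2023
END_VALUE = 1600.0     # 2032 projection
YEARS = 2032 - 2023    # nine compounding periods (an assumption of this sketch)


def implied_cagr(start: float, end: float, years: int) -> float:
    """Return the constant annual growth rate that turns `start` into `end`."""
    return (end / start) ** (1 / years) - 1


rate = implied_cagr(START_VALUE, END_VALUE, YEARS)
print(f"Implied compound annual growth rate: {rate:.1%}")
```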

“When thousands of breaches are already occurring each year and tens of millions of accounts can be compromised in a single quarter, adding increasingly complex AI architectures without equally rigorous safeguards amplifies risk,” Gureiev said.

How AI Expands the Cybersecurity Attack Surface

Artificial intelligence does more than enhance features. It reshapes the architecture of applications.

Traditional systems operate within relatively defined boundaries, where the frontend connects to backend services and databases.

Security models evolved around that structure.

AI-powered systems introduce additional layers, including model inference endpoints, cloud storage for training data and user uploads, third-party APIs, real-time data pipelines, and temporary processing environments.

Each of these layers increases interconnection.

And every connection requires governance. Without it, every overlooked permission becomes a potential vulnerability.

“AI expands the attack surface not only through complexity, but through distributed complexity,” Gureiev said.

“Systems become more dynamic and interconnected, and their dependencies multiply.”
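One way to picture the layers described above is as a graph in which every link between components either has a named access policy or does not. The sketch below is purely illustrative. The component names, policy names, and the `Connection` type are hypothetical and do not describe any real system mentioned in this article.

```python
from dataclasses import dataclass
from typing import List, Optional, Set


@dataclass(frozen=True)
class Connection:
    """A link between two components, plus the policy governing it (if any)."""
    source: str
    target: str
    policy: Optional[str] = None


# Hypothetical inventory for an AI-powered app, loosely following the layers
# named in the article. Every name here is made up for illustration.
CONNECTIONS = [
    Connection("frontend", "backend", policy="user-session-auth"),
    Connection("backend", "database", policy="db-service-role"),
    Connection("backend", "inference-endpoint", policy="internal-api-key"),
    Connection("backend", "upload-bucket"),               # no policy recorded
    Connection("inference-endpoint", "third-party-api", policy="vendor-token"),
    Connection("data-pipeline", "upload-bucket"),         # no policy recorded
    Connection("inference-endpoint", "temp-processing"),  # no policy recorded
]


def components(connections: List[Connection]) -> Set[str]:
    """Return every component that appears on either end of a connection."""
    return {c.source for c in connections} | {c.target for c in connections}


def ungoverned(connections: List[Connection]) -> List[Connection]:
    """Return the connections that have no access policy attached."""
    return [c for c in connections if not c.policy]


if __name__ == "__main__":
    print(f"{len(components(CONNECTIONS))} components, {len(CONNECTIONS)} connections")
    for conn in ungoverned(CONNECTIONS):
        print(f"needs review: {conn.source} -> {conn.target}")
```

In this toy inventory the traditional frontend, backend, and database links are all covered, while the links added for AI features are the ones missing a policy. That is the pattern the quote warns about.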

When development standards fail to adapt to this structural shift, risk can quickly accumulate.

AI Security Best Practices for Modern App Development

AI cannot be treated as a decorative layer applied to existing systems. It must be approached as foundational infrastructure.

That demands security integration across the entire application lifecycle.

“It begins with architectural threat modeling that accounts for AI workflows and the movement of sensitive data between services,” Gureiev said.

This includes disciplined credential management and strict access controls across cloud environments.

It also requires eliminating hardcoded secrets and enforcing least-privilege permissions at every level.
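As a rough illustration of what checking for hardcoded secrets can look like, the sketch below scans source text line by line for a few well-known shapes. The patterns and the `find_hardcoded_secrets` helper are assumptions made for this example. Dedicated secret scanners use far larger rule sets, and nothing here reflects a specific tool used by Shakuro or any other company named in this article.

```python
import re
from typing import List, Tuple

# Illustrative patterns only; a production scanner would carry many more rules.
SECRET_PATTERNS = {
    # AWS access key IDs are "AKIA" followed by 16 uppercase letters or digits.
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # PEM-encoded private key header.
    "private_key_block": re.compile(r"-----BEGIN (?:RSA |EC )?PRIVATE KEY-----"),
    # A secret-looking name assigned a quoted literal of 8+ characters.
    "assigned_secret": re.compile(
        r"(?i)(?:api[_-]?key|secret|password|token)\w*\s*[:=]\s*['\"][^'\"]{8,}['\"]"
    ),
}


def find_hardcoded_secrets(source: str) -> List[Tuple[int, str]]:
    """Return (line_number, pattern_name) for each suspicious line in `source`."""
    findings = []
    for line_no, line in enumerate(source.splitlines(), start=1):
        for name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((line_no, name))
    return findings


if __name__ == "__main__":
    sample = (
        'API_KEY = "not-a-real-key-123456"\n'          # literal in code: flagged
        'db_password = os.environ["DB_PASSWORD"]\n'    # read from environment: ok
    )
    for line_no, name in find_hardcoded_secrets(sample):
        print(f"line {line_no}: possible {name}")
```

A check like this is usually run before code is committed or merged, so that credentials are read from the environment or a secrets manager rather than from the source tree.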

Moreover, it calls for automated configuration audits before deployment and continuous monitoring after release.
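An automated configuration audit can be as simple as a script that refuses to ship a storage policy open to everyone. The article does not say which cloud provider was involved in the exposure described earlier, so the sketch below assumes an AWS-style JSON bucket policy (`Statement`, `Effect`, `Principal`, `Action`) purely for illustration. The `audit_bucket_policy` helper is hypothetical. It also ignores `Condition` blocks, which can legitimately narrow a wildcard principal.

```python
from typing import Any, List


def _as_list(value: Any) -> list:
    """Policy fields may hold a single value or a list; normalize to a list."""
    return value if isinstance(value, list) else [value]


def audit_bucket_policy(policy: dict) -> List[str]:
    """Flag Allow statements that are public or grant wildcard actions."""
    findings = []
    for index, stmt in enumerate(_as_list(policy.get("Statement", []))):
        if stmt.get("Effect") != "Allow":
            continue

        # Public access: Principal is "*" or {"AWS": "*"}.
        principal = stmt.get("Principal")
        is_public = principal == "*" or (
            isinstance(principal, dict) and "*" in _as_list(principal.get("AWS", []))
        )
        if is_public:
            findings.append(f"statement {index}: open to any principal")

        # Least privilege: flag "*" or service-wide wildcards such as "s3:*".
        actions = _as_list(stmt.get("Action", []))
        if any(a == "*" or a.endswith(":*") for a in actions):
            findings.append(f"statement {index}: wildcard action")
    return findings


if __name__ == "__main__":
    # Hypothetical policy resembling a bucket left readable by anyone.
    example = {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": "*",
                "Action": "s3:GetObject",
                "Resource": "arn:aws:s3:::example-uploads/*",
            }
        ],
    }
    for finding in audit_bucket_policy(example):
        print(finding)
```

Run as a pre-deployment gate, a non-empty result would block the release. The same check run on a schedule against live configuration is one form of the continuous monitoring described above.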

“Most importantly, AI-aware standards require collaboration,” Gureiev said. “Product teams, engineers, designers, and security leaders must align around a shared understanding that intelligence increases complexity, and complexity demands stronger governance.”

When security is integrated early, it strengthens innovation rather than slowing it.

For executive teams, this is no longer a technical implementation detail. It is a strategic decision about risk tolerance, brand resilience, and long-term competitiveness.

“Organizations that fail to elevate AI security to the boardroom level are not just exposing systems, they are exposing reputation and market position,” Gureiev said.

Why AI Security Is Now a Brand Trust Issue

For brands, this goes beyond systems and infrastructure. It comes down to trust and loyalty.

AI tools often process highly personal information, including photos, voice recordings, and usage patterns, all things people don’t share lightly. Most users don’t think about where that data travels, and expect it to be handled responsibly.

But when that expectation is broken, the impact isn’t limited to a line item on a balance sheet.

People hesitate. They question the product. Regulators take a closer look. Competitors gain ground.

And once trust slips, earning it back takes far more effort than protecting it in the first place.

“In a marketplace where AI capabilities are rapidly becoming standard, responsible development is the true differentiator,” Gureiev said.

“Companies that demonstrate rigorous safeguards signal stability and long-term thinking, and show that innovation and protection can coexist.”

AI Security as a Competitive Advantage

The leak is not the headline. It is a warning.

The next generation of AI leaders will not be defined by how advanced their features are, but by how resilient their systems prove to be.

“As AI regulation matures and customer expectations around data protection intensify, the market will increasingly reward companies that treat AI security as core infrastructure rather than reactive compliance,” Gureiev said.

And in the race to build smarter applications, the companies that endure will be the ones that choose discipline over speed every time.