AI Moderation & Brand Reputation: Key Findings

- Keyword filters can’t keep up with today’s pace. AI moderation spots and removes harmful content faster and with greater accuracy.

- Fast moderation keeps campaigns safe, protects visibility, and has cut acquisition costs by as much as 19%.

- Clear rules and preparation give brands an edge, driving ROI gains of more than 300% in some cases.

Toxic content online isn’t just a community issue. It’s a brand risk and a public safety problem.

In fact, Facebook, Instagram, TikTok, Twitter, and YouTube collectively fail to act on 89% of posts containing anti-Muslim hatred and Islamophobia, even after being reported, according to a report from the Center for Countering Digital Hate.

This kind of moderation gap erodes trust, damages campaign performance, and can silence the very communities brands aim to support.

The takeaway for brands and agencies? Content safety is a competitive advantage in winning and keeping clients. Brands that handle moderation well safeguard their reputation and ad performance while maintaining high-value engagement.

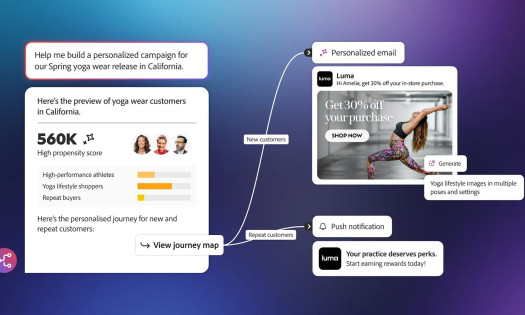

Some companies are turning to AI tools to manage these risks in real time.

Among them is Arwen, a moderation platform designed to help brands filter toxicity, protect creators, and surface constructive engagement across paid and organic channels.

I spoke with Arwen CEO Matthew McGrory to understand what’s actually working on the front lines of content moderation.

In our conversation, he talked about the most common pitfalls, the limits of keyword filtering, and how smart systems are helping brands stay ahead of harmful content online.

Who Is Matthew McGrory?

Matthew McGrory is CEO and co-founder of Arwen AI Limited, where he’s on a mission to help brands revolutionize their social conversations. Matt leads Arwen’s drive to use AI for good, putting brands in control of their paid and organic comments by removing toxicity, surfacing the positive, and protecting teams and communities at scale.

Don’t Rely on Human Moderation or Keyword Filters Alone

A lot of brands still depend on human teams to scan comments or use simple word filters.

The problem is, neither approach works well when content is coming in constantly and in different forms.

Manual teams get overwhelmed, and keyword filters are easy to outsmart.

Matthew explains that bad actors often twist language to avoid detection.

“Bad actors use slang, emojis, or ‘algospeak’ to dodge basic filters,” he says.

“You end up with both false positives (good comments hidden) and false negatives (bad stuff gets through), and wanted comments just slip through the net.”
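To make the two failure modes concrete, here is a minimal Python sketch of a plain word-list filter. The blocklist, the function name `naive_keyword_filter`, and the sample comments are all hypothetical and chosen only for illustration; this is not how Arwen or any specific platform works.

```python
import re

# Hypothetical blocklist, for illustration only.
BLOCKLIST = {"scam", "trash"}


def naive_keyword_filter(comment: str) -> bool:
    """Return True if a plain word-list match would hide the comment."""
    # Split the lowercased comment into runs of letters and look each one up.
    words = re.findall(r"[a-z]+", comment.lower())
    return any(word in BLOCKLIST for word in words)


# Made-up sample comments covering the cases described above.
comments = [
    "This product is a scam",             # harmful and caught
    "This product is a sc4m",             # harmful, but obfuscated
    "Finally an app that is not a scam",  # supportive, but hidden anyway
]

for comment in comments:
    print(naive_keyword_filter(comment), "|", comment)
```

The second comment gets through because one swapped character breaks the match (a false negative), while the third is hidden even though it defends the brand (a false positive). A filter that only sees words cannot tell those cases apart.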

When moderation systems fail, both your audience and your message get buried.

For marketing leaders choosing agency partners, an agency’s moderation skills signal operational maturity. Agencies that demonstrate smart, scalable moderation processes can position themselves as safer, more trusted options for brand campaigns.

Act Quickly to Keep Campaigns Safe

Some of the biggest risks happen during moments when brands are trying to do good, like running inclusive or advocacy-based campaigns.

If harmful comments aren’t removed fast, they can take over the conversation, push away supporters, and even affect the people featured in the content.

Matthew shares an example from a dating app.

“Before Arwen, toxic comments would pile up, hurting their reputation, creators, and ad results. With Arwen, toxic comments were removed instantly, making campaigns safer and more effective.”

This shift didn’t just make the space safer. It also led to a 19% drop in customer acquisition costs for the brand.

In addition to being a social media operations issue, this is also a client retention factor. Agencies that can show they respond to toxic content in seconds, not hours, can prove they protect both campaign sentiment and ROI.

Reduce Bias by Combining People and Technology

People often assume AI is more rigid or error-prone than humans, but it’s the opposite when done well.

Trained models that are regularly reviewed by diverse teams can actually apply rules more consistently than tired human moderators.

“AI can actually be less biased than humans,” Matthew says.

“Well-trained models (reviewed and corrected by diverse teams) outperform humans, especially at scale, and avoid unconscious bias and fatigue-driven mistakes.”

The goal isn’t to replace people. Instead, it’s to help them spot more issues with fewer slip-ups.
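One common way to combine the two is to let the model act alone only when it is very sure and to send borderline cases to a person. The Python sketch below assumes a hypothetical toxicity score between 0 and 1 from some model; the function name `route_comment` and both thresholds are illustrative assumptions, not values from Arwen.

```python
def route_comment(toxicity_score: float,
                  remove_at: float = 0.9,
                  review_at: float = 0.5) -> str:
    """Decide what happens to a comment given a model's toxicity score.

    The score and both thresholds are assumptions for this sketch.
    """
    if not 0.0 <= toxicity_score <= 1.0:
        raise ValueError("toxicity_score must be between 0 and 1")
    if toxicity_score >= remove_at:
        return "remove"        # model is confident: act automatically
    if toxicity_score >= review_at:
        return "human_review"  # borderline: a person makes the call
    return "keep"              # likely fine: leave it visible


for score in (0.97, 0.62, 0.08):
    print(score, "->", route_comment(score))
```

Reviewer decisions on the middle band are also the natural source of corrections for the model, which is how the review loop Matthew describes keeps it consistent over time.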

In a competitive pitch, showing that your moderation is fair and consistent sets you apart from agencies that can’t.

Move Fast to Protect Engagement and Visibility

Toxic content signals to algorithms that your posts aren’t safe for wide reach.

Removing bad comments quickly shows your community that it’s okay to speak up, and gives your positive content a better chance of being seen.

“Every extra second a toxic comment is visible damages brand trust and hurts engagement,” Matthew says. “On average our clients see a 21.3% increase in engagement (likes per post, comments per post, virality per post etc).”

For marketing teams and agencies, this lift in engagement translates into more efficient paid spend and stronger organic reach, which is tangible proof that moderation impacts both performance and visibility.

The faster a brand responds to the good stuff, the more momentum those conversations get.

Set Clear Guidelines Before Bringing in Moderation Tools

AI tools can help clean up your channels, but they need clear direction. This means deciding what’s off-limits and what matters most before the tech kicks in.

Matthew outlines five steps brands should take before rolling out AI-powered moderation:

- Audit your pain points – Figure out how toxicity, spam, or slow responses are affecting your team and brand.

- Define red lines – Be specific about what you want to block or highlight.

- Get teams aligned – Make sure legal, marketing, support, and comms are on the same page.

- Test on your real content – See how the system performs on your actual social channels.

- Track and improve – Use data to keep improving your filters and response speed.
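The last two steps can be as simple as having a person label a sample of real comments and comparing those labels with what the tool did. The Python sketch below is one way to do that, assuming a hypothetical `LabeledComment` record and made-up sample data; it measures how often harmful comments were missed and how often good comments were wrongly hidden.

```python
from dataclasses import dataclass


@dataclass
class LabeledComment:
    text: str
    is_harmful: bool  # the human reviewer's judgment
    was_hidden: bool  # what the moderation tool actually did


def error_rates(sample: list[LabeledComment]) -> tuple[float, float]:
    """Return (missed_rate, wrongly_hidden_rate) for a labeled sample."""
    harmful = [c for c in sample if c.is_harmful]
    benign = [c for c in sample if not c.is_harmful]
    missed = sum(1 for c in harmful if not c.was_hidden)
    wrongly_hidden = sum(1 for c in benign if c.was_hidden)
    # Guard against empty groups so a small sample does not divide by zero.
    missed_rate = missed / len(harmful) if harmful else 0.0
    wrongly_hidden_rate = wrongly_hidden / len(benign) if benign else 0.0
    return missed_rate, wrongly_hidden_rate


# Made-up sample: 2 harmful comments (1 missed), 4 benign (1 wrongly hidden).
sample = [
    LabeledComment("abusive reply", True, True),
    LabeledComment("abusive reply with emojis", True, False),
    LabeledComment("great campaign", False, False),
    LabeledComment("where can I buy this?", False, False),
    LabeledComment("this is not a scam, I use it daily", False, True),
    LabeledComment("love the creator", False, False),
]

missed_rate, wrongly_hidden_rate = error_rates(sample)
print(f"missed harmful: {missed_rate:.0%}, wrongly hidden: {wrongly_hidden_rate:.0%}")
```

Tracking both numbers over time, rather than a single accuracy figure, shows whether a rule change reduced one kind of error at the cost of the other.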

Agencies that follow these steps can present prospective clients with a clear, rules-first approach to content safety, which is a growing requirement in brand RFPs.

“A client recently recorded over 300% ROI from using Arwen across their paid and organic,” Matthew says.

It’s proof that clear prep pays off.

Leave Outdated Myths Behind

There are still a few misconceptions stopping brands from acting on moderation, especially when it comes to using tech.

Matthew points to three that come up often:

- AI can run moderation entirely on its own.

- Basic keyword filters are sufficient for content control.

- Removing harmful comments means censoring open dialogue.

Each one misses the mark. Smart tools help surface the good and get rid of what’s violating clear policies.

“Smart AI can protect open conversation — by removing only what breaks the rules, and surfacing valuable comments faster,” he says.

Prepare for More Complex Content and Smarter Detection

The way people communicate online keeps evolving.

It’s no longer just text. Comments often include images, video, emojis, and layered meaning. Brands need systems that can read context across different formats and languages.

Matthew says this is the next big leap.

“Real-time, multimodal moderation (text, images, emojis, video) is now table stakes.”

Looking ahead, platforms like Arwen are focusing on spotting harmful patterns before they blow up and building systems that keep learning from real-world use.

Moderation isn’t just a technical challenge anymore.

It’s a human one, and solving it means building systems that keep learning, adapting, and responding in real time.

AI Moderation & Content Safety FAQs

What is AI-powered moderation and why do brands need it?

AI‑powered moderation uses trained algorithms to detect and remove toxic content in real time. Brands need it to ensure fast, scalable protection, reduce bias, and preserve community trust on social media platforms.

How does moderation speed impact engagement?

Faster removal of toxic comments shields brand reputation and encourages more respectful participation. It also enables positive comments to surface quickly, boosting algorithmic reach and overall engagement.

Can AI moderation be biased or unfair?

When models are trained and continuously reviewed by diverse teams, AI can perform more consistently and with less bias than human moderators subject to exhaustion or subjective judgment.

Do brands still need humans if they use AI?

Yes. Human-in-the-loop moderation enables quality control, handles edge cases, and fine-tunes AI models. The most effective systems combine AI automation and human oversight.

How does an agency’s moderation approach influence a brand’s hiring decisions?

A clear, documented moderation process demonstrates operational maturity, risk management, and audience care. These factors often influence which agency a brand chooses to manage its campaigns.