Growth of Vibe Coding Among Startups: Key Findings

- 25% of YC Winter 2025 startups ran on codebases that were 95% AI-generated, showing how quickly vibe coding has become part of how startups build.

- Startups adopt AI coding tools at 20% higher rates than enterprises, gaining speed but taking on more maintenance risk.

- Unico Connect says AI coding requires guardrails, pattern-level reviews, and AI-assisted maintenance to turn speed into a long-term engineering advantage.

Reporting by TechCrunch on the Y Combinator Winter 2025 cohort highlighted that 25% of startups had codebases that were 95% AI-generated.

These weren’t weekend experiments or random people testing out the capabilities of vibe coding.

They were funded companies building real products, shipping to real users, and growing at 10% week-over-week.

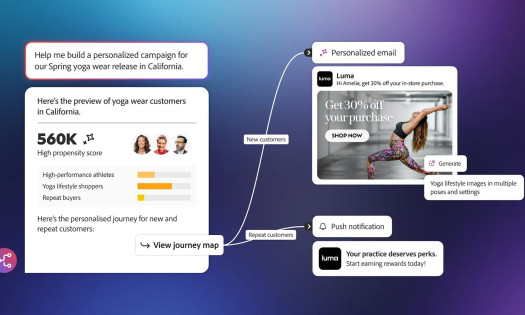

Given this, it isn’t surprising that vibe coding is growing rapidly, both in adoption and in investment.

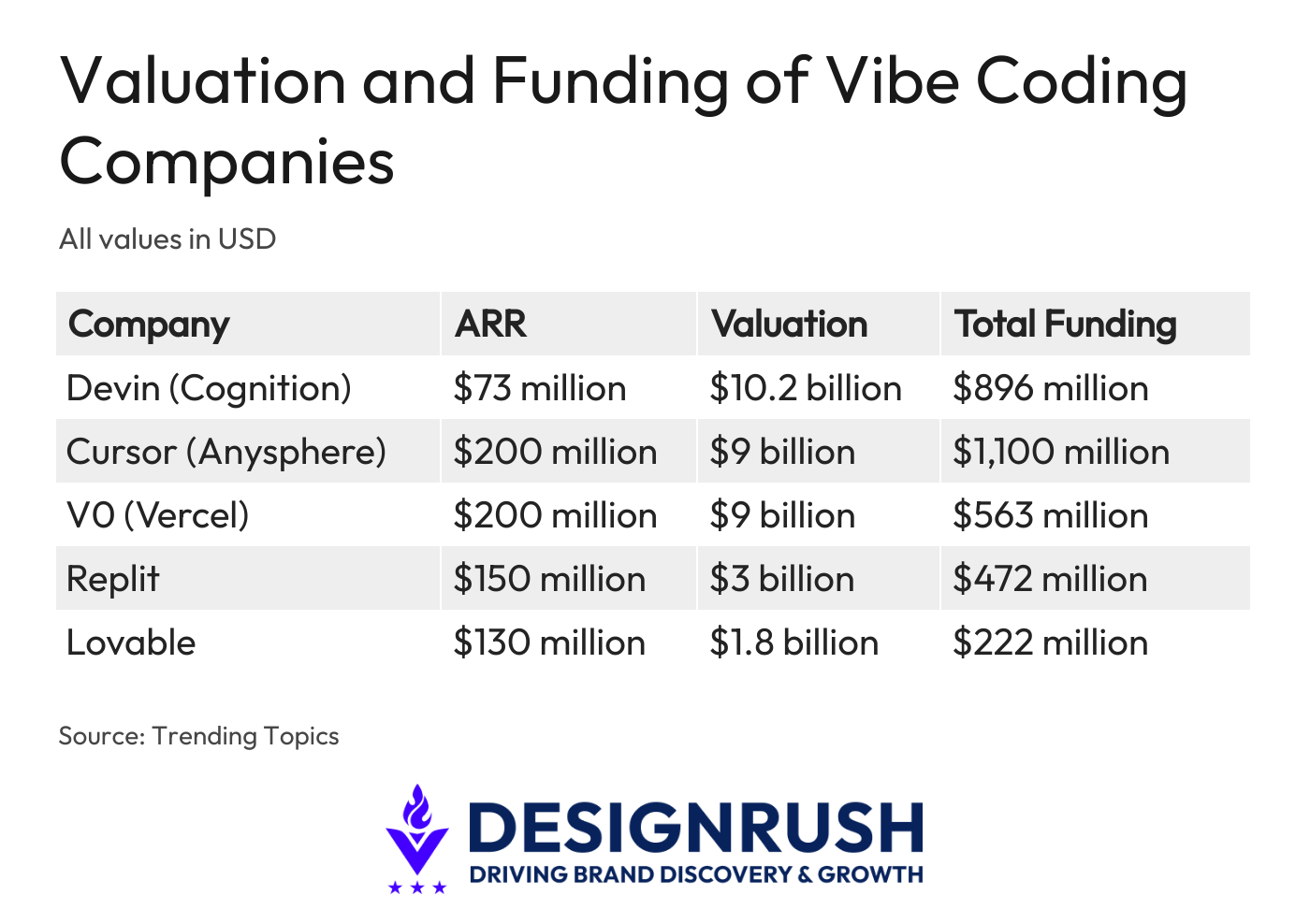

In fact, vibe coding startups such as Cognition, Lovable, Replit, and Cursor saw their combined valuations grow by at least 350% year-over-year, from $7–8 billion in mid-2024 to over $36 billion in 2025.
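Working the reported endpoints through the standard year-over-year growth formula shows where the 350% figure comes from and why it is the conservative end of the range:

```latex
\[
\text{YoY growth} = \frac{V_{2025} - V_{2024}}{V_{2024}}, \qquad
\frac{36 - 8}{8} = 3.5 = 350\%, \qquad
\frac{36 - 7}{7} \approx 4.14 \approx 414\%
\]
```

Because the 2025 valuation is reported as "over" $36 billion, each result is a lower bound for its starting value.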

Research from Implicator.ai shows startups adopt AI coding tools at 20% higher rates than large enterprises, and the results are showing up in speed, iteration cycles, and capital efficiency.

But speed creates its own problems.

The question starting to surface across the industry is: what happens to these codebases six months, twelve months, and two years down the line?

The Maintenance Problem Nobody Planned For

AI is remarkably good at generating code that works. It is less consistent at generating maintainable code.

When you ask an AI tool to build a feature, it produces something functional.

But when you ask it to iterate on that feature five or ten times, issues may arise.

Each iteration may solve the immediate problem, but it could also introduce subtle inconsistencies in naming conventions, architectural patterns, and dependency management.

The codebase grows quickly, which feels productive, but as these inconsistencies accumulate, its internal structure becomes increasingly fragmented.

Different sections of the application may follow different patterns, not because multiple developers made different choices, but because the AI made different choices across sessions.

The result is what some engineers have started calling a “technical debt accelerator”: code that ships fast but becomes progressively harder to modify, debug, and extend.

For early-stage startups moving fast, this trade-off can be acceptable.

But in my experience working with enterprises from all over the world, that trade-off quickly becomes a serious operational risk for companies that reach product-market fit and need to scale.

Why Traditional Code Review Falls Short

Most engineering teams rely on code review as their primary quality gate.

But code review processes designed for human-written code do not translate directly to AI-generated codebases.

There are two main reasons. First, the volume is different: AI produces code faster than any human developer can, which means review queues grow quickly.

Second, the patterns are different: AI-generated code often passes functional tests while violating architectural conventions that a human developer would have followed instinctively.

The challenge is that AI writes code without the institutional context that experienced engineers carry.

It doesn’t know that your team decided to standardize on a particular data access pattern six months ago. It doesn’t remember that a specific module was designed to be stateless for a reason.

Each generation starts from scratch, so unless it is explicitly told, the AI does not account for the accumulated decisions that give a codebase its coherence.

What Actually Works: Building Guardrails Before the Code

At Unico Connect, we write a significant portion of our code using AI tools.

Over 80% of our codebase is AI-assisted, which means we have dealt with these maintenance challenges firsthand.

The approaches that have worked for us aren’t theoretical. They came from shipping production code and dealing with the consequences when standards slipped.

From there, we made three important shifts:

1. Treat AI as a co-author that needs explicit guidance

In other words, AI isn’t a tool that can be left to its own judgment.

This means teams must have established conventions before the AI starts generating code. These include:

- Directory structures

- Naming patterns

- Module boundaries

- Dependency rules

When these are defined upfront and embedded in the development environment, AI tools produce more consistent output because they have context to work within.
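Dependency rules are easiest to enforce when they are written down as data and checked automatically. The sketch below is a minimal Python example, assuming a project organized into top-level packages named `api`, `services`, `repositories`, and `models` (hypothetical names) with a simple layering rule. It uses the standard library `ast` module to read a module's imports and report any that cross a forbidden boundary; relative imports are skipped to keep it short.

```python
import ast

# Hypothetical layering rules: each top-level package may import only the
# project packages listed for it. Rename these to match your own layout.
ALLOWED_IMPORTS: dict[str, set[str]] = {
    "api": {"services", "models"},
    "services": {"repositories", "models"},
    "repositories": {"models"},
    "models": set(),
}


def imported_top_level_packages(source: str) -> set[str]:
    """Return the top-level package names a module imports (absolute imports only)."""
    packages: set[str] = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            for alias in node.names:
                packages.add(alias.name.split(".")[0])
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # Relative imports (level > 0) are skipped in this sketch.
            packages.add(node.module.split(".")[0])
    return packages


def boundary_violations(package: str, source: str) -> list[str]:
    """List imports in `source`, a module inside `package`, that break the rules."""
    allowed = ALLOWED_IMPORTS.get(package, set())
    violations = []
    for imported in sorted(imported_top_level_packages(source)):
        # Ignore stdlib/third-party packages and imports from the same package.
        if imported not in ALLOWED_IMPORTS or imported == package:
            continue
        if imported not in allowed:
            violations.append(f"{package} must not import {imported}")
    return violations


if __name__ == "__main__":
    # An AI-generated API handler that reaches past the service layer.
    generated = (
        "import json\n"
        "from repositories.users import UserRepository\n"
        "from models.user import User\n"
    )
    for violation in boundary_violations("api", generated):
        print(violation)  # api must not import repositories
```

A check like this can run in CI on every AI-generated change, and the same rules can be shared with the AI tool as context, so the generator and the checker work from one definition.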

2. Rethink how you review generated code

Rather than reviewing individual pull requests in isolation, the focus needs to move toward pattern-level audits that answer questions like:

- Does the new code follow the same architectural approach as the rest of the module?

- Are there emerging inconsistencies in how similar problems are being solved across the codebase?

These are the questions that catch drift before it compounds.
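Parts of a pattern-level audit can be automated. The Python sketch below checks one narrow signal of drift across a whole set of modules rather than a single pull request: whether the functions in a module mix naming styles such as `snake_case` and `camelCase`, which can happen when different AI sessions settle on different conventions. The module paths are hypothetical, and naming is only a proxy; a check like this will not catch architectural inconsistencies on its own.

```python
import ast
import re
from collections import Counter

# snake_case, allowing leading/trailing underscores (e.g. _helper, __init__).
SNAKE_CASE = re.compile(r"^_*[a-z][a-z0-9]*(_[a-z0-9]+)*_*$")
# lowerCamelCase with at least one interior capital (e.g. getUser).
CAMEL_CASE = re.compile(r"^_*[a-z]+[A-Z][A-Za-z0-9]*$")


def naming_style(name: str) -> str:
    """Classify a function name as snake_case, camelCase, or other."""
    if SNAKE_CASE.match(name):
        return "snake_case"
    if CAMEL_CASE.match(name):
        return "camelCase"
    return "other"


def function_name_styles(source: str) -> Counter:
    """Count the naming styles used by every function defined in a module."""
    styles: Counter = Counter()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            styles[naming_style(node.name)] += 1
    return styles


def mixed_style_modules(modules: dict[str, str]) -> dict[str, Counter]:
    """Report modules whose function names mix more than one naming style."""
    report: dict[str, Counter] = {}
    for path, source in modules.items():
        styles = function_name_styles(source)
        if len(styles) > 1:
            report[path] = styles
    return report


if __name__ == "__main__":
    # Hypothetical module contents; in practice, read every .py file in the repo.
    modules = {
        "billing/invoices.py": "def create_invoice(): ...\ndef send_invoice(): ...\n",
        "billing/payments.py": "def charge_card(): ...\ndef refundPayment(): ...\n",
    }
    for path, styles in mixed_style_modules(modules).items():
        print(path, dict(styles))
```

Running it periodically over the full codebase, instead of only on the diff under review, is what lets it surface the slow, cross-session drift described above.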

The teams that struggle with AI-generated code are usually the ones that treat AI as a replacement for engineering discipline.

It is not.

If anything, AI-generated code demands more rigor, not less, because the volume is higher and the inconsistencies are subtler.

The companies that figure this out early will have a real advantage as these codebases scale.

3. Use AI as a maintenance tool

The same models that generate code can be directed to audit it. This includes scanning for pattern violations, flagging inconsistencies, and suggesting refactors.

This is not a fully automated process, but it turns weeks of manual review into hours of guided analysis.
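One way to direct a model toward auditing rather than generating is to give it the team's recorded conventions alongside the code and ask for findings in a fixed format that a reviewer can check. The Python sketch below assumes conventions are kept as a short list of rules; `Convention`, `build_audit_prompt`, `parse_findings`, the rule IDs, and the reply format are all hypothetical, and the model call itself is omitted because it depends on the provider. The example rules echo the kinds of decisions mentioned earlier, such as a standard data access pattern and a module meant to stay stateless.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Convention:
    """One recorded team decision the model should audit against (hypothetical format)."""

    rule_id: str
    description: str


def build_audit_prompt(conventions: list[Convention], path: str, source: str) -> str:
    """Assemble a prompt asking a model to flag convention violations in one file.

    The call that sends this prompt to a model is deliberately left out,
    because it depends on the provider and SDK a team uses.
    """
    rules = "\n".join(f"- [{c.rule_id}] {c.description}" for c in conventions)
    return (
        "Audit the file below for consistency with these team conventions.\n"
        f"{rules}\n\n"
        f"--- BEGIN {path} ---\n{source}\n--- END {path} ---\n\n"
        "Report each violation on its own line as: RULE_ID | line number | explanation.\n"
        "Suggest refactors only if they preserve behavior. If nothing violates a rule, reply NONE."
    )


def parse_findings(reply: str) -> list[tuple[str, int, str]]:
    """Turn 'RULE_ID | line | explanation' lines into findings for a human reviewer."""
    findings = []
    for raw in reply.strip().splitlines():
        parts = [part.strip() for part in raw.split("|", 2)]
        # Skip anything that does not match the requested format (e.g. "NONE").
        if len(parts) == 3 and parts[1].isdigit():
            findings.append((parts[0], int(parts[1]), parts[2]))
    return findings


if __name__ == "__main__":
    conventions = [
        Convention("DATA-1", "Database access goes through repository classes."),
        Convention("STATE-1", "Modules under handlers/ must stay stateless."),
    ]
    print(build_audit_prompt(conventions, "handlers/users.py", "import sqlite3\n..."))
    # A reply in the requested format, as a reviewer might receive it.
    print(parse_findings("DATA-1 | 1 | Handler opens a raw sqlite3 connection."))
```

Parsing the reply into structured findings keeps a person in the loop: the model narrows down where to look, and an engineer decides which findings are real.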

The Bigger Picture

The vibe coding movement is not going away. The economics are too compelling, and the tooling is improving rapidly.

But the companies building on AI-generated codebases today will face a sorting event over the next few years.

Those who invested in maintainability, through clear standards, structured review processes, and a deliberate approach to AI as a co-author, will scale.

On the other hand, those who optimized purely for speed will find themselves spending more time fixing what they built than building what comes next.

For engineering leaders evaluating their own AI adoption, the question is not whether to use AI coding tools. That decision has largely been made by the market.

The question is whether you are building the discipline around these tools to make the output sustainable.