Why CRO Fails: Key Findings

- Half of marketers use CRO, yet many programs still stall. Focusing only on buttons and headlines misses bigger journey issues, so audit the full path before testing.

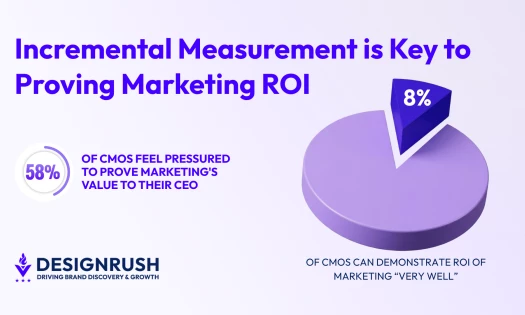

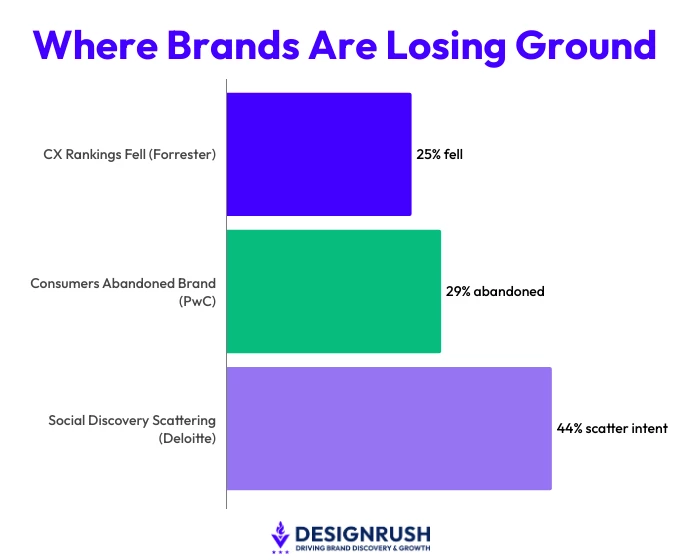

- 25% of U.S. brands saw customer experience rankings drop in 2025 while only 7% improved, a sign that isolated optimization falls short, so align messaging and structure across channels to retain customers.

- Users often discover content on social media and then convert elsewhere, highlighting that trust and clarity drive decisions, so design journeys that guide users and build confidence before chasing small wins.

Half of marketers report using conversion rate optimization, which ranks second among optimization tactics, according to HubSpot.

That looks like progress. The reality tells a different story.

Many teams focus on buttons, headlines, and isolated tests, while the customer is making decisions across multiple steps and channels. When the path is broken, tests can still run perfectly…but conversions stall.

The wider data confirms this pattern.

In the U.S., 25% of brands saw their customer experience rankings drop in 2025, according to Forrester. Only 7% improved.

PwC puts a number to the fallout: 29% of consumers stopped buying or using a brand after bad experiences, whether online or in person.

The path to conversion is just as unstable. Deloitte found that 44% of fans discover content on social media, then leave to watch, listen, or buy somewhere else.

Small test tweaks can’t fix that. The tests work, but they run alone. And that’s why many CRO programs stall.

Editor's Note: This is a sponsored article created in partnership with Isadora Agency.

Why Isolated CRO Stalls

That’s the trap: teams test a headline, swap a button color, maybe tighten a form field, then call it optimization.

And those are useful moves, but they only work when the underlying experience is already doing its job.

If the value proposition is fuzzy, trust is weak, the page flow is confusing, the message differs across channels, or the site feels sluggish…a test may improve one step while the overall journey still loses customers at other points.

The customer isn’t grading the homepage in isolation.

They’re grading the whole experience, and they are doing it with their wallet.

That is why Isadora Marlow-Morgan, founder of Isadora Agency, keeps coming back to the same point: CRO gets stuck when it stays too close to the interface and too far from the decision.

Her background in design and web development gives her a practical view of the problem, and her agency’s own work leans hard into customer journey design, information architecture, and clarity.

“A lot of CRO work gets stuck at the button level. The real issue is whether the experience makes sense from the first click to the final decision.

“If the story is unclear, the next step feels uncertain, or the content keeps asking people to do the work themselves, the test result only tells part of the story.

“The next step for CRO is to design the journey with more care. Teams need to build confidence, reduce confusion, and make the decision path feel obvious before they start chasing tiny wins on isolated pages.”

CRO Only Works When the Path Makes Sense

That approach shows up in the company’s case work.

CRO can only deliver meaningful results when the customer journey is clear, structured, and builds trust. Otherwise, testing buttons or headlines produces little lift.

For Texas State Technical College, the team reviewed nearly 20,000 pages across subdomains and used surveys, interviews, and user journeys to map out a clear site structure.

The problem was scale and confusion. The solution was structure.

The result was a student-focused marketing platform that made it easier to surface the right information and guide users through programs, financial aid, and support:

- Increased application starts by 453%

- Increased program views by 31% across 50+ offerings

- Increased application page traffic by 84%

Tilton School offers the same lesson in a different setting.

The school needed a better way to connect with prospective families, since print viewbooks were no longer doing the job.


Isadora Agency built a dynamic digital viewbook around strategy, storytelling, and user journey design.

Within weeks, Tilton saw a spike in web engagement, stronger email conversion, and better SEO performance:

- 27% page view growth

- 59% increase in request info traffic

- Top 10 Google ranking for “boarding school viewbook”

And that’s the point. The work wasn’t centered on a single test; it was centered on how families actually explore, compare, and decide.

Deloitte’s 2025 Connected Consumer survey adds another useful reminder.

Respondents aligned with “trusted trailblazers” spend 62% more annually on tech devices and 26% more monthly on tech services than those aligned with slower-moving brands.

That suggests trust and perceived control are doing real commercial work.

So if the experience leaves people guessing, testing a headline won’t fix the bigger problem. The journey has to earn confidence before it can earn conversion.

And CRO still matters. Nobody is suggesting otherwise.

The point is that the discipline works best when it’s tied to the full customer journey, clean measurement, and a clear trust layer.

Without that, teams get a lot of activity and very little lift.

With it, optimization starts to behave like a business system instead of a collection of tidy little edits.

What Brands and Agencies Should Do Next

The issue shows up the same way across teams.

More tests go live, dashboards fill up, and the numbers barely move.

The problem sits earlier in the journey. When the experience is hard to follow, users hesitate. When they hesitate, they leave.

So the work starts there.

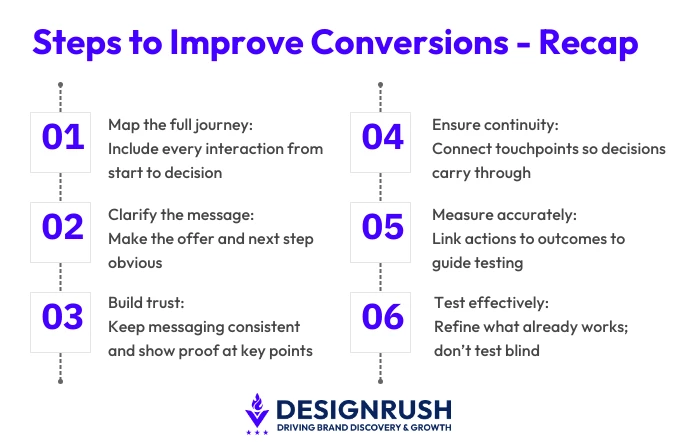

1. Look at the full path from first interaction to decision.

Not just the landing page. Not just the checkout.

The whole thing. Where do people slow down? Where do they drop off? Those moments point to confusion, and confusion is what blocks conversion.

2. Clarity is usually the first problem.

If someone lands on a page and has to stop and figure out what’s being offered, they’re already slowing down. Most won’t recover from that.

That hesitation shows up in the next step. Fewer clicks, more exits, less follow-through.

The fix is basic. Say what the offer is and show what to do next. No guessing.

People move when it’s clear what they’re looking at and what comes next.

3. Trust builds or breaks the decision.

If an ad promises one thing and the landing page says something slightly different, doubt creeps in. And if important details are buried or missing, users start second-guessing.

That slows everything down. So, keep the message consistent, show proof where decisions happen, and make it easy for someone to feel comfortable taking the next step.

4. Then there is the issue of continuity.

People rarely convert in one go. Instead, they move between channels, compare options, and come back later. If each touchpoint feels disconnected, they have to reprocess the decision every time.

That repetition weakens intent. Keep the story steady so each interaction adds to the last one instead of resetting it.

5. Measurement also plays a part.

When teams can’t clearly connect actions to outcomes, they end up testing without direction.

That might lead to small, isolated wins, but there’s no guarantee they’ll add up.

Clear metrics tied to actual business results make it easier to see what is working and what needs attention.

6. Testing still matters.

It just works better when the foundation is solid.

If the journey makes sense, if the message is clear, if the experience builds confidence, then testing can refine what is already in place. Without that, results stay limited because the core issues remain.
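For teams that want to put the first and fifth steps into practice, here is a minimal Python sketch of a journey audit. It measures how many users are lost at each step and how many make it from first interaction to final decision. The step names and counts are hypothetical, invented purely for illustration, and the functions `funnel_report` and `end_to_end_rate` are not part of any analytics tool. Real counts would come from whatever analytics platform a team already uses.

```python
from typing import NamedTuple


class StepReport(NamedTuple):
    step: str
    users: int
    drop_off_rate: float  # share of users from the previous step who did not continue


def funnel_report(steps: list[tuple[str, int]]) -> list[StepReport]:
    """Return the drop-off rate at each step relative to the step before it."""
    report = []
    previous = None
    for name, users in steps:
        if previous is None or previous == 0:
            rate = 0.0  # the first step has nothing to compare against
        else:
            rate = 1 - users / previous
        report.append(StepReport(name, users, rate))
        previous = users
    return report


def end_to_end_rate(steps: list[tuple[str, int]]) -> float:
    """Share of users at the first step who reach the last step."""
    first, last = steps[0][1], steps[-1][1]
    return last / first if first else 0.0


# Hypothetical journey counts, for illustration only.
journey = [
    ("ad_click", 10_000),
    ("landing_page", 6_000),
    ("program_page", 1_500),
    ("application_start", 900),
    ("application_submit", 450),
]

report = funnel_report(journey)
worst = max(report, key=lambda r: r.drop_off_rate)
print(f"Largest drop-off: {worst.step} ({worst.drop_off_rate:.0%})")
print(f"End-to-end conversion: {end_to_end_rate(journey):.1%}")
```

With these made-up numbers, the biggest loss happens between the landing page and the program page, not at the final form. That is the kind of signal that suggests where to look for confusion before running tests on a button or headline.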

Taking these steps at scale requires the right expertise and approach, which is why the choice of partner matters when tackling complex enterprise websites.


Marlow-Morgan sums it up clearly:

“Teams move into testing before they confirm that the experience supports the decision. If users cannot follow the path or understand the offer, results will stay limited.

“Start with the journey. Make sure it guides people from one step to the next without confusion. Once that is in place, testing becomes far more effective.”

Focus on fixing the journey first.

When the path is clear and the impact visible, testing generates gains that move conversions forward instead of producing small, isolated improvements.